NDA Lynn, an AI that can evaluate confidentiality agreements for free, is a perfect example of the role artificial intelligence will probably play in our lives.

For the past decade or so, Arnoud Engelfriet has been the Netherlands’ go-to guy for any question regarding the internet and the law. Also, his last name translates roughly to ‘Angelic Fries,’ which is awesome.

One of the services Engelfriet offered was checking whether NDAs should be signed. Non-disclosure agreements (NDAs) are pretty standard business contracts used to keep confidential information under wraps. They are quite common, but do require some scrutiny to establish what exactly constitutes a breach and that they don’t gag one forever.

“A businessman who needs his NDAs checked, but doesn’t have the budget to have them all checked, only needs to know which one really needs to be looked at by a professional,” he tells me.

“And if something is too expensive to do manually, but people need it a lot, it’s a good candidate for automation,” he concludes.

Checking NDAs is pretty standard work, but nonetheless time-consuming. So he put his computer science degree to good use and started building an AI that could help him out.

“Way back in 1993 I studied computer sciences at university. Back then there was no machine learning, or at least not in this way. For this project I used BigML, a cloud solution for machine learning that aims at making it more accessible to the masses.”

All Engelfriet needed was a good dataset, which he happened to have as a lawyer. “To start out with, I uploaded 300 NDAs from my archive, and labeled them by hand,” he tells me.

The dataset consisted of about 10,000 sentences, and to train the AI, each of those had to be marked according to whether it contained something untoward for an NDA.

“I put all that in the system, and that produced an AI. All it needed afterwards was a nice wrapper.”
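To get a feel for what “labeling sentences and putting them in the system” amounts to, here is a minimal sketch. It is not BigML and not Engelfriet’s model: it is a tiny naive Bayes word-count classifier, and the six training sentences, the `flag`/`ok` labels and the function names are all made up for illustration.

```python
import math
from collections import Counter

# Hypothetical hand-labeled sentences; the real archive held about 10,000.
TRAINING = [
    ("the obligations in this agreement continue in perpetuity", "flag"),
    ("recipient shall pay a penalty of one million euros per breach", "flag"),
    ("all information of any kind is deemed confidential forever", "flag"),
    ("this agreement terminates two years after signing", "ok"),
    ("information already public is not confidential", "ok"),
    ("either party may terminate with thirty days notice", "ok"),
]


def train(examples):
    """Count how often each word appears under each label."""
    word_counts = {}
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts.setdefault(label, Counter()).update(text.split())
    vocab = {word for counts in word_counts.values() for word in counts}
    return word_counts, label_counts, vocab


def classify(model, sentence):
    """Return the label with the highest naive Bayes log-score."""
    word_counts, label_counts, vocab = model
    total = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label, n in label_counts.items():
        score = math.log(n / total)  # prior: share of sentences with this label
        denom = sum(word_counts[label].values()) + len(vocab)
        for word in sentence.lower().split():
            if word in vocab:  # words never seen in training are ignored
                # Add-one smoothing so an unseen pairing never scores zero.
                score += math.log((word_counts[label][word] + 1) / denom)
        if score > best_score:
            best_label, best_score = label, score
    return best_label


model = train(TRAINING)
print(classify(model, "the obligations continue forever"))
print(classify(model, "this agreement terminates after two years"))
```

With only six sentences this is a toy, but the workflow is the same one described above: a person labels, the system counts, and the result gets a wrapper.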

Engelfriet is very realistic about how revolutionary NDA Lynn is. “It’s not very innovative, but that’s exactly what makes it powerful. AI has become a commodity.”

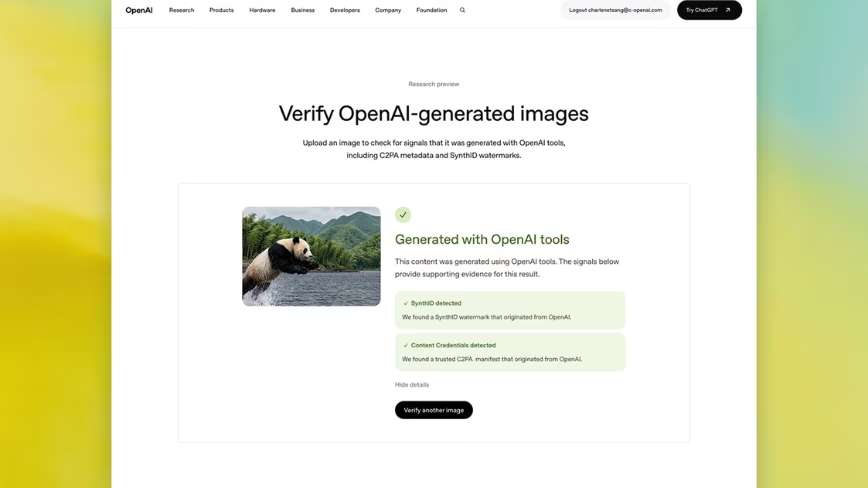

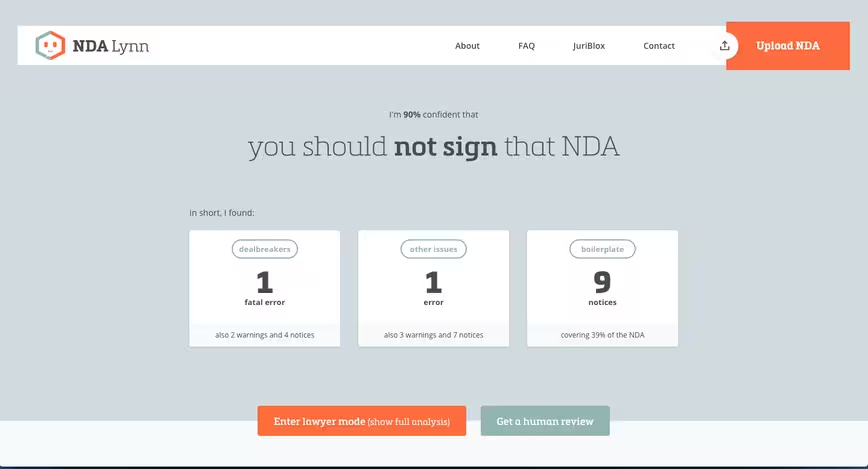

NDA Lynn itself is a machine learning system that has been trained to identify clauses in NDAs that don’t seem right. It then returns a clause-by-clause report on which ones seem fine, and which ones seem to require too much or are unclear.

It sounds trivial, but reviewing NDAs requires a significant amount of work. “Drafting or reviewing a non-disclosure agreement is an art and therefore requires significant amounts of work to get right.”

At the moment, NDA Lynn achieves an accuracy of 94.8 percent in the latest tests, which is remarkably high. A study published this week by LawGeex, a competing NDA AI, pitted lawyers against their system to check NDAs, with lawyers scoring a paltry 85 percent accuracy on average.

I tried out a few NDAs collected from colleagues, and most of them did contain some fishy clauses, which the system labels as ‘dealbreakers.’ But that doesn’t necessarily mean they’re not ok, Engelfriet admits.

“Imagine a reporter, for a story they look for sources, reach out to a few of them. You could train an AI to do that. But every reporter has certain biases. Some like talking to scientists instead of politicians or PR representatives, and that skews the AI in a certain direction.”

The same goes for NDA Lynn. Because it was trained by Engelfriet, who has certain biases against certain clauses, it takes over those biases and applies them to the NDA. The AI assigns more weight to the clauses that Engelfriet, as a lawyer, would assign more weight to.

Moreover, since it’s a machine that learns by association, Engelfriet had to weed out quirks that it picked up along the way.

He tells me that the machine consistently labeled NDAs written under California law as too strict, even though some of them were quite permissive. In the end, it turned out that his original training set contained a disproportionate number of strict NDAs written under California law, so the AI simply concluded all NDAs from California were too strict.
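A quirk like this can often be spotted before training by comparing how labels are distributed across groups. The sketch below is only an illustration of that idea, not how Engelfriet found the problem: the rows, the `strict`/`ok` labels, the function names and the 0.2 margin are all assumptions.

```python
from collections import Counter

# Hypothetical (governing law, label) pairs from a training set.
TRAINING_LABELS = [
    ("California", "strict"), ("California", "strict"),
    ("California", "strict"), ("California", "ok"),
    ("Netherlands", "strict"), ("Netherlands", "ok"),
    ("Netherlands", "ok"), ("Netherlands", "ok"),
]


def strict_rate_by_group(rows):
    """Share of documents labeled 'strict' within each group."""
    totals, strict = Counter(), Counter()
    for group, label in rows:
        totals[group] += 1
        if label == "strict":
            strict[group] += 1
    return {group: strict[group] / totals[group] for group in totals}


def skewed_groups(rows, margin=0.2):
    """Groups whose strict rate exceeds the overall rate by more than margin."""
    overall = sum(1 for _, label in rows if label == "strict") / len(rows)
    rates = strict_rate_by_group(rows)
    return sorted(g for g, rate in rates.items() if rate - overall > margin)


print(strict_rate_by_group(TRAINING_LABELS))
print(skewed_groups(TRAINING_LABELS))
```

A group that shows up here is not necessarily a problem, but it is a hint that the model may learn “California” as a shortcut for “too strict” instead of reading the clauses.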

This silly quirk sadly also illustrates another characteristic of AIs: before they take shitty work out of our hands, we’ll have to do quite some shitty work for them. To teach an AI to do a task we think is shitty, we have to do that shitty task A LOT.

One example of this is something a maker of interactive sex toys once told me. To make their toy react to the movements in porn videos, they hired a sweatshop full of workers to physically jerk off sensor-laden dildos to the beat of the people fucking on screen. Later, once AI tech had progressed enough, they trained it to take over that unfortunate job.

Training an AI to take over is an investment that should pay off in the end by cutting the number of hours we have to spend on shitty tasks. At the same time, we’re polarizing work into the fun stuff AI can’t do and the boring stuff we want an AI to take over.

In a way, this is exactly what Engelfriet also did with NDA Lynn: the NDAs that look fine – and would thus be a boring waste of time to review – get a thumbs-up, and the NDAs that contain irregular clauses get flagged and could be passed on to him for a human review – and to further train Lynn.
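That triage step is simple enough to sketch. The version below assumes the classifier hands back one verdict per clause; the verdict strings, the `triage` function and the sample clauses are hypothetical and not taken from NDA Lynn itself.

```python
def triage(clause_verdicts):
    """Route an NDA based on per-clause verdicts.

    clause_verdicts is a list of (clause_text, verdict) pairs, where the
    verdict is "ok" or anything else (for example "dealbreaker").
    """
    flagged = [clause for clause, verdict in clause_verdicts if verdict != "ok"]
    if flagged:
        # Anything irregular goes to a human, who can also relabel it
        # and feed it back into the training set.
        return {"decision": "human review", "flagged": flagged}
    return {"decision": "thumbs-up", "flagged": []}


boring_nda = [("Term: two years.", "ok"), ("Public info is excluded.", "ok")]
fishy_nda = [("Term: two years.", "ok"), ("Secrecy lasts forever.", "dealbreaker")]

print(triage(boring_nda))
print(triage(fishy_nda))
```

The design choice worth noticing is that the machine never rejects anything on its own: it only decides which documents are boring enough to skip.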

He’s also just released an enterprise version of Lynn that can be trained by companies to incorporate their own specific biases. Unlike Engelfriet, some companies might be fine with perpetual NDAs, and this version would allow them to train the AI to let those pass.

On the one hand, automating boring tasks to leave more time for interesting work is great news. But on the other, I can’t shake the image of a corporate headquarters basement filled with ‘AI trainers’ – basically glorified data entry grunts, endlessly feeding information into systems and telling them what is right and what is wrong.

In the grimmest version of our AI future, the polarization of work could lead to a divide between doing the shitty work of teaching AI how to do shitty work, and all the cool human work that can be done by fewer people – leading to more competition for those human jobs.

In a slightly less grim version, the models become better and better at training themselves, requiring less and less input from humans – leaving the grunts without a job.

But however it turns out, someone make me that source-contacting AI plz.

