One of the biggest rumors surrounding the iPhone 7 is that Apple is adding a second camera module (at least on the Plus model), and it makes a lot more sense than you might realize.

After all, other manufacturers have already caught on. LG’s G5 comes with an ultra-wide-angle lens, Huawei’s new P9 sports a dedicated black-and-white camera, and HTC beat everyone to the punch with the One M8’s depth-sensing camera a couple of years ago.

I know what you’re thinking – that it’s a gimmick (it certainly was on the M8). Apple should just spend its money building a better camera. Smartphone cameras still suck in low-light conditions, and it’s all about quality over quantity, right?

Well yes, except when quantity leads to better quality.

Smartphone sensors aren’t going to get that much better

Smartphones still have a long way to go before they can match up to current mirrorless and DSLR offerings with larger sensors, but it’s important to acknowledge that smartphone cameras are already really good.

The combination of hardware advancements in small sensors and smart software processing like fast auto HDR has made smartphone photography more viable for a multitude of conditions, especially for viewing on digital screens.

But there will be diminishing returns on sensor improvements, particularly considering that many people with a current-generation smartphone already consider its camera ‘good enough’ – just look at Flickr’s camera stats.

In other words, slightly better resolution or low light performance from a small sensor won’t make a huge difference, and manufacturers need to do more to differentiate their photography chops.

Multiple focal lengths allow for creative flexibility

I’ve shot the LG G5 and a Samsung S7 side by side for a while now. It turns out the S7 has (slightly) better image quality, but the G5 is the one I keep coming back to.

There’s a simple reason: the G5 has two focal lengths (basically, how wide the field of view is). Every time I take a photo with the ‘regular’ camera, I check to see what it would look like with the wide-angle camera, just in case. About half the time, it’s better.
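To put a rough number on how focal length maps to field of view, here is a minimal sketch using the standard angle-of-view relation for a simple rectilinear lens. The 35mm-equivalent focal lengths below are illustrative assumptions, not the measured specs of the G5 or any other phone, and the function name is made up for this example.

```python
import math

def horizontal_fov_degrees(focal_length_mm: float, sensor_width_mm: float = 36.0) -> float:
    """Horizontal angle of view for a rectilinear lens focused at infinity.

    sensor_width_mm defaults to 36 mm, the width of a full-frame sensor,
    so focal lengths are read as 35mm-equivalent values.
    """
    return math.degrees(2 * math.atan(sensor_width_mm / (2 * focal_length_mm)))

# Hypothetical 35mm-equivalent focal lengths for a 'regular' and a wide camera.
for label, focal_mm in [("regular", 28.0), ("wide", 12.0)]:
    print(f"{label} ({focal_mm:.0f}mm equivalent): "
          f"{horizontal_fov_degrees(focal_mm):.0f} degrees across")
```

The shorter the focal length, the wider the angle, which is why a second, shorter lens fits more of a tight room or a large group into the frame.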

There’s a reason professional photographers generally carry multiple lenses. Different focal lengths lend a different feeling to an image: they can make a portrait more flattering, or can simply be more practical for tight spaces and groups of people, or for long distances and closeups.

It’s not hard to see why Apple is rumored to be taking this approach.

You can effectively double the sensor size without creating a brick of a phone

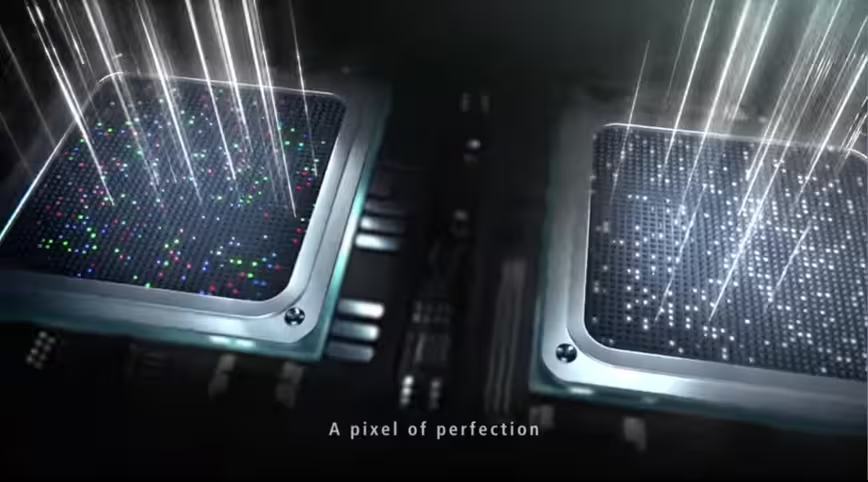

A second camera could bolster photography in more ways than a second focal length. Huawei, for instance, keeps the same focal length and resolution on both of its Leica-branded cameras, but makes one monochrome.

Leica already had experience making a black-and-white digital camera, the M Monochrom, which had two unique advantages over a color sensor:

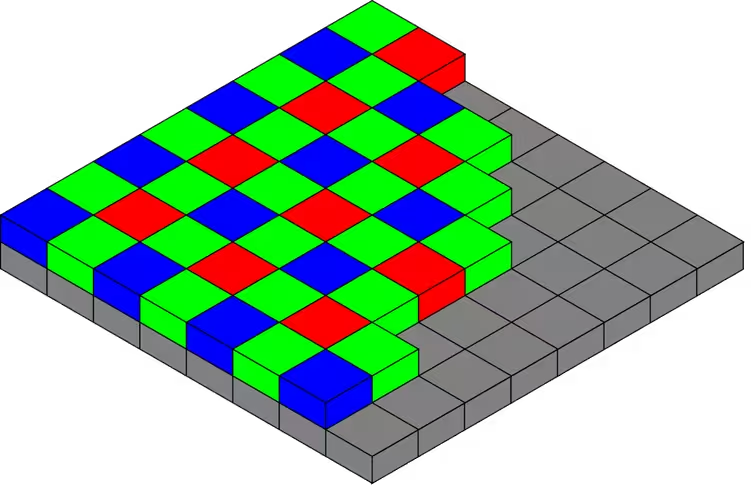

- Color sensors require something called a Bayer filter, which decreases the energy of the light hitting the sensor.

- That same filter significantly reduces sharpness, as a camera has to ‘guess’ what the right color is at each pixel location through a process called demosaicing, and noise is further spread across the three color channels.

That’s all to say the megapixel count in 99 percent of mobile cameras is misleading; it tells you the number of photosites on a sensor, but demosaicing means the ‘true’ resolution of an image will be lower.

Estimates vary, but that basically means an iPhone’s 12 megapixel camera may really only be 4-6 megapixels in practice. On a black-and-white sensor, each photosite corresponds to a real image pixel.
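To see where estimates like that come from, here is a small sketch that counts how many photosites actually measure each color. It assumes the common RGGB arrangement of the Bayer filter (an assumption; the layout of any particular phone sensor isn't stated here) and uses a tiny made-up grid, since the proportions are the same at any size.

```python
def bayer_sample_fractions(width: int, height: int) -> dict:
    """Fraction of photosites that measure each color in an RGGB Bayer mosaic."""
    tile = (("R", "G"),
            ("G", "B"))  # the 2x2 pattern that repeats across the sensor
    counts = {"R": 0, "G": 0, "B": 0}
    for y in range(height):
        for x in range(width):
            counts[tile[y % 2][x % 2]] += 1
    total = width * height
    return {color: n / total for color, n in counts.items()}

fractions = bayer_sample_fractions(8, 6)  # tiny illustrative grid
headline_megapixels = 12
for color, fraction in fractions.items():
    print(f"{color}: {fraction * headline_megapixels:.0f} MP measured directly")
```

Every other color value in the final image is interpolated from neighboring photosites. This doesn't pin down the ‘true’ resolution, which depends on the demosaicing algorithm and the scene, but it shows why the headline number overstates what the sensor measures.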

Huawei is using software to combine the color data from the normal sensor with the luminance data from the black-and-white sensor. That effectively doubles the sensor size, without making the phone incredibly thick like a Lumia 1020, which requires a large lens to match its sensor.
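The general idea can be sketched in a few lines. This is not Huawei’s algorithm, which isn’t public in this article; it’s a minimal illustration that assumes the two images are already perfectly aligned (a real pipeline would first have to correct for the offset between the two lenses) and uses the YIQ color space from Python’s standard library to separate brightness from color. The function names are hypothetical.

```python
import colorsys

def fuse_pixel(color_rgb, mono_luma):
    """Keep the color (I, Q) from the color pixel; take brightness (Y) from the mono pixel.

    color_rgb is an (r, g, b) tuple and mono_luma a single value, all in 0.0-1.0.
    """
    _, i, q = colorsys.rgb_to_yiq(*color_rgb)
    return colorsys.yiq_to_rgb(mono_luma, i, q)

def fuse_images(color_img, mono_img):
    """Fuse two same-sized, pre-aligned images stored as nested lists of pixels."""
    return [
        [fuse_pixel(c, m) for c, m in zip(color_row, mono_row)]
        for color_row, mono_row in zip(color_img, mono_img)
    ]

# Made-up 1x2 example: noisy color pixels plus cleaner brightness readings.
color_img = [[(0.2, 0.4, 0.6), (0.5, 0.5, 0.5)]]
mono_img = [[0.5, 0.8]]
print(fuse_images(color_img, mono_img))
```

The appeal of splitting things this way is that fine detail lives mostly in brightness, so the sharper, less noisy monochrome sensor supplies it while the color sensor only has to contribute hue.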

How Huawei implements it in practice is a whole different matter, but it’s a sound concept.

Shallow depth-of-field (blurred out backgrounds)

Smartphones can take amazing photos nowadays, especially in the daylight, but one thing they can’t imitate is the shallow depth of field (DoF) you get from a large sensor.

Unlike sharpness or dynamic range, DoF has particular physical constraints, and it’s virtually impossible to create a lens and sensor combination that would provide interesting DoF at smartphone sizes. Professional cameras are their size for a reason.

However, using a second lens allows you to measure depth information that can be used to effectively imitate realistic depth of field in post-processing. Moreover, you could adjust the amount of DoF to your liking.
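As a rough sketch of how that could work: two lenses a known distance apart see each object shifted by an amount that shrinks with distance, and the standard pinhole stereo relation turns that shift into depth. The blur rule, the `strength` knob and all the numbers below are assumptions for illustration, not any manufacturer’s implementation.

```python
def depth_from_disparity(disparity_px: float, focal_length_px: float, baseline_m: float) -> float:
    """Pinhole stereo relation: depth = focal length * baseline / disparity.

    disparity_px is how far a point shifts between the two images, in pixels.
    A shift of zero (or less) is treated as infinitely far away.
    """
    if disparity_px <= 0:
        return float("inf")
    return focal_length_px * baseline_m / disparity_px

def blur_radius_px(depth_m: float, focus_depth_m: float, strength: float = 8.0) -> float:
    """Hypothetical blur rule: blur grows with the gap in inverse depth from the focus plane.

    strength stands in for the 'how much background blur' slider.
    """
    return strength * abs(1.0 / depth_m - 1.0 / focus_depth_m)

# Made-up values: 1000 px focal length, lenses 1 cm apart, subject in focus at 0.5 m.
for disparity in (20.0, 5.0, 1.0):
    depth = depth_from_disparity(disparity, focal_length_px=1000.0, baseline_m=0.01)
    print(f"shift {disparity:>4} px -> depth {depth:.1f} m, "
          f"blur {blur_radius_px(depth, focus_depth_m=0.5):.1f} px")
```

Because the blur is computed rather than optical, changing `strength` or `focus_depth_m` after the shot is just a matter of re-running the numbers, which is what makes the adjustable effect possible.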

This is something HTC tried and failed to pull off with the One M8, but that was simply a case of poor execution. Google has also added DoF effects to its camera app by measuring depth using parallax, but it’s too slow and haphazard to be useful.

While we’ve yet to try Huawei’s approach, it’s another sound concept that simply needs quick and effective implementation, much like Apple did to make auto-HDR an integral part of smartphone photography. You can bet that Apple will include a similar feature if it launches a dual-lens camera.

Making smartphone photography more like professional photography

A good photographer can do a lot with a single smartphone camera, but even the best will find themselves limited in certain scenarios.

Adding a second camera, whether to augment the image quality of the first, to provide a different perspective, or to provide more depth-of-field options, means smartphone manufacturers can differentiate themselves.

Moreover, it means budding photographers have a bit more flexibility in how they create their photos. I’ll take that over an improved sensor any day.
