A “bat-sense” algorithm that generates images from sounds could be used to catch burglars and monitor patients without using CCTV, the technique’s inventors say.

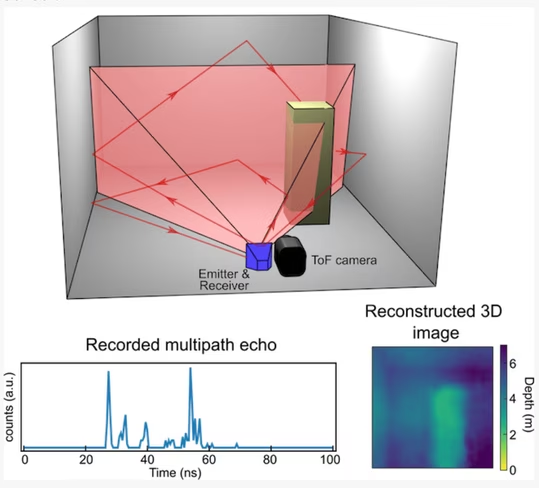

The machine-learning algorithm developed at Glasgow University uses reflected echoes to produce 3D pictures of the surrounding environment.

The researchers say smartphones and laptops running the algorithm could detect intruders and monitor care home patients.

Study lead author Dr Alex Turpin said two things set the tech apart from other systems:

“Firstly, it requires data from just a single input, the microphone or the antenna, to create three-dimensional images. Secondly, we believe that the algorithm we’ve developed could turn any device with either of those pieces of kit into an echolocation device.”

The system analyzes either sounds emitted by speakers or radio waves pulsed from small antennas. The algorithm measures how long these signals take to bounce around a room and return to the sensor.

It then analyzes the signal to calculate the shape, size, and layout of the room, and to pick out any objects or people present. Finally, the data is converted into 3D images that are displayed as a video feed.
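The timing measurement at the heart of this process rests on a simple relation: an echo travels to a reflecting surface and back, so the distance to that surface is half the round-trip time multiplied by the speed of the wave. The Python sketch below illustrates only that relation. It is not the researchers' machine-learning algorithm, which the article does not describe in detail, and the function name `echo_distance` and the constants are hypothetical, chosen for illustration.

```python
# Illustrative sketch only: the time-of-flight relation behind echolocation.
# This is NOT the Glasgow team's algorithm; all names here are hypothetical.

SPEED_OF_SOUND_M_S = 343.0           # approximate, dry air at about 20 °C
SPEED_OF_LIGHT_M_S = 299_792_458.0   # radio waves (vacuum value)


def echo_distance(round_trip_s: float, wave_speed_m_s: float) -> float:
    """Return the one-way distance to a reflector, in metres."""
    if round_trip_s < 0:
        raise ValueError("round-trip time cannot be negative")
    # The signal travels out and back, so halve the total path length.
    return wave_speed_m_s * round_trip_s / 2.0


if __name__ == "__main__":
    # A sound echo that returns 20 milliseconds after it was emitted.
    wall_m = echo_distance(0.020, SPEED_OF_SOUND_M_S)
    print(f"Sound echo after 20 ms: reflector about {wall_m:.2f} m away")

    # A radio echo that returns 20 nanoseconds after it was pulsed.
    target_m = echo_distance(20e-9, SPEED_OF_LIGHT_M_S)
    print(f"Radio echo after 20 ns: reflector about {target_m:.2f} m away")
```

A single delay like this locates only one reflecting surface along one path. Turning the full tangle of echoes in a room into a 3D image is the part the researchers hand to machine learning.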

The system works much as bats do when they use echolocation to navigate and hunt. The mammals send out sound waves that bounce back when they hit an object. The bats then interpret the echoes to determine the object’s location, size, and the direction (if any) in which it’s moving.

The researchers believe their algorithmic recreation of this natural ability could greatly reduce the cost of 3D imaging.

You can read the research paper in the journal Physical Review Letters.
