Google provided all the context we’d need for the rest of the week with an almost overlooked announcement on Tuesday: it was renaming its “Google Research” division “Google AI.”

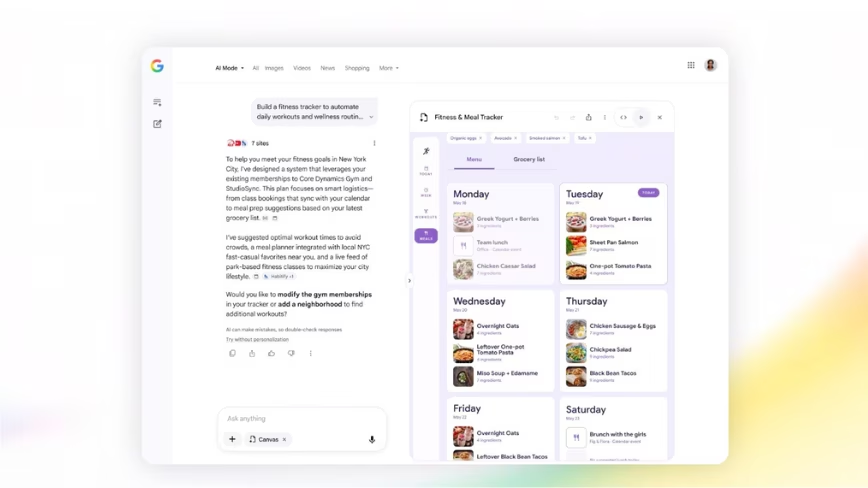

The rest of the week came fast. Starting on Tuesday, at the keynote, journalists found themselves neck-deep in news touting all the amazing ways AI would make our lives better, and soon(ish). If Google is to be believed (and perhaps it shouldn’t be), its updates to Photos, Google Assistant, Maps, News, and numerous other products would leave little doubt as to who, among giant tech companies, is leading the AI charge.

It all started on day one with the big reveal of several improvements to its already excellent Assistant.

First, it’ll get six new voices. One of those belongs to Grammy-winning songbird John Legend. It’ll use these voices to remind you to say “please” before you bark commands at Google Home devices. If you forget, you’ll get a gentle reminder that you’re a jerk. For those who remember, Google Assistant will tell you what a good boy, or girl, you are.

If these features are icing, the cake is Google Duplex, an AI capable of calling real people to set up appointments, make reservations, or handle all of the other tasks you’d rather not pick up the phone for. And it sounds like a real person.

I’m highly skeptical this feature is as far along as Google made it out to be, but if it is indeed coming “later this year,” then color me excited. It was perhaps the most jaw-dropping moment of the entire keynote.

Here’s Duplex in action, scheduling a hair appointment and making a reservation at a restaurant.

Impressive, right?

Not quite as impressive, but undoubtedly convenient for anyone who finds themselves shouting at mobile devices regularly, is the continued conversation feature in Google Assistant. With it, you won’t have to repeat “Hey Google” each time you ask a question, so long as it’s part of the conversation you started with the first command. For example, I can say: “Hey Google: Who is the President of France?” And then ask “And how tall is he?” after it answers, all without repeating the trigger words.

Also, there’s Multiple Actions, which allows you to ask two questions at once: “Hey Google: Who is the President of France and how tall is he?”

Suggested Actions in Google Photos were another bar-raising moment.

The company introduced a handful of actions that, at the touch of a button, can brighten, colorize, or add styling options with minimal human interaction. Colorizing old black and white (or sepia) images, stripping color from the background (while retaining it in the foreground), or converting photos to PDF documents are just a few of the insanely useful ways Photos’ AI could soon change your life, or at least the way you allow others to view it.

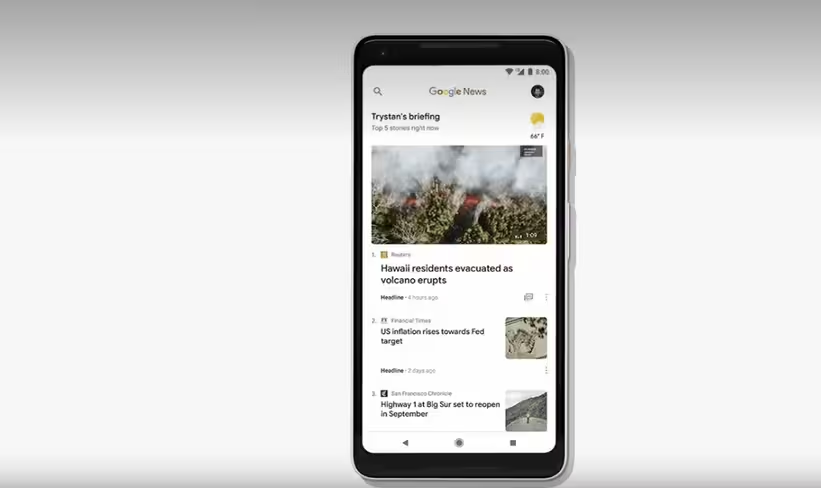

Then there was Google News, followed by Maps. Both were interesting, although lacking the awe-inducing moments of other announcements.

In News, Google aims to provide better suggestions that’ll get you consuming more content. Once you have better stuff to read, the next goal is to erase any pain points preventing you from subscribing or, gasp, even paying for stories from your favorite publishers.

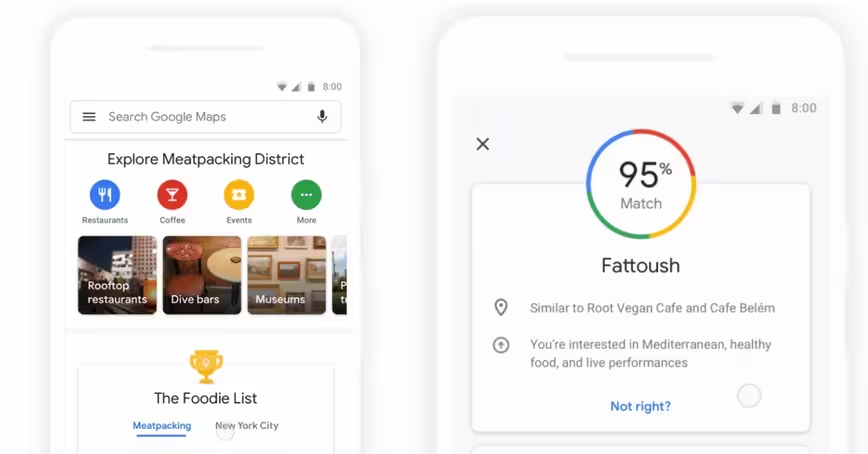

Maps was a little more of a mellow announcement, but one that solves a simple (and silly) problem.

Google tells walkers to begin heading south, and then semi-expects you to know which way that is. By pairing the camera with GPS and a new VPS (visual positioning system), Google’s trying to end the song and dance that begins and ends with walkers turning in circles until the cone extending from their blue dot on the map is oriented in the right direction.

It’ll also add some on-screen augmented reality effects to point you in the right direction, or a friendly digital creature (a dog, in this case) to lead the way. It’s nice having a travel partner.

On the AI front, we’re getting better suggestions that add an algorithmic score to places you search for (or places near you) based on how likely you are to enjoy them.

Tying it all together was the highly anticipated launch of Android P, at least in beta form. Android P is perhaps the most ambitious version of Google’s mobile operating system in years. It’s early in the release cycle, but so far we’ve been promised:

- AI that learns how to brighten (and dim) your screen based on usage

- Shush and Wind Down, two features that help you kick that mobile phone addiction by analyzing your usage patterns, encouraging you to turn your phone off at bedtime, and offering a simple swipe gesture to activate Do Not Disturb mode.

- Slices and Actions, tools that predict what you might want based on previous actions. Slices are snippets that show in-app content, from Lyft for example, without opening the app. Actions link an app to content in other parts of the OS based on previous usage.

- A handful of really cool developer tools, like Jetpack and Android App Bundles. With App Bundles, developers upload everything to Google, but users download only what they need, saving time on the download and space on their phone.

It’s available now, although it’s an early beta and we’d advise against installing it on your primary Android device. If you have a backup phone gathering dust, have at it.

Gmail even got a bit more AI shoved under the hood. Google announced a predictive typing feature called Smart Compose that attempts to match what you’ve typed with words that could finish the sentence or phrase. It’s essentially the same tech that powers predictive text in your favorite messaging applications.

At this point you might be assuming that Google I/O was all about AI. And while you wouldn’t be wrong, we did get a handful of announcements that weren’t related to robots.

There was Google’s new Tour Creator, which makes VR content creation a breeze. There’s a Material Design update that adds Theming. Or maybe you’d like to use your Google Home Max as a sound bar, which you can now that Google has reduced its line-in latency by 93 percent.

But as action-packed as these past few days have been, there’s one thing that’s running through our minds more than anything Google announced… we’re ready for a nap and a long weekend.
