Artificial intelligence and machine learning have never been more prominent in the public forum. CBS’s 60 Minutes featured earlier this year a segment promising myriad benefits to humanity in fields ranging from medicine to manufacturing. World chess champion Garry Kasparov recently debuted a book on his historic chess game with IBM’s Deep Blue. Industry luminaries continue to opine about the potential threat by AI to human jobs and even humanity itself.

Much of the conversation focuses on machines replacing humans. But the fact is the future doesn’t have to see humans eclipsed by machines. In my field of cybersecurity, as long as there are human adversaries behind cybercrime and cyber warfare, there will always be a critical need for human beings teamed with technology.

Intellectual honesty required

During last Christmas Break, I wanted explore the field of machine learning by creating some simple models that would examine some of its strengths and weaknesses — but also demonstrate some of the issues related to sampling and over-fitting. Given that we were two months away from the Super Bowl, I built a set of models that would attempt to predict the winner.

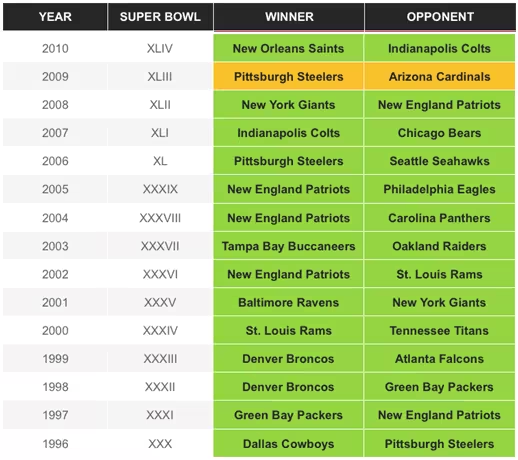

One model was trained on 14 years of team data from 1996 to 2010. I used input training features such as regular season results, offensive strength and defensive strength. The model was amazingly effective at predicting the winners for those years, picking all but one of the games correctly. The one miss was the prediction that both the Pittsburgh Steelers and Arizona Cardinals would win in 2009: But why am I writing this then, instead of flying to Vegas to place huge wagers on games? Well, let’s start by checking how the model worked on six more recent games. Below we show the true results and color grading for the model’s accuracy:

But why am I writing this then, instead of flying to Vegas to place huge wagers on games? Well, let’s start by checking how the model worked on six more recent games. Below we show the true results and color grading for the model’s accuracy: The effectiveness of this model no longer appears too impressive — in fact, it’s no more effective than flipping a coin! What is it about this model that made it work so well on games from 1996 to 2010, but fall apart in more recent years?

The effectiveness of this model no longer appears too impressive — in fact, it’s no more effective than flipping a coin! What is it about this model that made it work so well on games from 1996 to 2010, but fall apart in more recent years?

The answer is there are two aspects of the way the model was built and the experiment was run that caused this behavior. The model was “over-trained”, meaning it learned the “noise” about the games that it was trained on. We also see how different the results can be for testing the model on data it was trained on versus data it was not trained on (what we call testing in-sample data versus out-of-sample data respectively).

A key point to this demonstration is that a very bad model can be presented to have amazing results. In this case, the model generally doesn’t “know” what you are asking it, it doesn’t understand the concept of “winning the Super Bowl,” but it can make classification decisions based on a complex set of inputs and their relationship to each other. This is important to understand as we apply machine learning to cybersecurity.

In cybersecurity, models generally don’t understand the concept of “a cyber-attack” or “malicious content,” but they can do a remarkable job of fighting it by being trained on the massive quantities of data we have related to those issues. For example, we can look at structural elements of all malware seen over the last 20 years to build effective models for identifying new malware similar in structure or built using similar techniques.

The issue with “is it similar to the known” is that it can lead to both false positives and false negatives. For example, a new form of malicious content developed from scratch will be difficult to detect, as well as benign samples that have the characteristics of malicious content. For example, a benign executable (such as calc.exe) can be packed using a packer known to be used by cybercriminals to compress and obfuscate malware. Many existing detection models will recognize the packer’s work and falsely flag the executable as malicious.

The human advantage

Human-machine teaming is nothing new. Over the last thirty to forty years we have used machine learning in hurricane forecasting. In the last 25 years, we’ve been able to improve the accuracy of our hurricane forecasting from within 350 miles to 100 miles of contact.

Nate Silver’s best seller The Signal and the Noise (2012) notes an interesting trend suggesting that while our weather forecasting models have improved, combining this technology with human knowledge of how weather systems work has improved forecast accuracy by 25 percent. Such human-machine teaming saves thousands of lives.

The key is recognizing that humans are good at doing certain things and machines are good at doing certain things. The best outcome is recognizing the strengths of each and combining them. Machines are good at processing massive quantities of data and performing operations that inherently require scale. Humans have intellect, so they can understand the theory about how an attack might play out even if it has never been seen before.

Cybersecurity is also very different from other fields that utilize big data, analytics, and machine learning, because there is an adversary trying to reverse engineer your models and evade your capabilities. We have seen this time and time again in our industry.

Technologies such as spam filters, virus scans and sandboxing are still part of protection platforms, but their industry buzz has cooled since criminals began working to evade their technology. Thunderstorms are not trying to evade the latest in machine learning detection technologies — but cyber criminals are.

A major area we see playing out with human-machine teaming is attack reconstruction. Essentially having technology assess what has happened inside your environment then having a human work on a scenario.

Efforts to orchestrate security incident responses can benefit tremendously when a complex set of actions is required to remediate a cyber incident, and some of those actions are going to have very severe consequences. Having a human in the loop not only helps guide the orchestration steps, but also assesses whether the required actions are appropriate for the level of risk involved.

Whether it’s threat intelligence analysis, attack reconstruction, or orchestration — human-machine teaming takes the machine assessment of new intelligence and layers upon it the human intellect that only a human can bring.

Doing so can take us to a very new level of outcomes in all aspects of cybersecurity. And, now more than ever, better outcomes are everything in cybersecurity.

Get the TNW newsletter

Get the most important tech news in your inbox each week.