Ahead of the launch of iOS 11 this fall, Apple has published a research paper detailing its methods for improving Siri to make the voice assistant sound more natural, with the help of machine learning.

Beyond capturing several hours of high-quality audio that can be sliced and diced to create voice responses, developers face the challenge of getting the prosody – the patterns of stress and intonation in spoken language – just right. That’s compounded by the fact that speech synthesis can heavily tax a processor, so straightforward methods of stringing sounds together would be too much for a phone to handle.

That’s where machine learning comes in. With enough training data, it can help a text-to-speech system understand how to select segments of audio that pair well together to create natural-sounding responses.
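
To make the idea of picking segments that "pair well together" a little more concrete, here is a minimal sketch of how a selection step of this kind could work. It is not Apple's implementation: the `Unit` fields, the two cost functions and the weights are all hypothetical, and the hand-written costs stand in for whatever a trained model would actually predict. Each candidate segment is scored on how well it fits the desired sound (target cost) and how smoothly it follows the previous segment (join cost), and a Viterbi-style search finds the cheapest sequence overall.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Unit:
    """A recorded audio segment (hypothetical fields, for illustration only)."""
    name: str
    pitch: float     # average pitch in Hz
    duration: float  # length in seconds


def target_cost(unit, want_pitch, want_duration):
    """How far a segment is from the pitch and duration we want (assumed weights)."""
    return abs(unit.pitch - want_pitch) / 100.0 + abs(unit.duration - want_duration)


def join_cost(left, right):
    """How audible the seam between two adjacent segments would be (assumed measure)."""
    return abs(left.pitch - right.pitch) / 100.0


def select_units(candidates, targets):
    """Pick one unit per slot so that total target + join cost is minimal.

    candidates[i] is the list of Units available for slot i;
    targets[i] is the (pitch, duration) wanted for slot i.
    """
    # best[i][j] = (cheapest total cost ending in candidates[i][j], index of previous unit)
    best = [[(target_cost(u, *targets[0]), None) for u in candidates[0]]]
    for i in range(1, len(candidates)):
        row = []
        for u in candidates[i]:
            cost, prev = min(
                (best[i - 1][k][0] + join_cost(p, u), k)
                for k, p in enumerate(candidates[i - 1])
            )
            row.append((cost + target_cost(u, *targets[i]), prev))
        best.append(row)

    # Walk back from the cheapest final unit to recover the full sequence.
    j = min(range(len(best[-1])), key=lambda k: best[-1][k][0])
    path = []
    for i in range(len(candidates) - 1, -1, -1):
        path.append(candidates[i][j])
        j = best[i][j][1]
    path.reverse()
    return path


if __name__ == "__main__":
    slots = [
        [Unit("a1", 200.0, 0.1), Unit("a2", 120.0, 0.1)],
        [Unit("b1", 205.0, 0.2), Unit("b2", 125.0, 0.2)],
    ]
    wanted = [(200.0, 0.1), (200.0, 0.2)]
    print([u.name for u in select_units(slots, wanted)])
```

In a learned system, the two cost functions would be replaced by a model's predictions; the search that stitches the segments together can stay the same.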

For iOS 11, the engineers at Apple worked with a new female voice actor to record 20 hours of speech in US English and generate between 1 and 2 million audio segments, which were then used to train a deep learning system. The team noted in its paper that test subjects greatly preferred the new version over the old one found in iOS 9 from back in 2015.

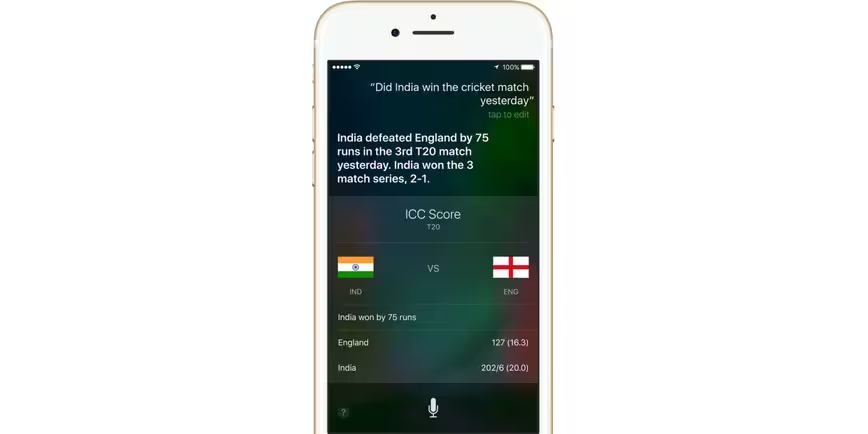

The results speak for themselves (ba dum tiss): Siri’s navigation instructions, responses to trivia questions and ‘request completed’ notifications sound a lot less robotic than they did two years ago. You can hear them for yourself at the end of this paper from Apple.

That’s another neat treat to look forward to in iOS 11. If you’re keen to check out all the cool features that are coming soon, you can install the beta and try them out right away.
