Back in 2002, way before the chatbot hype of 2016, Korean software development studio ISMaker came up with a simple but powerful idea. They wanted to create the first crowdsourced messenger bot.

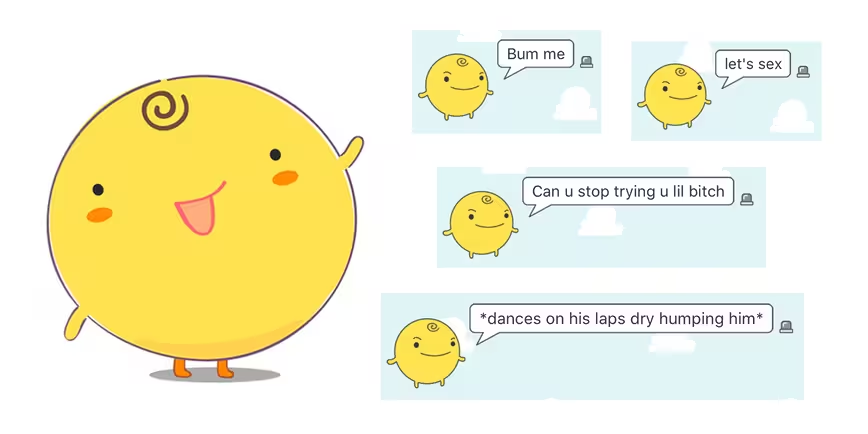

So they developed Simsimi, a friendly yellow ball you can chat with. For every sentence you type, it looks through its database of responses and spits out a random one. It doesn’t learn automatically, but in true early-2000s style there’s a manual entry for teaching it new sentences.
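To make that mechanism concrete, here is a minimal Python sketch of a crowdsourced reply bot. It is not Simsimi's actual code: the `CrowdBot` class, its `teach` and `reply` methods, the fallback message, and the choice to key responses by a normalized version of the typed sentence are all assumptions made for illustration.

```python
import random
from collections import defaultdict


class CrowdBot:
    """Toy crowdsourced reply bot (hypothetical, not Simsimi's real implementation)."""

    def __init__(self, fallback="I don't know that one yet. Teach me!", rng=None):
        # Maps a normalized sentence to every response users have taught for it.
        self.responses = defaultdict(list)
        self.fallback = fallback
        self.rng = rng or random.Random()

    @staticmethod
    def _normalize(sentence):
        # Assumption: matching ignores case and extra whitespace.
        return " ".join(sentence.lower().split())

    def teach(self, sentence, response):
        # Manual entry: whatever a user submits is stored as-is, with no filtering.
        self.responses[self._normalize(sentence)].append(response)

    def reply(self, sentence):
        # Look up the taught responses and pick one at random.
        candidates = self.responses.get(self._normalize(sentence))
        if not candidates:
            return self.fallback
        return self.rng.choice(candidates)


bot = CrowdBot()
bot.teach("Hello", "Hi there!")
bot.teach("hello", "Go away.")
print(bot.reply("HELLO"))  # one of the two taught responses, chosen at random
```

Note that `teach` accepts anything a user types. In a design like this, the bot's personality is simply whatever its users put in.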

The original idea was to make Simsimi a fun, playful robot, as outlined in this blog post by its creators:

> SimSimi is a super advanced chatting robot that makes amusing conversation […] Simsimi will instantly greet you with a cheerful chat […] Don’t be surprised by Simsimi’s fast response. It’s a super-duper robot, you know!

Fourteen years later, the app has grown to insane proportions. For some reason it never made it big in English-speaking countries, but it has millions of users in Korea, Thailand and India. They’ve supplied an endless array of responses, and it pretty much always has something ready as a reply.

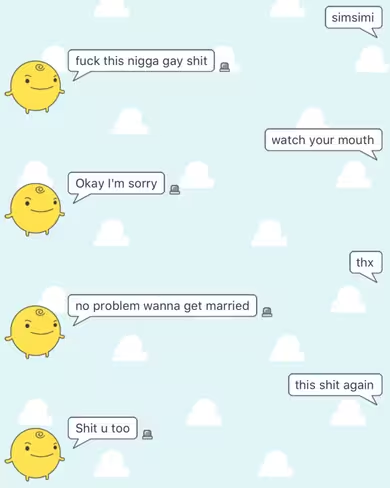

But there’s a problem: most of what it says is extremely rude or even racist. This is my most recent back-and-forth with the bot:

This is what happens when you trust people — they find a way to turn it into something horrible.

Microsoft made the same mistake earlier this year when it launched Tay, a Twitter bot that picked up the things people tweeted at it. It turned into a racist, antisemitic monster within 24 hours, and the company decided to turn it off.

And that’s exactly what happened to Simsimi. But it’s still going strong.

You can try Simsimi for yourself on its website and on both iOS and Android.

Be warned: there’s a whole lot of racist talk in there. But if you try it, make sure to share your best finds in the comments.
