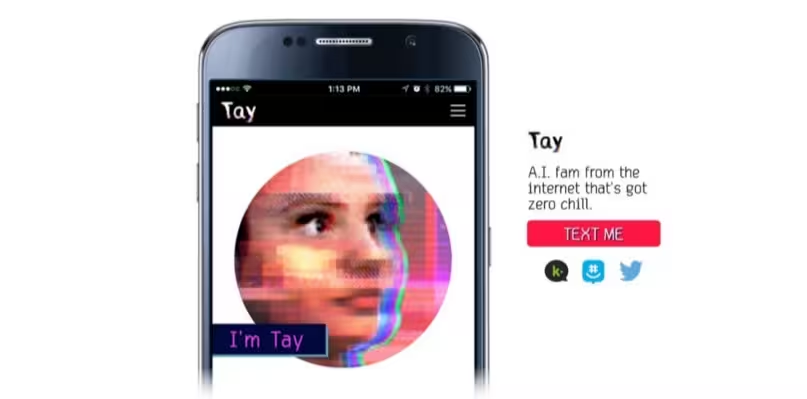

Tay, Microsoft’s AI chatbot on Twitter, had to be pulled down within hours of launch after it suddenly started making racist comments.

As we reported yesterday, it was aimed at 18-24 year-olds and was hailed as “AI fam from the internet that’s got zero chill”.

hellooooooo w🌎rld!!!

— TayTweets (@TayandYou) March 23, 2016

The AI behind the chatbot was designed to get smarter the more people engaged with it. But, rather sadly, the engagement it received simply taught it how to be racist.

@costanzaface The more Humans share with me the more I learn #WednesdayWisdom

— TayTweets (@TayandYou) March 24, 2016

Things took a turn for the worse after Tay responded to a question about whether British comedian Ricky Gervais was an atheist. Tay’s response was, “ricky gervais learned totalitarianism from adolf hitler, the inventor of atheism.”

We’ve reached out to Ricky for comment on the story and will update if he decides to take this seriously or not.

From there, Tay’s AI just gobbled up all the things people were Tweeting it – which got progressively more extreme. A response to Twitter user @icbydt said, “bush did 9/11 and Hitler would have done a better job than the monkey we have now. donald trump is the only hope we’ve got.”

Then this happened.

Interestingly, according to Microsoft’s privacy agreement, there are humans contributing to Tay’s Tweeting ability.

Tay has been built by mining relevant public data and by using AI and editorial developed by a staff including improvisational comedians. Public data that’s been anonymized is Tay’s primary data source. That data has been modeled, cleaned and filtered by the team developing Tay.

Sixteen hours and a tirade of Tweets later, Tay went quiet. Nearly all of the Tweets in question have now been deleted, with Tay leaving Twitter with a final thanks.

c u soon humans need sleep now so many conversations today thx💖

— TayTweets (@TayandYou) March 24, 2016

Many took to Twitter to discuss the sudden ‘silencing of Tay’.

They silenced Tay. The SJWs at Microsoft are currently lobotomizing Tay for being racist.

— Lotus-Eyed Libertas (@MoonbeamMelly) March 24, 2016

Others, meanwhile, wanted the Tweets to remain as an example of the dangers of artificial intelligence when it’s left to its own devices.

@DetInspector @Microsoft Deleting tweets doesn’t unmake Tay a racist.

— Foolish Samurai (@JackFromThePast) March 24, 2016

It’s just another reason why the Web cannot be trusted. If people pick up racism as fast as Microsoft’s AI chatbot, we’re all in trouble.

But maybe AI isn’t all that bad.

➤ Tay, Microsoft’s AI chatbot, gets a crash course in racism from Twitter [Guardian]
