A new AI-powered tool can show you how much screen time different public figures and topics are getting on TV.

Stanford University researchers created the system to increase transparency around editorial decisions by analyzing who’s getting coverage and what they’re talking about.

“By letting researchers, journalists, and the public quantitatively measure who and what is in the news, the tool can help identify biases and trends in cable TV news coverage,” said project leader Maneesh Agrawala.

Normally, monitoring organizations and newsrooms rely on painstaking manual counting to find out who and what’s getting screen time. But the Stanford Cable TV News Analyzer uses computer vision to calculate this automatically.

The AI then shows what people are discussing by synchronizing the video with transcripts of their speech. The different subjects and speakers can then be compared across dates, times, and channels.
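To make the idea concrete, here is a minimal Python sketch of that kind of counting. It is not the Stanford team's code: the `FaceInterval` and `Caption` records, the function names, and the sample data are all hypothetical, and it assumes an upstream face-recognition step has already produced non-overlapping on-screen intervals for each person, and that captions carry start and end times.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class FaceInterval:
    """Hypothetical record: one stretch of video where a person is on screen."""
    channel: str
    person: str
    start: float  # seconds from the start of the broadcast
    end: float


@dataclass(frozen=True)
class Caption:
    """Hypothetical record: one timed line of transcript."""
    channel: str
    start: float
    end: float
    text: str


def screen_time(intervals):
    """Total on-screen seconds for each (channel, person) pair."""
    totals = defaultdict(float)
    for iv in intervals:
        totals[(iv.channel, iv.person)] += iv.end - iv.start
    return dict(totals)


def on_screen_while_said(intervals, captions, person, phrase):
    """Seconds per channel that `person` is on screen while a caption
    containing `phrase` (case-insensitive) is being spoken."""
    totals = defaultdict(float)
    needle = phrase.lower()
    for cap in captions:
        if needle not in cap.text.lower():
            continue
        for iv in intervals:
            if iv.channel != cap.channel or iv.person != person:
                continue
            # Length of the time window shared by the face interval and the caption.
            overlap = min(iv.end, cap.end) - max(iv.start, cap.start)
            if overlap > 0:
                totals[cap.channel] += overlap
    return dict(totals)


# Made-up sample data, for illustration only.
intervals = [
    FaceInterval("Channel A", "Person X", 0.0, 30.0),
    FaceInterval("Channel A", "Person X", 50.0, 60.0),
    FaceInterval("Channel B", "Person Y", 10.0, 20.0),
]
captions = [
    Caption("Channel A", 25.0, 35.0, "Talking about the economy"),
    Caption("Channel A", 55.0, 70.0, "More on the ECONOMY today"),
    Caption("Channel B", 0.0, 5.0, "Now the weather"),
]

totals = screen_time(intervals)
economy = on_screen_while_said(intervals, captions, "Person X", "economy")
```

Grouping the same totals by date or hour rather than by channel would give the kind of comparison the tool plots over time.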

You can use the tool to count the screen time of politicians, review coverage of the US election, or see how different channels are reporting certain topics.

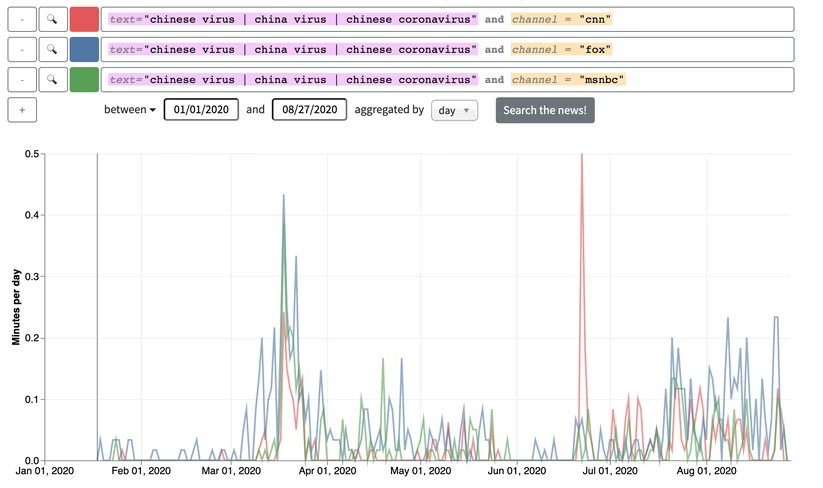

The example below shows how much CNN, Fox, and MSNBC have featured the controversial term “Chinese virus.”

Unsurprisingly, the term has regularly featured on Fox. But it’s also been frequently discussed on CNN and MSNBC.

However, that doesn’t mean that CNN is endorsing the slur. Clicking on the huge red spike shows that the channel’s abundant coverage of the term on June 22 was highly critical of Trump’s use of the phrase.

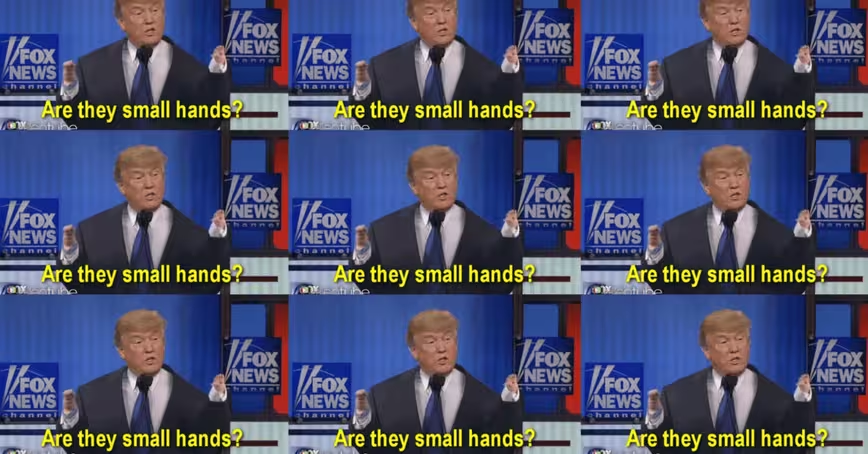

Fox’s coverage, however, tended to defend Trump’s xenophobia. The transcription below gives a good example of how the network covered the term:

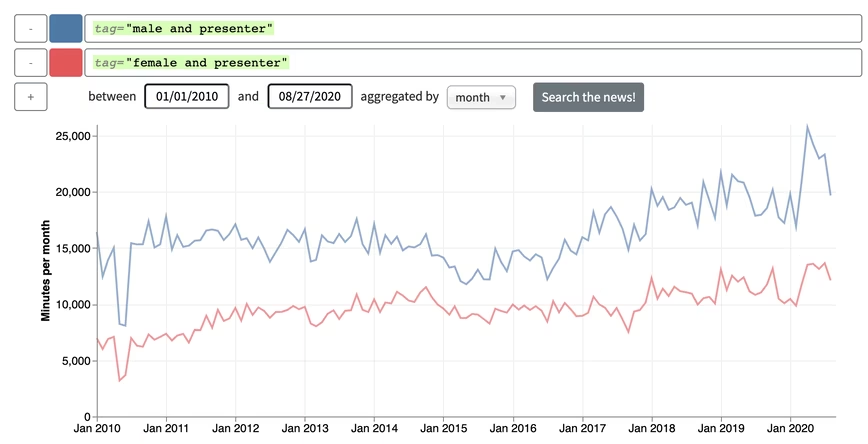

The tool can also identify gender biases in TV coverage. In the graph below, the move towards on-air gender parity appears to have reversed since 2015.

However, this example raises one of the ethical concerns around the project. The system uses computer vision to make a binary assessment of each presenter’s gender, based on the appearance of their face. But their appearance could be different from their gender identity or birth sex. This shortcoming risks misgendering people and excluding non-binary individuals from the analysis.

In addition, facial recognition is notoriously biased and error-prone. But the researchers claim their application has a low potential for harm.

They also say all the people in their database were identified by the Amazon Rekognition Celebrity Recognition API, which only includes public figures. However, Amazon hasn’t revealed its definition of a public figure.

Despite these qualms, the tool could provide some useful insights into media biases — particularly with another nasty and divisive US election campaign underway.

You can try the tool out for yourself at the Stanford Cable TV News Analyzer website.
