Today we launched Neural, our new home for human-centric AI news and analysis. While we’re celebrating the culmination of years of hard work from our behind-the-scenes staff, I’m taking the day to quietly contemplate the future of AI journalism.

The Guardian’s Oscar Schwartz wrote an article in 2018 titled “The discourse is unhinged: how the media gets AI alarmingly wrong.” In it, he discusses the 2017 hype explosion surrounding Facebook’s AI research lab developing a pair of chat bots that created a shorthand language for negotiating.

In reality, the chat bots’ behavior was remarkable but not entirely unexpected. Unfortunately, the media at large covered the interesting event as though SKYNET from the “Terminator” movies had been born. Dozens of headlines popped up declaring that Facebook’s AI had “created its own language that humans can’t understand in order to communicate with itself” and other nonsense. Dozens of others added that Facebook’s engineers were terrified and “pulled the plug” after realizing what they’d done.

Sadly, this level of hyperbole isn’t the exception. While it isn’t always as extreme as it was in the case of Facebook’s chat bots, you never have to look very far to find outlandish claims when it comes to AI reporting.

Schwartz’s article ultimately ends on a somber note, with emerging technologies expert Joanne McNeil essentially postulating that journalists would write better articles if they made more money. Aside from paying us more – which seems like a perfectly reasonable strategy to me – there might be other ways we can raise the overall quality of AI articles by taking a clear stance against hype and misinformation.

Gary Marcus, CEO of Robust.AI and co-author of the book “Rebooting AI: Building Artificial Intelligence We Can Trust,” has gone to great pains to offer his expertise to the journalistic community. In a recent article on The Gradient he reprinted his recommendations for AI writers, beginning with:

Stripping away the rhetoric, what does the AI system actually do? Does a “reading system” really read?

Figuring out exactly what an AI does can be hard, even for the most seasoned journalist. But it can be hard for researchers and scientists as well. I called out the “gaydar AI” research published by a Stanford researcher in 2018 because it claimed to do something no human can do (predict another human’s sexual orientation based on their looks).

While it’s true that there’s a resource gap between AI journalists and the researchers and developers we cover, there’s typically an education gap too. It’s not easy for a writer-by-trade to pick apart research conducted by PhD-level scholars. Often this is mitigated by seeking other experts and contrasting their opinions, but sometimes that’s not an option.

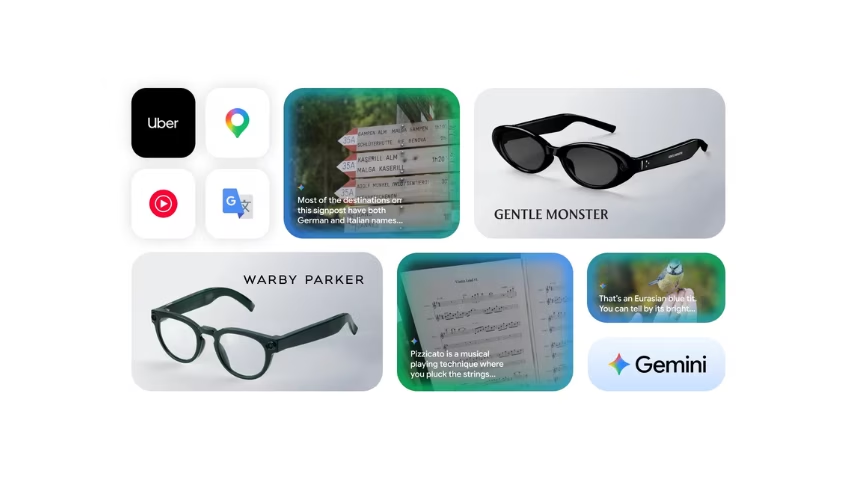

Worse, at the other end of the spectrum, journalists are beset by the sparkly, high-end PR campaigns conducted by the likes of Google and Facebook. These slick presentations come with pre-formatted commentary from famous AI figures, related links, excerpts from research papers, and plain-language explanations of why their product will “change everything” and be the best thing ever.

It doesn’t take journalistic malice or a lack of integrity to publish a hyperbolic story, just a lack of perspective. It’s hard to argue against Google’s marketing team, for example, when you’re sitting at I/O watching the company unveil the Google Assistant feature that calls real businesses and makes appointments for you. At the time it seemed like the future had arrived. Here we are two years later and that future looks further away than ever. It’s been the same with driverless cars.

Experts can point to the inevitable failure of the most hyperbolic claims to manifest as proof that journalists are messing up, but it takes more than just due diligence and rigor to avoid the toughest pitfalls. Many of us aren’t trained developers or researchers; we’re technology journalists who cover AI. And, let’s face it, even the smartest AI experts don’t always agree.

No, it’s not just about wading through the research papers to find the truth and being cynical any time a PR rep offers an embargo. Both of those are key ingredients, but it’s also about realizing we’re writing for a smarter audience that’s no longer impressed by the mere existence of AI.

A few years ago we could get away with a shocking headline and lead followed by marketing rhetoric and basic AI terminology explanations for three paragraphs. Now, the average reader knows what a neural network is because they’ve got one inside their phone. Most of TNW’s readers have probably chatted with an AI, played with Transformer, or used an AI to test how British or American their accent is.

The point is: I believe a large portion of tech news readers are ready for smarter, more reasonable, human-centric AI coverage. And the journalists who give it to them now are the ones who’ll still be covering AI when the rest catch up.

Visit the Neural homepage for the latest artificial intelligence news, analysis, and opinion.
