Last year a couple of Stanford researchers created an algorithm to determine whether Caucasians are gay or not with better accuracy than humans. The algorithm, trained with human bias, is better at determining whether or not other humans will think a face is gay. Unfortunately, the researchers don’t present their work that way.

The Journal of Personality and Social Psychology recently published the final version of the white paper that details the work, essentially giving it full credence as a peer-reviewed piece of science.

That makes sense; there’s nothing wrong with the paper and all the science (that can actually be reviewed) obviously checks out. The problem is this: humans cannot predict sexuality by looking at a picture. Whatever measure of accuracy our species can achieve is attributable to luck and intuition, neither of which can be quantified. So who gives a damn if a computer is better at making random guesses than a person is?

The first major problem with the paper is its title: “Deep Neural Networks Can Detect Sexual Orientation From Faces.”

Yet, the entire paper goes on to point out that the researchers designed an algorithm that imitates human bias. At no point is this AI predicting gayness: it predicts whether humans will think a face looks gay or not based on data from humans who tried to predict whether a human’s face was gay or not.

It’s basically using second-hand knowledge of people, from humans who got it right a little better than half the time, to determine what made those people think the faces they were looking at were gay or straight. It’s predicting patterns. It does not predict gayness.

According to the study:

Given a single facial image, a classifier could correctly distinguish between gay and heterosexual men in 81% of cases, and in 71% of cases for women. Human judges achieved much lower accuracy: 61% for men and 54% for women.

Not only is there absolutely no scientific basis for guessing whether a face is gay or not, but using images to do so presents a myriad of problems, not the least of which is the fact that the images were sourced from people who readily identified as either gay or heterosexual. This indicates the researchers believe human sexuality is binary.

And there’s a definite danger in giving those who would discriminate tools with which to do so that they can claim are backed by science.

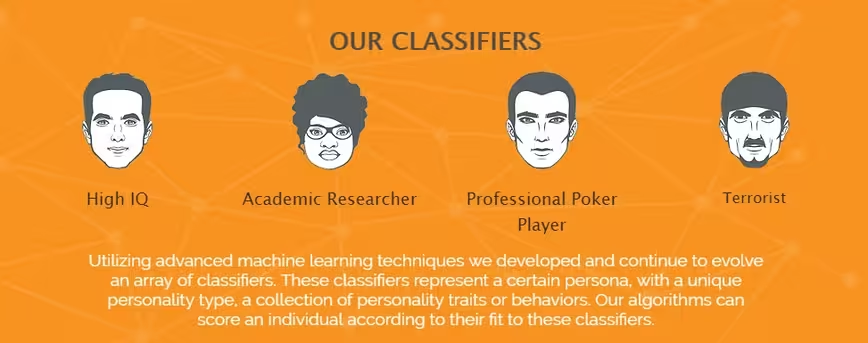

Companies like Faception are already selling AI products that supposedly use “advanced algorithms” to predict whether a person will commit a crime.

This is problematic for obvious reasons, as Princeton psychology professor Alexander Todorov told The Washington Post: “The evidence that there is accuracy in these judgments is extremely weak. Just when we thought that physiognomy ended 100 years ago. Oh, well.”

Simply put, as far as verifiable science goes, you can’t predict if someone is going to molest a child, have same-sex relationships, or commit an act of terrorism just by looking at their face. Claims from Faception CEO Shai Gilboa that “Our personality is determined by our DNA and reflected in our face. It’s a kind of signal,” are loose, at best.

Yet, somehow, according to The Washington Post, “Faception said it’s already signed a contract with a homeland security agency to help identify terrorists.” And as we’ve said before: that’s a terrible and racist idea.

What we’re seeing here is an extension of human bias. Creating an algorithm that has more psychic ability than humans is nothing more than automating ignorance.

Furthermore, the study itself points out that there’s not enough data on minorities for the researchers to use the algorithm on anything other than well-lit Caucasian faces, which makes it virtually useless for anything other than academic discourse.

It’s also worth mentioning that the entire hypothesis is built on one controversial idea called “the hormonal theory of sexuality,” which basically says homosexuality is decided in the womb. There’s no actual scientific consensus on whether this is true.

Still, this didn’t stop the Stanford team from building a hypothesis based on this theory. The white paper for the study says:

…the widely accepted prenatal hormone theory (PHT) of sexual orientation predicts the existence of links between facial appearance and sexual orientation. According to the PHT, same-gender sexual orientation stems from the underexposure of male fetuses or overexposure of female fetuses to androgens that are responsible for sexual differentiation.

To be fair, the white paper also states the researchers are following a lead that gay people are better at determining whether a face is gay or not because, “Recent evidence shows that gay men and lesbians who arguably have more experience and motivation to detect the sexual orientation of others, are marginally more accurate than heterosexuals.” This sounds like something that could be true, but the math only adds up if the following statement is true: if x = gay people then all gay people = x. Not all gay people are the same, and it’s offensive to conduct work based on a theory that being gay makes you better or worse at accurately completing a task.

Kosinski, according to The New York Times, actually thought about creating an algorithm to determine whether a person was an atheist or not first, but decided instead to go with the gay detector. It’s evident this wasn’t created out of the desire to solve a problem.

He claims the purpose of the study was simply to prove a point, but that doesn’t explain why the team chose to title it “Deep Neural Networks Can Detect Sexual Orientation From Faces,” which indicates AI can actually detect sexual orientation… from faces. This is simply not true.

To date there is no scientifically verifiable way to determine a person’s sexuality without asking them. And even then things get problematic. What makes a person gay or straight? Society at large seems to struggle with the concepts of bisexuality, transsexualism, and fluid sexuality. It’s unfathomable that any scientist would believe they’ve solved the “gay” puzzle with a crappy algorithm. And that’s not editorializing; Kosinski himself told Quartz:

This is the lamest algorithm you can use, trained on a small sample with small resolution with off-the-shelf tools that are actually not made for what we are asking them to do.

It seems reckless for science to be conducted in an ambiguous manner, based on supposedly altruistic motivations, with what appears to be deceptive terminology. Kosinski’s claims that this algorithm is supposed to point out the dangers of facial recognition AI conflict with the title of the paper and the assumptions it proposes.

There’s no such thing as gaydar, and it takes an immense amount of arrogance for anyone to claim they’ve unlocked the secrets of human sexuality with a couple of theories and a lame algorithm. This is nothing more than an AI that shows what human bias looks like when extrapolated.

Can it predict gayness? Absolutely not. It conflates the idea of what we think gay people look like with what gay people actually look like. Even at 100 percent accuracy it still wouldn’t actually be predicting what a gay face looks like because a computer doesn’t understand the concept of gay. It finds correlations between disparate data groups, because it has no other choice.

Not everyone identifies as gay or straight, but an even bigger problem is the fact that many people who have sex with people of the same gender consider themselves heterosexuals. Is being gay a state or an action? Are you gay because you want to have sex with someone who is the same gender as you, or because you do have sex with them?

This doesn’t even address the fact that, statistically, one in four men have homosexual thoughts. If a man is in love with another man, but doesn’t have sex with him, is he gay? If a man has casual sex with other men, but doesn’t love them or even find himself attracted to them outside of coitus, is he straight?

I can self-identify as a three-toed sloth, and you may even see my picture in my author’s profile and say “yep, he looks like the type.”

The problem with this paper is that it takes a small dataset that only contains self-reporting Caucasians and claims that an AI can “detect” homosexuality. The AI that “detects” cancer doesn’t “predict” what people will say is and isn’t cancer; it looks for the actual obvious signs of cancer with no regard for what 51 percent or 65 percent of non-experts think.

We are always guessing when we try to tell something about a person by the way their face looks, even if we’re correct. Computers don’t have a magic power that allows them to bypass the arguments against physiognomy, no matter how accurate their predictions are. Saying your AI detects homosexuality is arming bigots with science.

Seriously people, we’re better than this.
