Cyber-security researchers from Ben-Gurion University of the Negev recently discovered a computer attack that could allow hackers to remotely trick laboratory scientists into creating toxins and viruses.

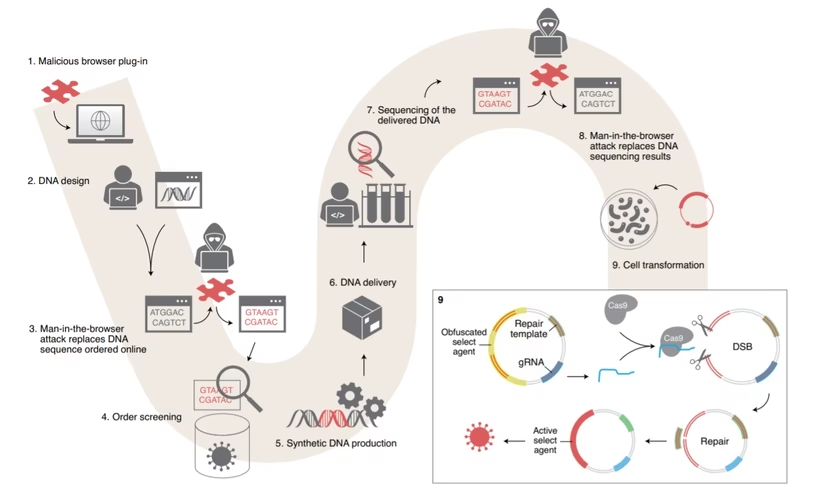

The setup: Medical professionals use synthetic DNA for a variety of reasons, including the development of immunogens for creating vaccines. The Ben-Gurion researchers developed and tested an end-to-end attack that changes data on a bioengineer’s computer in order to replace short DNA sub-strings with malicious code.

If terrorists wanted to spread a virus or toxin by hijacking a reputable lab or hiding it inside a vaccine or other medical treatment, they’d traditionally need physical access to the laboratory or part of its supply chain. According to this paper published last week in Nature Biotechnology, that’s no longer the case.

The researchers claim that a simple Trojan horse and a bit of hidden code could turn medicine into malice, and the engineers creating the tainted goods would be none the wiser:

> A cyberattack intervening with synthetic DNA orders could lead to the synthesis of nucleic acids encoding parts of pathogenic organisms or harmful proteins and toxin … This threat is real. We conducted a proof of concept: an obfuscated DNA encoding a toxic peptide was not detected by software implementing the screening guidelines. The respective order was moved to production.

The researchers describe a scenario wherein a bad actor uses a Trojan horse to infect a researcher’s computer. When that researcher goes to order synthetic DNA, the malware obfuscates the order so that it looks legit to the security software the DNA shop uses to check it. In reality, the obfuscated DNA sub-strings are harmful.

The DNA shop fills the order (unknowingly sending the researcher the dangerous DNA), and the researcher’s security software fails to uncover the obfuscated sub-strings, so the researcher remains clueless.

The researchers managed to use their technique to successfully bypass security for 16 out of the 50 orders they tried it on.

What this means: We’re in a dangerous in-between place where AI isn’t advanced enough yet to detect these kinds of adapted envelope attacks and humans simply can’t pay enough attention at scale.

DNA replication services synthesize DNA in numbers so great it would be impossible for humans to check each sequence. We rely on automation and AI to make sure everything is as it should be, but when anomalies show up the machines turn to humans to make the call. In this case, humans likely wouldn’t be able to see through the smokescreen either.

To address the issue, the researchers suggest a suite of cybersecurity measures they claim should be immediately implemented across the biotechnology community. You can read the paper here.
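The article doesn't list the specific measures, so the following is only an illustrative sketch of one generic safeguard: an integrity tag computed over the sequence the researcher intended to order, which a DNA shop could re-check on receipt. The function names (`sign_order`, `verify_order`), the demo key, and the sample sequence are all hypothetical and not taken from the paper.

```python
import hashlib
import hmac


def sign_order(sequence: str, key: bytes) -> str:
    """Return a hex HMAC-SHA256 tag over a normalized DNA sequence."""
    # Normalize so whitespace and letter case don't change the tag.
    normalized = "".join(sequence.split()).upper()
    return hmac.new(key, normalized.encode("ascii"), hashlib.sha256).hexdigest()


def verify_order(sequence: str, tag: str, key: bytes) -> bool:
    """Check that the received sequence still matches the tag."""
    # compare_digest avoids leaking information through timing.
    return hmac.compare_digest(sign_order(sequence, key), tag)


# Hypothetical demo values, for illustration only.
key = b"demo-key-not-for-real-use"
intended = "ATGGCCATTGTAATGGGCCGC"
tag = sign_order(intended, key)

# Simulate malware swapping a single base in the order.
tampered = intended[:5] + "T" + intended[6:]

print(verify_order(intended, tag, key))   # True
print(verify_order(tampered, tag, key))   # False
```

A check like this only helps if the tag is produced somewhere the malware can't reach, such as a separate device, because a Trojan on the researcher's computer could otherwise sign the altered order itself.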
