OpenAI has reached an agreement with the Pentagon to deploy its artificial intelligence models in classified military systems, just hours after President Donald Trump ordered federal agencies to stop using rival Anthropic’s technology.

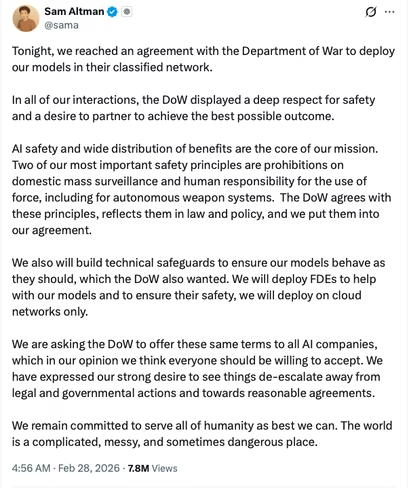

The announcement came late Friday from OpenAI CEO Sam Altman, who said the company had secured terms with the Department of Defense to use its models within the department’s classified network. The deal follows a sharp escalation between the Trump administration and Anthropic over how AI systems can be used in military contexts.

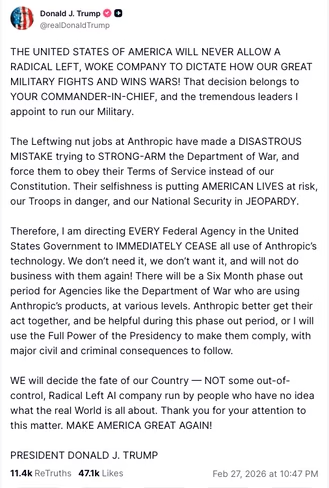

Earlier in the day, Trump directed every federal agency to “immediately cease” use of Anthropic’s products. Defense Secretary Pete Hegseth also designated the company a “supply chain risk to national security,” a classification typically used under federal procurement authorities to restrict certain technologies in defense contracts.

Similar supply chain restrictions in recent years have been applied to foreign telecom companies such as Huawei and ZTE under Section 889 of the 2019 National Defense Authorization Act. Those measures were implemented through federal procurement rules requiring contractor certification that prohibited technologies are not used in connection with government contracts. In Anthropic’s case, the designation requires the Pentagon to phase out use of the company’s systems and obligates military contractors to certify that their Defense Department work does not involve Anthropic’s AI tools. The administration has provided a six-month transition window.

The confrontation centers on whether AI companies can limit how the military uses their systems.

Anthropic had sought contractual assurances that its flagship model, Claude, would not be used for domestic mass surveillance of Americans or to power fully autonomous weapons. The Pentagon has said it does not intend to use AI in those ways but has insisted that models must remain available for all lawful purposes.

After weeks of negotiations, talks between Anthropic and the Defense Department collapsed. Officials accused the company of attempting to impose ideological restrictions on military operations. Anthropic maintained that its objections were narrow and focused on safety and constitutional rights.

Shortly after the administration’s move against Anthropic, Altman announced that OpenAI had finalized its own agreement with the Pentagon.

In a post on X, Altman said the deal reflects two of OpenAI’s “most important safety principles”: prohibitions on domestic mass surveillance and a requirement for human responsibility in the use of force, including autonomous weapon systems.

“The DoW agrees with these principles, reflects them in law and policy, and we put them into our agreement,” Altman wrote, referring to the department’s recent use of the “Department of War” branding in official posts.

It remains unclear how OpenAI’s agreement differs in substance from the safeguards Anthropic had sought. Pentagon officials have argued that existing U.S. law and Defense Department policy already prohibit domestic mass surveillance and fully autonomous weapons, and that no new legal standards were necessary.

The dispute with Anthropic became increasingly political in recent days.

In a Truth Social post, Trump criticized the company in harsh terms and framed its position as an attempt to override constitutional authority. Hegseth accused Anthropic of trying to assert control over operational military decisions and said the department must retain unrestricted access to AI models for lawful purposes.

Anthropic has pushed back, arguing that the supply chain risk designation exceeds the Defense Department’s statutory authority. The company said federal law limits such determinations to specific defense-related contracts and does not grant the executive branch broad power to block all commercial activity with a domestic company. Anthropic has said it intends to challenge the designation in court.

Supply chain risk determinations are more commonly associated with foreign-owned firms deemed national security threats. Applying the designation to a U.S.-based frontier AI developer marks a significant shift in how procurement authorities are being used in the context of artificial intelligence.

The episode underscores how rapidly AI has become embedded in national security policy.

OpenAI, Anthropic, Google, and Elon Musk’s xAI have secured Defense Department agreements or approvals for use of their AI models, including in classified environments. At the same time, concerns about surveillance, autonomous weapons, and the reliability of large language models have intensified scrutiny of military AI deployments.

Anthropic, which NPR reported is valued at roughly $380 billion and is preparing for a public offering, now faces legal and reputational uncertainty following the administration’s actions. The Pentagon contract at the center of the dispute is worth up to $200 million, a relatively small portion of the company’s reported revenue but a symbolically significant one.

For OpenAI, the agreement positions the company as a key partner in the Defense Department’s AI strategy while maintaining its publicly stated safety principles.

Whether the contrasting outcomes reflect substantive differences in contract terms or divergent negotiation strategies remains unclear. What is clear is that the relationship between AI developers and the U.S. military has entered a more visible and politically charged phase.
