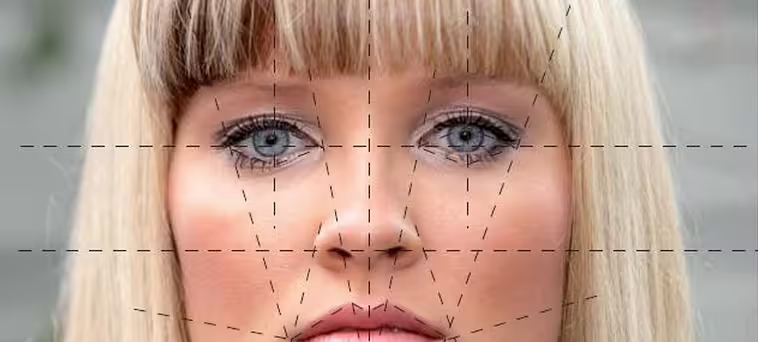

Computer graphics are steadily becoming more realistic in both movies and video games, but Disney’s Brave and Rockstar Games’ L.A. Noire will one day look like amateur work. Microsoft researchers have taken 3D modeling a step further with a new technique that leverages both motion capture and 3D scanning. It creates high-fidelity 3D images of the human face that depict not only large-scale features and expressions, but also the accompanying movement of human skin (such as wrinkling).

The researchers start by recording 3D facial performances made by an actor using a marker-based motion capture system (100 reflective dots are applied to the actor’s face). The recorded data then undergoes facial analysis to determine the minimal set of face scans needed to reconstruct the actor’s face accurately. The scientists then use a laser scanner to capture those high-fidelity facial scans. Finally, they combine the motion capture data with the minimal set of face scans.
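To make the idea of a “minimal set of face scans” concrete, here is a rough Python sketch. It is not the researchers’ method, which the article does not spell out. It assumes each motion capture frame is a flat list of marker coordinates and uses a simple greedy farthest-point rule: keep picking the frame that is worst represented until every frame is within a chosen tolerance of a picked one. The names `frame_distance`, `select_key_frames`, and `tolerance` are made up for this illustration.

```python
import math


def frame_distance(a, b):
    """Euclidean distance between two frames (flat lists of marker coordinates)."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def select_key_frames(frames, tolerance):
    """Greedy farthest-point selection (an illustrative assumption, not the paper's analysis).

    Returns indices of frames such that every frame lies within
    `tolerance` of at least one selected frame.
    """
    if not frames:
        return []
    selected = [0]
    # Distance from each frame to its nearest selected frame so far.
    nearest = [frame_distance(f, frames[0]) for f in frames]
    while True:
        worst = max(range(len(frames)), key=lambda i: nearest[i])
        if nearest[worst] <= tolerance:
            return selected
        selected.append(worst)
        for i, f in enumerate(frames):
            nearest[i] = min(nearest[i], frame_distance(f, frames[worst]))


# Five toy frames with one 2D marker each; three distinct "expressions".
frames = [[0.0, 0.0], [0.1, 0.0], [5.0, 5.0], [5.1, 5.0], [10.0, 0.0]]
key_frames = select_key_frames(frames, tolerance=0.5)
print(sorted(key_frames))
```

In this toy input the near-duplicate frames collapse, so only three of the five frames would need a laser scan. A real system would work with far more markers and frames and a more careful error measure.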

Xin Tong of Microsoft Research Asia is leading the research, with help from Jinxiang Chai, a Texas A&M professor, as well as Haoda Huang and Hsiang-Tao Wu, also both from Microsoft Research Asia. Tong says realistic face animation is the “holy grail” of computer graphics – the human face is powered by 52 muscles and is capable of so many facial expressions that current technology often makes the resulting animations look fake.

“We are very familiar with facial expressions, but also very sensitive in seeing any type of errors,” Tong said. “That means we need to capture facial expressions with a high level of detail and also capture very subtle facial details with high temporal resolution.”

The most obvious place Microsoft can use this technology is Avatar Kinect, which unsurprisingly also came out of Microsoft Research. If you were to wink, Kinect could detect the facial expression and have your avatar on the screen do the same, for example. Not every Microsoft Research project turns into a final product, but this is the most likely candidate.

“The character would be virtual, but the expressions real,” Tong said. “For teleconference applications, that could be very useful, for example, in a business meeting, where people are very sensitive to expressions and use them to know what people are thinking.”

Tong admits that there’s still work to be done. His team’s technology doesn’t yet capture synchronized eye or lip movements, for example. It also takes a lot of computing power and time – this is expected, but of course the researchers would like to reduce both (Tong wants the process to occur in real time).

Chai, Huang, Tong, and Wu will present their paper, titled “Leveraging Motion Capture and 3D Scanning for High-fidelity Facial Performance Acquisition” (PDF), at the SIGGRAPH 2011 computer graphics conference (August 7 to August 11, 2011) in Vancouver, British Columbia. If you want more technical details, but don’t want to read the whole 10-page paper, here’s the abstract:

This paper introduces a new approach for acquiring high-fidelity 3D facial performances with realistic dynamic wrinkles and finescale facial details. Our approach leverages state-of-the-art motion capture technology and advanced 3D scanning technology for facial performance acquisition. We start the process by recording 3D facial performances of an actor using a marker-based motion capture system and perform facial analysis on the captured data, thereby determining a minimal set of face scans required for accurate facial reconstruction. We introduce a two-step registration process to efficiently build dense consistent surface correspondences across all the face scans. We reconstruct high-fidelity 3D facial performances by combining motion capture data with the minimal set of face scans in the blendshape interpolation framework. We have evaluated the performance of our system on both real and synthetic data. Our results show that the system can capture facial performances that match both the spatial resolution of static face scans and the acquisition speed of motion capture systems.
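For readers unfamiliar with the “blendshape interpolation framework” the abstract mentions, the basic idea is that a face is built by adding weighted offsets from a neutral face. The Python sketch below shows only that generic formula, neutral plus the weighted sum of (scan minus neutral). It is a simplified illustration, not the paper’s implementation: the function name `blend` and the toy data are invented here, and the weights are simply given, whereas in the researchers’ system they would be driven by the motion capture data.

```python
def blend(neutral, scans, weights):
    """Return neutral + sum_i weights[i] * (scans[i] - neutral), per coordinate.

    `neutral` and each entry of `scans` are flat lists of vertex coordinates
    of the same length; `weights` holds one number per scan.
    """
    if len(scans) != len(weights):
        raise ValueError("need exactly one weight per scan")
    result = list(neutral)
    for scan, w in zip(scans, weights):
        if len(scan) != len(neutral):
            raise ValueError("every scan must match the neutral face in length")
        for j, (s, n) in enumerate(zip(scan, neutral)):
            result[j] += w * (s - n)
    return result


# Toy "faces" with three coordinates each.
neutral = [0.0, 0.0, 0.0]
smile = [1.0, 0.0, 0.0]
frown = [0.0, 2.0, 0.0]

# Half a smile mixed with a quarter of a frown.
face = blend(neutral, [smile, frown], [0.5, 0.25])
print(face)  # [0.5, 0.5, 0.0]
```

Setting every weight to zero gives back the neutral face, and a weight of one for a single scan reproduces that scan exactly, which is why a small, well-chosen set of scans can cover a wide range of expressions.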
