Google has been working on a wide range of AI-based projects lately – earlier this week, it showed off one that can identify what you’re trying to draw and surface clean clipart that resembles your doodle.

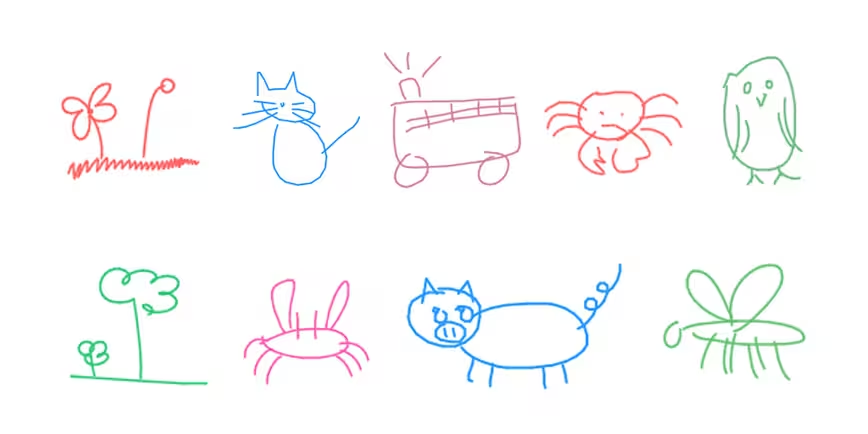

Its latest experiment is called Sketch-RNN, a neural network system that has learned to draw on its own by looking at roughly 5.5 million sketches from people who played Quick, Draw!, the AI-powered, Pictionary-style game Google released last year.

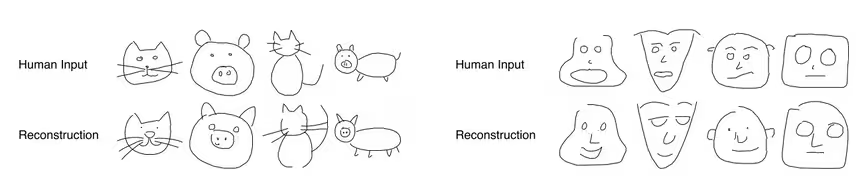

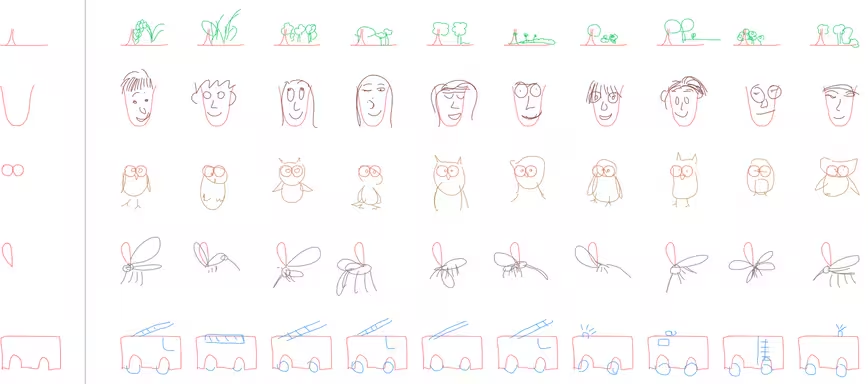

Having learned from sketches in 75 different categories, such as cats, pigs and trucks, the AI can now draw basic representations of these things when presented with hand-drawn sketches. It’s not merely copying what it’s fed; instead, it identifies what the input stands for and tries to create a unique doodle based on what it knows about each object.

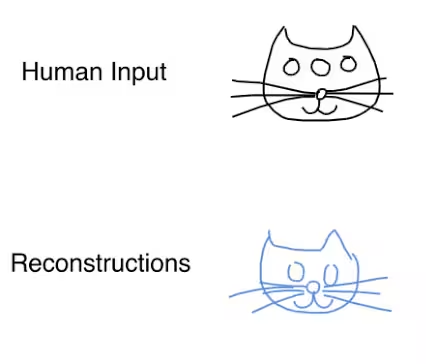

For example, you could give Sketch-RNN a drawing of a cat’s face with three eyes, and it’ll spit out another version with two eyes – because it’s been trained to understand that cats only have a pair of eyes.

Sketch-RNN can also draw without a starting sketch, and can even complete sketches that a human has started but not finished.

Sure, the sketches aren’t exactly photorealistic, but the idea here is to ‘train a machine to draw and generalize abstract concepts in a manner similar to humans’, and Google has achieved that.

The researchers behind the project believe that this tech could lead to interesting applications, such as using computers to teach people how to draw, and generating patterns with similar but unique shapes for textile designers.

The full paper is available here (PDF).
