Unlike most premium phones released over the past year, Google’s Pixel 2 and Pixel 2 XL don’t come with a dual rear camera – but they still manage to pull off impressive depth-of-field effects that separate the background from the foreground with natural-looking blur.

Google actually uses AI to pull that off – and now, the company has open-sourced a similar technology that developers can use in their third-party camera apps for high-quality Portrait Mode-style shots like the one below.

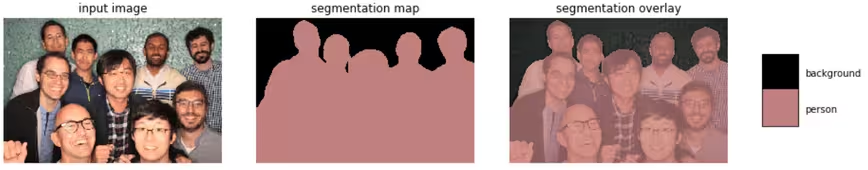

It’s called DeepLab v3+, and it’s essentially a semantic image segmentation model: a neural network that assigns a label, such as “person” or “dog”, to every pixel in an image, which lets it trace the outlines of objects in your camera’s field of view. The most obvious use for this is creating depth-of-field effects, but it can also help identify objects.
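A segmentation model only tells an app which pixels belong to the subject; producing the Portrait Mode look is a separate compositing step. The sketch below illustrates that step under stated assumptions: it takes an already-computed binary mask (1 = foreground, as a model like DeepLab v3+ might produce for the “person” class), uses a simple box blur as a stand-in for the more lens-like blur real camera apps use, and works on a tiny grayscale image stored as nested lists. It does not run DeepLab, it is not how the Pixel 2 camera works, and the names `box_blur` and `portrait_effect` are hypothetical.

```python
from typing import List

Image = List[List[float]]  # grayscale pixel intensities (hypothetical format)
Mask = List[List[int]]     # assumed segmentation output: 1 = foreground, 0 = background


def box_blur(img: Image, radius: int = 1) -> Image:
    """Average each pixel with its neighbours in a (2r+1) x (2r+1) window, clipped at the edges."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total, count = 0.0, 0
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    ny, nx = y + dy, x + dx
                    if 0 <= ny < h and 0 <= nx < w:
                        total += img[ny][nx]
                        count += 1
            out[y][x] = total / count
    return out


def portrait_effect(img: Image, mask: Mask, radius: int = 1) -> Image:
    """Keep foreground pixels sharp and swap background pixels for blurred ones."""
    blurred = box_blur(img, radius)
    return [
        [img[y][x] if mask[y][x] else blurred[y][x] for x in range(len(img[0]))]
        for y in range(len(img))
    ]


# Usage: a bright 2x2 "subject" in the middle of a dark 4x4 frame.
image = [[0, 0, 0, 0], [0, 9, 9, 0], [0, 9, 9, 0], [0, 0, 0, 0]]
mask = [[0, 0, 0, 0], [0, 1, 1, 0], [0, 1, 1, 0], [0, 0, 0, 0]]
result = portrait_effect(image, mask)
```

The subject pixels stay at 9, while the surrounding background picks up a soft glow from the blur (for example, the top-left corner becomes 2.25). The quality of the final shot depends heavily on how precisely the mask follows the subject’s edges, which is why more refined boundary detection matters.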

Google has been working on it for the past three years, and says that this version allows for significantly more refined boundary detection. And to be clear, this isn’t the exact same tech that powers the Pixel 2 camera (which also has a dedicated custom chip for image processing), but developers should be able to get similar results.

You can read more about how DeepLab v3+ works on this page, and find the code over in this GitHub repository.
