The minute hand on the robot apocalypse clock just inched a little closer to midnight. DeepMind, the Google sister-company responsible for the smartest AI on the planet, just taught machines how to figure things out for themselves.

Robots aren’t very good at exploring on their own. An AI that exists only to parse data, such as a neural network that decides whether something is a hotdog or not, has relatively little to concentrate on compared to the near-infinite number of things a physical robot has to figure out.

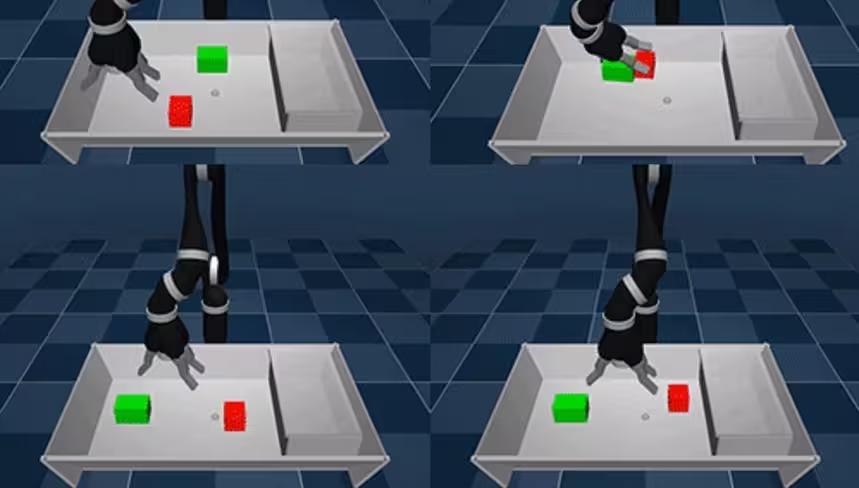

To solve this problem, DeepMind built a new learning paradigm for AI-powered robots called ‘Scheduled Auxiliary Control (SAC-X).’ This new paradigm gives a robot a simple goal like ‘clean up this playground’ and rewards it for completing that goal.

According to a DeepMind blog post:

> The auxiliary tasks we define follow a general principle: they encourage the agent to explore its sensor space. For example, activating a touch sensor in its fingers, sensing a force in its wrist, maximising a joint angle in its proprioceptive sensors or forcing a movement of an object in its visual camera sensors.

The researchers don’t tell the robot how to complete the task; they simply equip it with sensors (which are initially turned off) and let it fumble around until it gets things right.

By exploring its environment and testing the functionality of its sensors, the robot is able to eventually earn its reward: a point. If it fails, it doesn’t get a point.
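To make the reward idea concrete, here is a minimal Python sketch of the scheme described above: one all-or-nothing point for the main task, plus small auxiliary rewards for making a sensor fire. It is an illustration only, not DeepMind’s implementation. The sensor names, the force threshold, and the uniform-random `pick_task` scheduler are all assumptions made for this example, and the sketch does not include any learning.

```python
import random
from typing import Callable, Dict

# Hypothetical sensor snapshot; every key name below is made up for illustration.
Observation = Dict[str, float]


def main_task_reward(obs: Observation) -> float:
    """Sparse main reward: one point if the task is done, otherwise nothing."""
    return 1.0 if obs["task_complete"] > 0.5 else 0.0


def touch_reward(obs: Observation) -> float:
    """Auxiliary reward: a point for activating the finger touch sensor."""
    return 1.0 if obs["finger_touch"] > 0.0 else 0.0


def wrist_force_reward(obs: Observation) -> float:
    """Auxiliary reward: a point for sensing a wrist force (threshold is assumed)."""
    return 1.0 if abs(obs["wrist_force"]) > 0.1 else 0.0


# Every task the robot could be asked to pursue, keyed by an illustrative name.
REWARDS: Dict[str, Callable[[Observation], float]] = {
    "main": main_task_reward,
    "touch": touch_reward,
    "wrist_force": wrist_force_reward,
}


def pick_task(rng: random.Random) -> str:
    """Stand-in scheduler: choose the next task to pursue uniformly at random."""
    return rng.choice(sorted(REWARDS))


if __name__ == "__main__":
    # The fingers are touching something, but the main task is not finished.
    obs = {"task_complete": 0.0, "finger_touch": 1.0, "wrist_force": 0.0}
    task = pick_task(random.Random(0))
    print(task, REWARDS[task](obs))
```

The point of the auxiliary rewards is that they fire far more often than the main one, so a fumbling robot gets some feedback long before it ever completes the full task.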

Watching a robot arm fumble around in a box may not seem impressive at first, especially if you’ve seen similar-looking robots build furniture. But the amazing part is that this particular machine isn’t following a program or doing something it was designed for. It’s just a robot trying to figure out how to make a human happy.

And this work is important: it’ll change the world if DeepMind or another AI company can perfect it. Right now, there isn’t a robot in existence that could walk or roll into a strange house and tidy up. Making a bed, emptying the trash, or putting on a pot of coffee are all extremely complex tasks for AI. There’s a near-infinite number of ways each task could be performed – more if the robot is allowed to use flamethrowers and make insurance claims.

At the end of the day, we’re still a long way from “Rosie the Robot” from “The Jetsons.” But, if DeepMind has anything to say about it, we’ll get there. And it all starts with a robot arm learning how to play with blocks by itself.

