Since the days of punch cards, the human-computer interaction landscape has undergone great developments. Scientists are constantly trying to find new ways to bridge the gap between man and machine. The effort has led to the invention of keyboards, mice and touch screens, which made computational power more accessible.

Computers themselves have undergone great changes. We gradually moved from mainframe computers to PCs, laptops, smartphones and beyond. There is now practically a computer in everything, from coffee makers to vehicles, airplane engines and the human body.

Now, Artificial Intelligence breakthroughs are causing a revolution in the space. A quick look back shows how far we’ve come in the past few years. These AI-related technologies are silently redefining the way we make our intentions known to computers.

Natural language processing (NLP)

For decades, we’ve dreamed of computers that understand human speech as it is spoken. Natural Language Processing (NLP) is the technology that is making that wish come true.

Thanks to NLP, we no longer need to interact with computers through rigid user interfaces and limited voice commands. NLP has helped Google’s search engine become much smarter at pulling up results. It’s now much better at answering questions such as “How do I get to the nearest hospital?” or requests such as “Give me the best songs of 2017.”

NLP and AI-enhanced speech recognition have both contributed greatly to the rise in popularity of voice search.

You can see the power of NLP in AI-powered chatbots. The fluid and natural experience these chatbots provide can potentially make messaging apps a replacement for specialized apps.

NLP is the technology that makes it possible for Alexa, Siri, Cortana and other smart assistants to work their magic. Last year, the creators of AI assistant Viv showed how NLP would facilitate interaction with various online services. As the presentation shows, the assistant is smart enough to process loosely formed questions about the weather, and it knows what to do when you tell it to send $20 to a friend.

NLP is also changing the way we interact with more complex systems. For instance, NLP-powered analytics tools make it much easier to run queries against datasets. By lowering the technical skill needed to work with data, Artificial Intelligence can help put more people in tech jobs.
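To make the idea of querying a dataset in plain language more concrete, here is a minimal Python sketch. It is not how any real analytics product works: the `SALES` data, the field names and the keyword rules in `parse_question` are all hypothetical, and simple pattern matching stands in for actual NLP. It only illustrates the general step of turning a loosely phrased question into a structured filter.

```python
import re

# Hypothetical toy dataset; the rows and field names are illustrative only.
SALES = [
    {"region": "north", "year": 2016, "revenue": 120},
    {"region": "south", "year": 2016, "revenue": 95},
    {"region": "north", "year": 2017, "revenue": 150},
    {"region": "south", "year": 2017, "revenue": 110},
]


def parse_question(question):
    """Map a loosely phrased question to (filters, aggregate).

    Assumption: keyword and regex rules stand in for a real NLP model.
    """
    text = question.lower()
    filters = {}

    # Pick up a four-digit year if the question mentions one.
    year = re.search(r"\b(19|20)\d{2}\b", text)
    if year:
        filters["year"] = int(year.group(0))

    # Pick up a region name if the question mentions one.
    for region in ("north", "south"):
        if re.search(rf"\b{region}\b", text):
            filters["region"] = region

    # Default to a total unless the question asks for an average.
    aggregate = "average" if re.search(r"\b(average|mean)\b", text) else "total"
    return filters, aggregate


def answer(question, rows=SALES):
    """Answer a revenue question, or return None if no rows match."""
    filters, aggregate = parse_question(question)
    matched = [r for r in rows if all(r[k] == v for k, v in filters.items())]
    values = [r["revenue"] for r in matched]
    if not values:
        return None
    total = sum(values)
    return total / len(values) if aggregate == "average" else total


print(answer("What was the total revenue in the north in 2017?"))
print(answer("average revenue for 2016, please"))
```

The person asking never has to know the field names or write a query. A real tool would replace the hand-written rules with a trained language model, but the overall shape (question in, structured query out) stays the same.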

Computer vision

Scientists have been tackling the challenge of making computers understand the content and context of images for decades. Thanks to progress in deep learning and neural networks, we’ve made great inroads in the field of computer vision. Though we still have a long way to go, the results so far are amazing.

Eye tracking, the technology that measures where and how a person’s gaze moves, has benefited immensely from these advancements. While it has been around for decades, eye tracking was previously confined to research labs because of its cost.

Now, Artificial Intelligence and computer vision have made eye tracking more efficient and affordable. Machine learning algorithms can turn consumer-level mobile and web cameras into eye tracking devices. This puts the benefits of eye tracking at the disposal of more people and opens up entirely new possibilities.

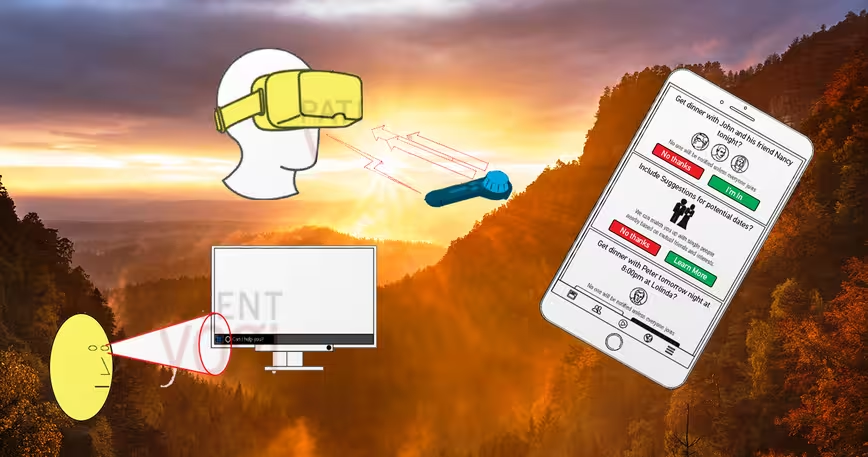

Eye tracking technology can enhance many domains, including gaming, virtual reality and marketing research. But perhaps more importantly, eye tracking can make it possible for people with physical disabilities to interact with computers. A number of innovations such as gaze keyboards and eye-tracking–powered control panels are making this possible.

Computer vision is also making it possible to interact with computers through hand gestures instead of using controllers and props.

Thanks to computer vision, computers can infer human intent without the active involvement of the subject. You can see this in smart vehicles that detect driver drowsiness and distraction. Computer vision is also enabling software to read and react to facial expressions. Eerily, you might be interacting with computers without knowing it. Amazon’s cashierless retail store gives a glimpse of what computer vision might accomplish in the near future.

Neurotechnology

Being able to send commands to your computer through your thoughts used to be the stuff of sci-fi movies. But developments in the past couple of years show that we might not be too far from willing our computers to do our bidding.

Facebook recently revealed an initiative to build a computer interface for the human brain. The company aims to enable you to type a hundred words per minute with your mind.

A big investor in Augmented and Virtual Reality, Facebook also wants to let users navigate AR/VR environments with their minds instead of controllers. Instead of using implants, Facebook hopes to use sensors that can read brain activity through optical imaging technology.

Elon Musk, the man known as the real-life Tony Stark, is also venturing into neurotech. The CEO of Tesla recently launched Neuralink, a company that is researching methods to upload and download thoughts.

Other interesting projects include Emotiv, a company whose headset is supposed to help you manipulate objects with your mind.

There are numerous ways these technologies can enhance the human-computer experience, including by helping people with physical disorders. But they also open up a Pandora’s box of malicious uses, such as stealing sensitive information from your brain after manipulating you into thinking about it.

What the future holds is anyone’s guess. I don’t think your keyboard and mouse will go away anytime soon. But they will become less prominent as we find more ways to interact with computers, wittingly or unwittingly. The promises of AI-powered interfaces are great, but so are the challenges.
