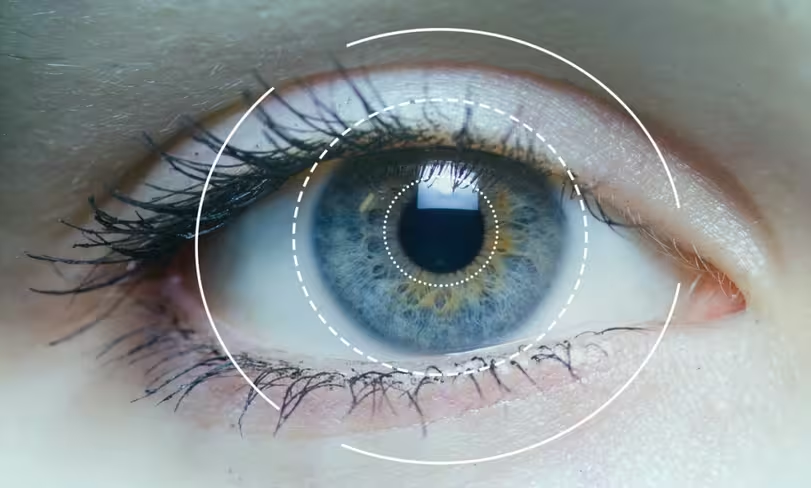

Thanks to advances and breakthroughs in hardware, software and artificial intelligence, eye tracking technology has progressed immensely in the past year and is now drawing the attention of the tech industry’s biggest players.

The acquisitions of eye tracking companies EyeFluence and Eye Tribe by Google and Facebook, respectively, as well as Tobii Tech’s move toward mobile eye tracking, are a prelude to how human-computer interaction is bound to be transformed in the near future.

From monitors and laptops to smartphones and VR headsets, as eye tracking tech slowly finds its way into more and more devices, here are four key areas that will likely be immensely affected by the technology that measures and responds to human eye motion.

Virtual reality

2016 was certainly a huge year for VR, with major product releases such as Oculus Rift, HTC Vive and PlayStation VR. But VR is all about immersion, and in this respect, eye tracking technology is likely to be a crucial component of next generation headsets.

“A VR headset without eye tracking will assume that I am speaking to the person in front of my forehead,” says Oscar Werner, VP of Tobii. “Our real interest is where I am looking, and there is often a difference between where I look and the direction of my head. VR headsets need to take your gaze into account to become truly immersive.”

Eye tracking will enable VR rendering engines to eliminate the graphics distortions that currently result from not knowing the user’s gaze direction, Werner says.

Moreover, eye tracking is a key element in foveated rendering, a technique that allocates more resources to the area under the user’s direct gaze and renders the rest of the frame in lower quality. The savings in memory and resources are enormous (Werner estimates a 30 to 70 percent decrease in the number of pixels drawn) and could enable manufacturers and developers to create realistic graphics with much less processing power.
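To get a feel for where savings of that size could come from, here is a rough back-of-the-envelope sketch. It is not how any particular headset or engine implements foveated rendering. It assumes a circular full-quality region around the gaze point that fits entirely inside the frame, and a periphery rendered at a reduced resolution scale. The function names, the 2160x1200 frame size, the 300-pixel radius and the 0.5 scale are all illustrative assumptions.

```python
import math


def foveated_pixel_count(width, height, fovea_radius, periphery_scale):
    """Estimate pixels shaded per frame with a simple two-zone model.

    Assumption: the foveal circle lies fully inside the frame, and the
    periphery is rendered at `periphery_scale` of full resolution on each
    axis (so its pixel count shrinks by the square of that factor).
    """
    total = width * height
    fovea_area = min(math.pi * fovea_radius ** 2, total)
    periphery_area = total - fovea_area
    return fovea_area + periphery_area * periphery_scale ** 2


def pixel_savings(width, height, fovea_radius, periphery_scale):
    """Fraction of pixels saved compared with rendering the full frame."""
    full = width * height
    foveated = foveated_pixel_count(width, height, fovea_radius, periphery_scale)
    return 1.0 - foveated / full


if __name__ == "__main__":
    # Hypothetical numbers: a 2160x1200 frame, a 300-pixel foveal radius,
    # and a periphery rendered at half resolution on each axis.
    saved = pixel_savings(2160, 1200, fovea_radius=300, periphery_scale=0.5)
    print(f"Pixels saved: {saved:.0%}")
```

With these made-up parameters the estimate lands inside the 30 to 70 percent range Werner cites, but the real figure depends heavily on the display, the size of the full-quality region and how aggressively the periphery is downscaled.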

Fove, a Kickstarter-funded project, is the first VR headset to have embedded eye tracking. Google and Oculus are also working on incorporating eye tracking into their next line of VR products. And eye tracking company SMI is partnering with VR manufacturers to bring the technology to both standalone VR HMDs and smartphone slot-ins.

Gaming

The biggest challenge gamers have to overcome is making their intentions known to computers and consoles, and a large part of that is making the computer understand where they are looking. Eye tracking has the potential to remove that hurdle, which is one of the most challenging aspects of engaging with games.

Considerable effort in the development of peripherals goes into easing the navigation of game worlds and interfaces. With eye tracking, navigating and interacting with a game’s interface will be as easy as looking in the desired direction or at the item of choice.

Whether you want to hack at an object, aim at a target in an FPS, designate a location for your troops to move to, or simply change the direction of the point-of-view camera, eye tracking will make it a whole lot easier to interact with games. This could potentially spell the end of controller and mouse handling.

Tobii has already integrated the technology into several games, including Rise of the Tomb Raider, Deus Ex and Watch Dogs 2. While eye tracking integration will probably make games less challenging, it will pave the way for creating faster paced games.

Aside from that, eye tracking can make game interfaces less cluttered and less intrusive. For instance, maps, control panels and other UI elements can remain hidden, providing gamers with a richer view of the game environment, and become visible only when the user’s gaze is directed toward them.
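The idea of UI elements that appear only when looked at can be sketched as a simple hit test between the gaze point and each element’s screen rectangle. This is a minimal illustration, not the approach used by Tobii or any specific game. The `UIElement` class, the `elements_to_show` function and the `margin` parameter (extra slack around each element to absorb tracker noise) are hypothetical names chosen for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UIElement:
    """A rectangular UI element in screen coordinates (pixels)."""
    name: str
    x: float
    y: float
    width: float
    height: float


def elements_to_show(gaze_x, gaze_y, elements, margin=20.0):
    """Return the names of elements whose (padded) rectangle contains the gaze point."""
    shown = []
    for e in elements:
        inside_x = e.x - margin <= gaze_x <= e.x + e.width + margin
        inside_y = e.y - margin <= gaze_y <= e.y + e.height + margin
        if inside_x and inside_y:
            shown.append(e.name)
    return shown


if __name__ == "__main__":
    # Hypothetical layout on a 1920x1080 screen: a minimap in the top-right corner.
    hud = [UIElement("minimap", x=1700, y=20, width=200, height=200)]
    print(elements_to_show(1800, 100, hud))  # gaze on the minimap
    print(elements_to_show(960, 540, hud))   # gaze at the screen center
```

A real implementation would likely also smooth the gaze signal and require a short dwell before revealing an element, so that the interface does not flicker as the eye darts across the screen.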

You can also expect the next generation of games to feature gaze-aware objects and characters. For instance, you might incite a fight at a tavern if you stare too long at some surly mercenary, or at his purse maybe.

Advertising

Eye tracking can also revamp the entire advertising industry. In their current state, ads are measured by impressions, which is not a very precise metric.

“The advertising industry is currently in the midst of some major upheaval when it comes to universal standards for measuring ad impact,” says Dominic Porco, CEO at Impax Media, a digital advertising company. “The whole concept of ‘viewability’ is now being redefined to make more sense in the age of ad blockers and bot traffic.”

When eye tracking becomes an inherent part of all computing devices, ad campaigns will be able to take into account the number of times ads are actually looked at. That’ll take a while to become reality, but until then, eye tracking is already showing promise in other fields where digital ads are involved.

Impax Media is using eye tracking technology along with other computer vision techniques to collect attention metrics from its proprietary in-store advertising screens, called Tru View. “We’re big believers that the future of the ad industry is going to be grounded in attention metrics, as opposed to impressions, and eye tracking is, hands down, the best way to track attention,” Porco says.

Thanks to eye tracking technology, Tru View measures total views and view durations for any piece of content in the ad loop. Leveraging other image analysis tools, Tru View also extracts the age range and gender of viewers. The data helps advertisers and location partners to assess audience interest in various messaging angles, and to correlate this information with parameters like location, timing and demographics.
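As a rough illustration of what “total views and view durations” could mean in practice, the sketch below turns a stream of timestamped gaze samples into a list of view durations. It is not Impax Media’s method, which the article does not describe. The `GazeSample` type, the `view_durations` function and the `min_duration` threshold are assumptions made for this example: a view starts when the gaze lands on the ad and ends at the first sample where it leaves, or at the last sample if the stream ends mid-view.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GazeSample:
    """One eye tracker reading: a timestamp in seconds and whether gaze is on the ad."""
    t: float
    on_ad: bool


def view_durations(samples, min_duration=0.0):
    """Group consecutive on-ad samples into views and return their durations in seconds."""
    durations = []
    start = None   # timestamp at which the current view began
    last_on = None  # timestamp of the most recent on-ad sample
    for s in samples:
        if s.on_ad:
            if start is None:
                start = s.t
            last_on = s.t
        elif start is not None:
            # Gaze left the ad: close the view at this sample's timestamp.
            durations.append(s.t - start)
            start = None
    if start is not None:
        # Stream ended while the viewer was still looking at the ad.
        durations.append(last_on - start)
    return [d for d in durations if d >= min_duration]


if __name__ == "__main__":
    samples = [
        GazeSample(0.0, True), GazeSample(0.1, True), GazeSample(0.2, True),
        GazeSample(0.3, False), GazeSample(0.4, False),
        GazeSample(0.5, True), GazeSample(0.6, True),
    ]
    views = view_durations(samples)
    print(f"Total views: {len(views)}, total attention: {sum(views):.1f}s")
```

Setting `min_duration` filters out glances too brief to count as attention, which is one way such a metric could differ from a plain impression count.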

Collecting user information is always a gray area that straddles privacy concerns and regulations. Porco says that the technology avoids collecting any data that would uniquely identify viewers, such as the spacing between facial features.

Market research

Much of customers’ interaction with products and services happens through their gaze. By measuring where customers look, eye tracking is opening up unprecedented possibilities for both lab and real-world neuromarketing tests.

It is important for market researchers to “evaluate people’s interactions and expectations across the whole omnichannel customer journey and its key touchpoints,” says Simone Benedetto, UX researcher at TSW, an Italy-based market research lab.

With eye tracking, Benedetto explains, instead of relying on surveys, you’ll be able to collect objective data from the eyes of users while they’re interacting with a product or service.

TSW uses mobile eye tracking units along with other wearables in order to collect customer metrics on a wide variety of products and services, both digital (such as online ads, mobile apps, websites, software and device control panels) and physical (such as print material, product packages, cars, home furniture and retail stores).

Gaining insights on natural interaction with products and services enables researchers to identify real usability problems and frustration points and make decisions that improve customer satisfaction and engagement.

“From my perspective there’s a huge market behind the exploitation of eye tracking into UX-neuromarketing investigations,” Benedetto says. “Eye tracking allows the implicit measurement of user behavior, and turns that measurement into quantitative objective data. We have only relied on subjective data for years, and it’s definitely time for a change.”

Gazing ahead

As sight is perhaps the most used human sense, being able to transform it into a human-computer interaction medium can have huge implications for the future of computing.

Werner says he believes a new paradigm of PC usage will emerge, where eye tracking is a fifth modality that, in combination with touch screens, mouse/touchpad, voice and keyboard, will make computers much more productive and intuitive.

“Gaze always precedes any kind of action that you do with mouse, keyboard and voice, so much smarter user interactions will be designed using these technologies,” he says.
