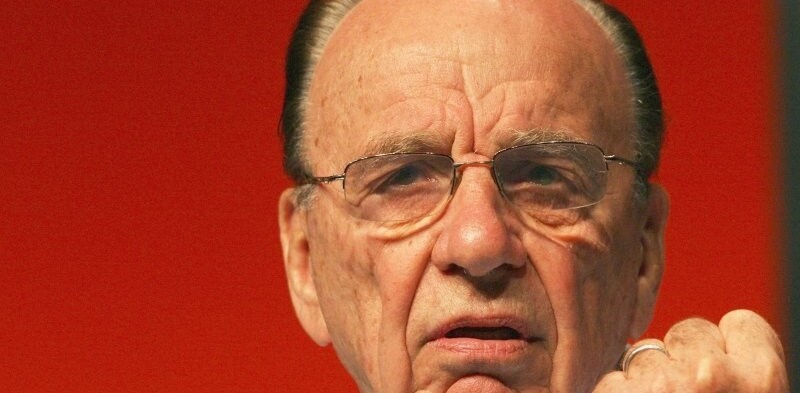

Remember when Rupert Murdoch caused a stir by saying that he was going to start blocking news search services like Google News from carrying his sites’ stories? Well, it looks like he’s started.

News aggregator NewsNow is claiming that Murdoch’s UK-based Times Online website has started blocking it from indexing stories.

The block has been put in place via the Times Online robots.txt file, a simple change for any website owner to make. NewsNow’s Struan Bartlett said: “It is lamentable that (Murdoch’s company) News International has chosen to request we stop linking to their content and providing in-bound traffic and potential subscribers to the Times Online and right now it looks as though NewsNow has been singled out.”

It’s understandable that NewsNow would feel aggrieved by the situation: its site is essentially a list of links to news stories. It resembles a link-sharing service like Reddit more than the almost magazine-like Google News.

NewsNow is a minnow in the news search business compared to Google News, and it could very well be that Murdoch is ‘testing the water’ by blocking just one small aggregation service from accessing one of his many news sources.

Murdoch has said previously that he would put paywalls up around much of his online news content, accusing aggregators like Google News of “stealing” the hard work of his journalists. How successful his plans will be remains to be seen, but if they are on course, the first of his UK news properties should be behind a paywall within months.

UPDATE:

Indeed, checking the contents of www.timesonline.co.uk/robots.txt reveals the following rule:

    #Agent Specific Disallowed Sections
    User-agent: NewsNow
    Disallow: /
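For the curious, here is a minimal sketch of how a well-behaved crawler might interpret that rule, using the robots.txt parser in Python's standard library. The rule text is copied from the file quoted above; the `is_allowed` helper, the story URL and the choice of Googlebot as a comparison agent are our own assumptions for illustration, not anything NewsNow or the Times has published.

```python
from urllib.robotparser import RobotFileParser

# The rule as quoted from www.timesonline.co.uk/robots.txt
ROBOTS_TXT = """\
#Agent Specific Disallowed Sections
User-agent: NewsNow
Disallow: /
"""


def is_allowed(user_agent: str, url: str, robots_txt: str = ROBOTS_TXT) -> bool:
    """Return True if robots_txt permits user_agent to fetch url.

    Hypothetical helper: parses the rules from a string instead of
    fetching them over the network.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    # Hypothetical story URL, used only to exercise the rule.
    story = "http://www.timesonline.co.uk/some/story"
    print(is_allowed("NewsNow", story))    # False: "Disallow: /" covers every path
    print(is_allowed("Googlebot", story))  # True: no rule in this snippet names it
```

Bear in mind that robots.txt is only a request. It stops crawlers that choose to honour it and does nothing to technically prevent access.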

We’ll be keeping an eye on that file to see if it grows over time…
