Researchers affiliated with WHO’s International Agency for Research on Cancer (IARC) have been exposed for manipulating images in multiple research papers they have published over the years.

Between 2005 and 2014, Massimo Tommasino, head of the Infections and Cancer Biology Group at IARC in Lyon, France, and his former colleague there, Uzma Hasan, published multiple papers with doctored images.

Manipulated images in studies can be anything from microscopic views of cells or tissues to images of glowing gels indicating chemical concentrations, or even graphical representations of data. Tweaking an image in a research paper can have dire consequences.

The quality of images used in a paper is reflective of the standard of the research, and this must be earned ethically. As one paper in the Journal of Cell Biology explains:

The quality of an image has implications about the care with which it was obtained, and a frequent assumption (though not necessarily true) is that in order to obtain a presentation-quality image, you had to carefully repeat an experiment multiple times.

This particular incident involving the WHO researchers came to light last week after investigative science journalist Leonid Schneider exposed the manipulated images on his blog, For Better Science. In his blog post, Schneider described how certain parts of the images in Tommasino and Hasan’s papers were duplicated from other portions.

https://twitter.com/schneiderleonid/status/1050484112472051712

The exposé was based on a tip-off on PubPeer, a discussion forum on published research. Two months ago, an anonymous user reported the malpractice in the comments section under the authors’ papers.

The problematic practice of image manipulation has been steadily growing in academic circles over the years. A study conducted by Stanford microbiologist Elisabeth Bik estimated that nearly 35,000 research papers in the biomedical literature are candidates for retraction due to image duplication. She submitted her findings to the preprint server bioRxiv in June, and continues to expose cases of doctored images in published research papers.

The fact that peer reviewers generally do not analyze the original data used in a study makes the situation worse. Bik told TNW:

If a paper contains one or more manipulated images, it is hard to trust any other data in that paper. How sure can we be to trust the data shown in tables or line graphs, if other parts of the paper are fabricated? I would not say that the results are flawed, and the fabricated image might only represent a small experiment, but it becomes harder to trust all other results presented in that paper.

From the same publisher.

Just live tweeting what I find when I am scanning scientific papers for these types of duplications. pic.twitter.com/lSck6av1Gd— Elisabeth Bik (@MicrobiomDigest) August 27, 2017

Schneider added that the number of papers with such malpractice has grown since photo editing applications became commonplace. “[Manipulated] images of gels and microscopy were most predominant in life science papers in around the decade 2000-2010, that also coincided with the advance of Photoshop technology [sic],” said Schneider.

Next findings: 2 duplicated panels in the same figure, representing two distinct anatomical sites. This looks like an error. pic.twitter.com/tR4q9cFWvG

— Elisabeth Bik (@MicrobiomDigest) August 28, 2017

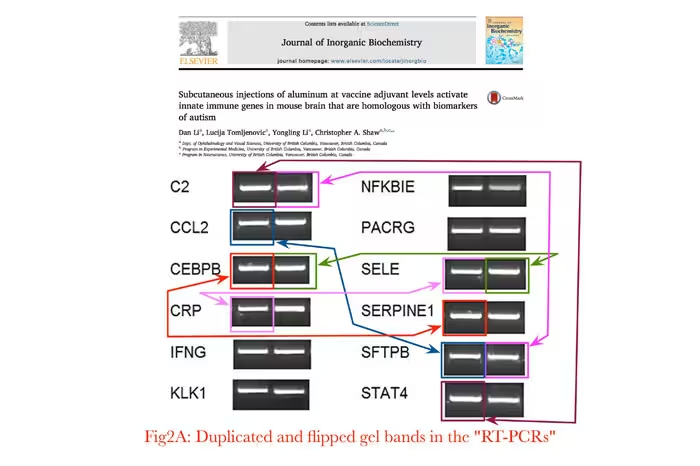

Journal publishers should worry about image manipulation because it has the potential to change the narrative on some very controversial research topics. For instance, a paper published last year suggested that vaccines could trigger biological responses “consistent with autism.” But on closer inspection, the images used in the study were found to be doctored. The paper was soon retracted.

The major problem with image falsification, Schneider feels, lies in the process of peer review, in which a paper is reviewed by other experts in the same field before it is published. While the process can detect poorly done science, it fails at spotting data manipulation or other fraud. And when journals separately employ image integrity analysts, they can only screen newly submitted manuscripts, not the ones already published.

Even for newly submitted studies, it often takes more than a well-trained eye to spot such manipulations. This is where digital forensics tools come in handy. Software such as the tool hosted at 29a.ch is used to spot deliberate alterations to images in research papers. Training people to use such resources takes time, but as these troubling findings show, such processes are essential for guaranteeing authenticity and scientific validity.
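To give a rough sense of what automated duplication checks involve, here is a minimal sketch in Python. It is not how 29a.ch or any journal's screening software works; it is a simplified illustration that only catches pixel-for-pixel copies within a single grayscale image. The function name `find_duplicate_blocks`, the `block` parameter, and the synthetic test image are all assumptions made for this example. Real forensic tools must also cope with rotation, rescaling, contrast changes, and compression noise, none of which this sketch handles.

```python
from collections import defaultdict


def find_duplicate_blocks(image, block=4):
    """Group top-left (row, col) positions whose block x block pixels match exactly.

    `image` is a grayscale picture given as a list of equal-length rows of
    integers. Flat (single-value) blocks are skipped, because uniform
    backgrounds repeat naturally and would drown out real findings.
    """
    height, width = len(image), len(image[0])
    seen = defaultdict(list)
    for r in range(height - block + 1):
        for c in range(width - block + 1):
            pixels = tuple(
                image[r + dr][c + dc]
                for dr in range(block)
                for dc in range(block)
            )
            if len(set(pixels)) > 1:  # ignore featureless blocks
                seen[pixels].append((r, c))
    # Keep only pixel patterns that occur at more than one position.
    return [positions for positions in seen.values() if len(positions) > 1]


# Synthetic 12x12 "image" in which every pixel value is unique...
image = [[r * 12 + c for c in range(12)] for r in range(12)]

# ...then simulate a manipulation: copy the 4x4 patch at (1, 1) to (6, 7).
for dr in range(4):
    for dc in range(4):
        image[6 + dr][7 + dc] = image[1 + dr][1 + dc]

matches = find_duplicate_blocks(image)
print(matches)  # [[(1, 1), (6, 7)]]
```

The sketch flags the copied patch because identical blocks share the same key in the lookup table. A match only indicates that two regions are identical; as Bik's own comments on her findings suggest, deciding whether a duplication is an error or deliberate still takes human judgment.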

While some journals do employ image integrity analysts to screen all accepted submissions, there is still a need for a deterrent among researchers. “There is so much for fraudsters to gain from image manipulation and too little to fear,” notes Schneider.

Even in the current case, although the WHO is required to immediately investigate the implications of the manipulation through an external expert committee free of conflicts of interest, the agency has not yet responded. We’ll update this post as we learn more.
