It’s kind of poetically funny to post an iPhone camera review during the week of the new Pixel launch. Google’s phones have been fierce, and often overpowering, rivals to iPhones, especially in the camera department.

Last month, when Apple announced its new set of iPhones, it talked about cameras and their new features a lot. So, it was time to test out whether the company’s tall claims were true. Just so you know, we’ve used the iPhone 11 for this review.

Camera specs

- Rear camera: 12-megapixel wide sensor with f/1.8 aperture + 12-megapixel ultra-wide sensor with f/2.4 aperture, 120° field of view

- Front camera: 12-megapixel TrueDepth sensor with f/2.2 aperture

Daylight photos

Photos snapped from the iPhone in good lighting conditions have always been good. But in the past, a lot of people found them a bit dull in comparison to snaps taken with Pixel or Samsung Galaxy phones.

What’s great about the daylight photos is that colors seem true to life even when you view them on a bigger screen. Plus, the iPhone’s algorithm doesn’t try to smooth out imperfections in an object or a person’s skin, so photos look more impressive.

But as my colleague Mix pointed out in his review of the Huawei P30 Pro for street photography, there’s a certain lag between when you tap the capture button and when the photo is taken, and that holds on the iPhone 11 too. So you might want to avoid shooting fast-moving subjects.

The primary sensor is a definite improvement over the iPhone Xs’ camera: pictures are no longer the hazy mess they were on last year’s phone. While I haven’t been able to compare it with the latest Pixel 4 (it’s not landing in India), image quality is comparable to, and in many instances better than, that of the Pixel 3.

Selfies are great in daylight, but suffer a bit in low light. The NeuralCam app offers a night mode for the front camera if you want to try it out, and it’s not bad.

Wide-angle camera

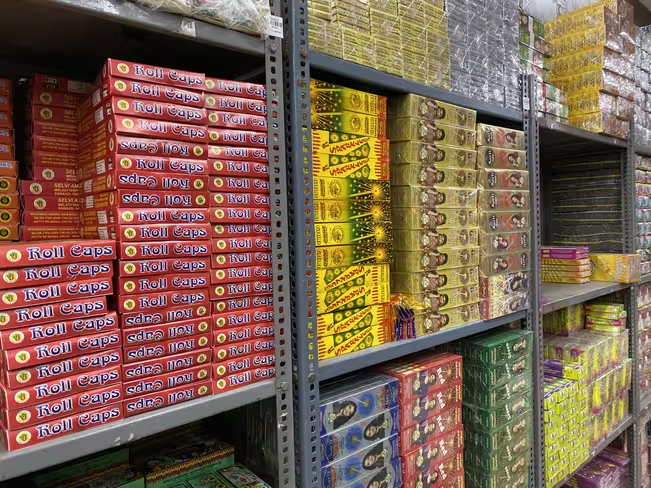

Apple added a wide-angle camera to both the iPhone 11 and iPhone 11 Pro. I really love wide-angle cameras. They add a lot of drama and fun to a photographer’s arsenal. The new 12-megapixel wide-angle lens shoots with a 120-degree field of view. While photos taken with this lens do have a bit of distortion at the edges, it’s not a distracting amount. With the right angle, you can actually get a great fish-eye effect too.

However, wide-angle photos do suffer from a bit of highlight clipping and loss of detail (human skin, objects, etc.). But I’m sure Apple can fix this with a software update.

Night mode

This was the mode everyone was waiting for, and I can tell you it was worth the wait.

Apple engineers deserve a pat on the back for the way the new camera handles light sources in low-light conditions. There’s a minimal amount of contrast or highlight clipping. I didn’t notice too much lens flare either.

An app called NeuralCam tried to bring night mode to older iPhones, and it did a decent job. But Apple’s efforts are much more refined. You will still find some over-saturation and blown-out highlights in pictures taken in very low light. However, that’s mostly the state of the night mode algorithm in every phone.

Here’s what you need to know when using this mode: you can’t trigger it manually; it activates automatically when the light level drops below 10 lux. You can adjust the exposure time with a slider or turn the mode off entirely.

In the night mode, the camera takes multiple frames at varying exposures, and combines them using an algorithm similar to smart HDR to reduce noise and adjust color. You can read about how the algorithm works here.
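To get a feel for why combining frames cuts noise, here is a rough Python sketch. It is not Apple’s algorithm, whose details aren’t covered in this review; it only illustrates the general idea of merging several exposures of the same scene. The function names, the exposure values, and the noise level are all made up for illustration, and the sketch assumes the frames are already perfectly aligned and never clip.

```python
import random

def capture_frame(scene, exposure, noise_sigma, rng):
    """Simulate one frame: scene brightness scaled by exposure, plus Gaussian sensor noise."""
    return [pixel * exposure + rng.gauss(0.0, noise_sigma) for pixel in scene]

def merge_frames(frames, exposures):
    """Exposure-weighted average of per-frame brightness estimates.

    Each frame is first divided by its exposure to estimate the true scene
    brightness; longer exposures get proportionally more weight because
    their estimates are less noisy.
    """
    total_weight = sum(exposures)
    merged = []
    for i in range(len(frames[0])):
        weighted_sum = sum(
            exposure * (frame[i] / exposure)
            for frame, exposure in zip(frames, exposures)
        )
        merged.append(weighted_sum / total_weight)
    return merged

def rms_error(estimate, scene):
    """Root-mean-square difference between an estimate and the true scene."""
    squared = sum((e - s) ** 2 for e, s in zip(estimate, scene))
    return (squared / len(scene)) ** 0.5

rng = random.Random(11)                                   # fixed seed for repeatability
scene = [0.02 + 0.001 * (i % 50) for i in range(2000)]    # a dim, hypothetical scene
exposures = [1.0, 2.0, 4.0, 2.0, 1.0]                     # hypothetical relative exposure times
frames = [capture_frame(scene, e, 0.01, rng) for e in exposures]

single = [pixel / exposures[0] for pixel in frames[0]]    # one short exposure on its own
merged = merge_frames(frames, exposures)

single_error = rms_error(single, scene)
merged_error = rms_error(merged, scene)
print(f"single frame error: {single_error:.5f}")
print(f"merged frame error: {merged_error:.5f}")
```

The merged result should land noticeably closer to the true scene than any single short exposure. A real night mode also has to align frames, reject moving subjects, and handle blown-out highlights, none of which this toy example attempts.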

Compared to the Pixel 3, the iPhone 11’s night mode does better justice to shadows and colors. The new Pixel 4 might be able to beat Apple at this game, but it’s not going to be an easy job.

The iPhone 11’s night mode promises to get you a great shot if the scene is well lit. For bonus fun, try shooting a still object with moving stuff around it; you’ll get a nice motion blur effect. Oh, and don’t use flash to try and get the same effect.

Portrait mode

Smartphone makers introduced portrait mode a couple of years ago, first in flagships, and later in mid-range and budget phones. While they made slight improvements in areas of edge detection and shallow depth of field to create the bokeh effect, the portrait mode always had some faults such as blurring faces or lacking support for objects and pets.

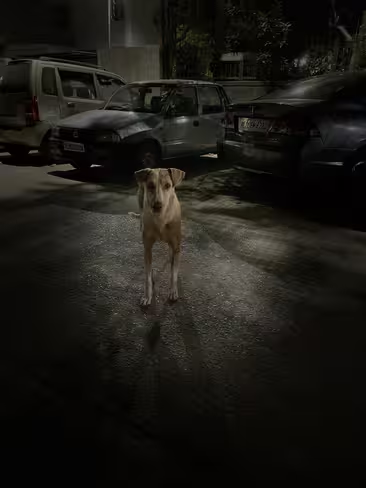

Last month, when Apple announced that the new iPhones would add support for objects and pets in this mode, I’m pretty sure I (and other pet owners) had a smile on our faces.

The company has certainly improved the detection of humans; there are fewer blurred edges, and you might notice anomalies only when you zoom into the picture. Whenever I’ve taken portrait pictures of humans, I’ve seen near-perfect edge detection and photos full of detail that capture the texture and color of the skin.

Portrait mode for pets and objects is not perfect. But it’s a good start. Apple uses both the wide and ultra-wide cameras for a better perspective and a greater amount of detail in portrait photos.

Video

iPhones have always been a notch above Android phones when it comes to video. This year Apple has added the ability to capture 4K videos at 60 frames per second with a wide-angle camera as well. This gives you a chance to shoot cinematic scenes such as a football match or a drive with friends. With slow-motion mode, you can record full HD videos at 240 frames per second, and it’s quite fun.

Reaching the podium again

Just after the launch, I wrote about how the iPhone 11 is trying to overtake Android phones with these new features.

When iPhone 11 reviews came out, everyone had one thing in mind: how will it stack up against the Pixel 4? We’re yet to see detailed head-to-head comparisons, but as my colleague Napier rightly said, Google kind of missed the boat by going with a telephoto lens instead of a wide-angle one.

Plus, this time Apple has another card to play – Deep Fusion. We’ve tested it in a developer beta version, and results are quite impressive. Apple might just take the camera crown this year.
