As tech writers, we get to play with a lot of phones. But it’s not often that we get to peek behind the curtain to see how engineers work on the product. That’s why I was pretty excited when OnePlus invited us to visit its camera lab in Taiwan.

This testing lab, which is the center of OnePlus’ camera development, was established in 2014 under Simon Liu, who now heads the company’s imaging team. Carl Pei, OnePlus’ co-founder, told reporters at a briefing in Taiwan that the company’s camera philosophy is to take ‘natural’ photos that are close to what your eyes see, but that aren’t flat and ‘have some emotions.’

“We want our consumers to take the phone out of their pocket, and without fiddling with the setting take a great photo in the auto mode,” he said of the aim of OnePlus’ phone cameras.

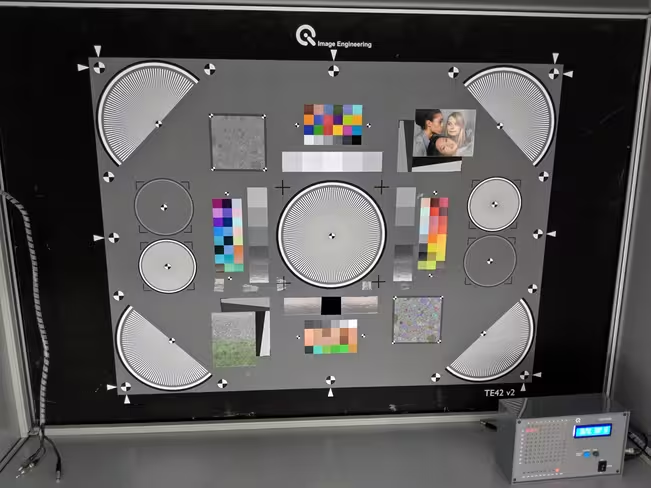

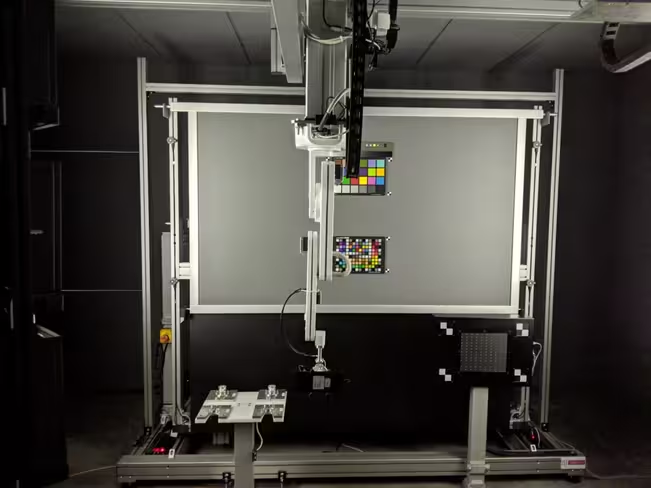

The company applies two testing methods to shape its camera hardware and software into the final product: first, an objective test that takes place in the camera lab, and then a subjective test involving multiple people, scenes, and locations. OnePlus’ camera lab in Taipei hosts a range of high-end machines and charts to test the camera’s capabilities. These machines test parameters like saturation, white balance, brightness, and autofocus speed.

For instance, the equipment in the picture below – a model of a rotating joyride and an LED machine – is used to test the camera’s autofocus.

The company also uses charts to measure ISO (light sensitivity), texture, color accuracy, and noise. You can go through types of charts used in camera testing here or here. To test different lighting conditions, the camera team uses X-Rite’s SpectraLight QC light booth, which simulates various scenarios.

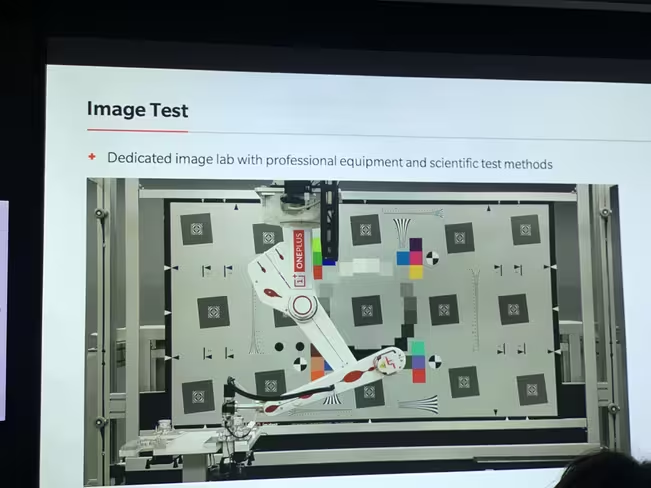

But the star of the show was the team’s automated arm setup, which is controlled by test software to capture photos of different charts without any human intervention and instantly display the results.

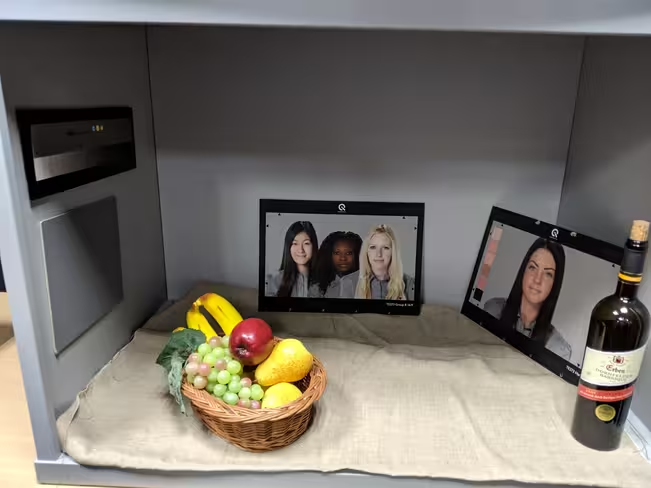

The company has also set up different mannequins to test portrait mode, and has developed its own chart (which we were not allowed to photograph because its patent is pending) to measure bokeh effects. The team said it plans to add more mannequins to test for different colors and facial hair.

Outside all these testing rooms, there’s a rig with several phones wired up that opens the camera app, takes a photo, and records speed and performance data under continuous use.

For subjective tests, the company has four locations – mainland China, Taiwan, India, and Germany – where its engineers take photos in more than 100 scenarios across different categories. This exercise is meant to measure and tune the camera’s performance in different real-life environments, color temperatures, and lighting conditions.

Since OnePlus doesn’t have the AI prowess of Google or Huawei, or the team strength of Apple or Samsung, it relies on the community for feedback. It regularly hosts meetups under its Open Ears Program, where the product team meets users to hear from them directly. Based on those suggestions, the team decides which camera features to improve or add.

The company’s product manager, Zack Zhang, told TNW in an interview that after the team works on these updates, it ships the software to employees every week for testing and review. And once it’s satisfied with the result, the software is provided to users via over-the-air updates.

Despite OnePlus’ commercial success, it remains a challenger to bigger brands, largely because it’s yet to create a camera that’s truly stunning. Compared to other mobile giants, the company’s team size is still small, and it has to rely on user feedback to drive its camera’s direction.

All the lab tests and user inputs will help it collect data over time to improve its camera. But the results of this exercise might take a couple of iterations to show up on a phone that has a great camera from the get-go.
