Over the past few weeks, I’ve written extensively about the Cambridge Analytica scandal that rocked Facebook, after it was discovered some 87 million users’ data was scraped by an analytics firm without their knowledge, for the purpose of influencing voters ahead of an election.

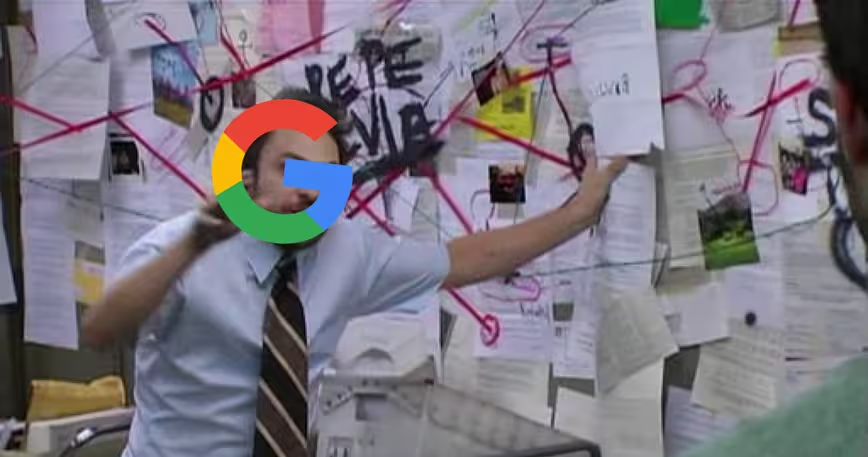

I feel that, in the heat of the moment, we may have missed out on adequately addressing the question of exactly why we should be concerned and outraged by this incident. Following a recent article in the Wall Street Journal by columnist Christopher Mims, which argues Google may have just as much data about us as Facebook, I think now’s a good time to give that a go.

So, why should we care about companies having a lot of data on us? Because it makes large numbers of us vulnerable to exploitation in different ways that can benefit companies and governments, and because the possibilities of what can be achieved with massive quantities of data are largely unknown.

It’s important to understand that all sorts of data can be collected about you when you use a web browser or a mobile phone, and a lot of that can take place without your knowledge. Heck, it happens even when you’re not signed up for or logged into some services.

With the tools available to them today, a company can craft a detailed profile on people who use the internet – and by signing up for various online services, we’re just making things easier for them. The majority of us are fundamentally blind to the terms of use stipulated by the Facebooks and Googles of our world. We can’t be realistically expected to give informed consent – but here we all are, clicking ‘I agree’ on scores of apps’ registration pages without a second thought.

This data is immensely valuable to those who know what to do with it – and that value has a lot to do with scale. The more data that a company or group has to play with, the higher its chances of achieving its goals, either by identifying a larger number of people who might be interested in what it has to say, or by figuring out exactly what they’re thinking, and speaking to their views specifically.

Let’s take the example of Russia meddling with the last US presidential election to explain this: on a personal level, you may not be immediately concerned about being targeted by a foreign government trying to influence your political opinion with fake news and misleading ads, because you’re woke and aware of such campaigns.

But there are countless others who are either less aware of such programs, more suggestible, or in circumstances that are conducive to being swayed by this kind of messaging. So it’s not just about trying to convince one person, but rather about looking at trends in data to determine which groups of people may be most susceptible to such manipulation, and bombarding large numbers of these people with misleading information to achieve some degree of success in influencing several of them at once. Unfortunately for us, this strategy works.

A company like Cambridge Analytica, which specializes in influencing political outcomes by studying voters, exists today only because data is widely available. Its business model didn’t exist a few decades ago. And if this is what a company that wasn’t on your radar is already capable of, imagine what data analysis firms will be doing five years or a decade from now.

Ultimately, we need to be more aware of the consequences of signing away the rights to our data, and be more conscious about who we give it to. But that seems like more of a reactive approach than one that would prevent companies from exploiting our information.

That’s why we should strongly consider regulating the ways companies gather, use, share, and sell our data. Governments and courts can hold companies accountable for the misuse of our data in a way that individuals can’t – and they can do so with the backing of frameworks and standards agreed upon at a national or societal level.

The way a company collects and uses data today may seem innocuous, but that company might change its ways a few years from now, with disastrous consequences for users. We shouldn’t allow private companies – which only have their own best interests in mind – to decide whether they’re in the wrong. The Cambridge Analytica-like scandal that will break in 2023 will be a lot worse than the one we’re dealing with right now.

The European Union’s GDPR is a good example of the sort of regulation that could prevent people from being exploited – it spells out harsh penalties for companies that don’t protect people’s privacy. It was, therefore, disappointing to learn that Facebook decided not to extend those protections to some 75 percent of its users worldwide.

That means that if any of those users find Facebook violating their trust over a data privacy issue, they won’t be able to take the company to court – not easily, anyway.

This is important because the web enables companies with physical headquarters in one country (and therefore legal liability only in that country) to reach people across borders and use their data without being subject to the laws of those people’s countries.

As technology advances, the value of data will continue to increase, and unless we change the way we regulate businesses, it’ll also become harder to protect our privacy. The conversations we’re now having about how companies like Facebook should operate will likely form the foundations of how they will be run in the future, and that’s why it’s important to care about who has your data, how much of it, and what they’re doing with it.
