When we think about artificial intelligence, it’s usually something involving robot overlords and the answer to how many tablespoons are in a cup. Less often do we think about its emotional intelligence, something so intrinsic to human interaction that we take it for granted.

Pamela Pavliscak, CEO of Change Sciences, took the stage at TNW Conference 2018 to discuss the “emotion revolution” we’ll see in our machines and software in the coming years. For AI and virtual assistants to make the most positive impact, they’ll need to know how to understand and behave within the framework of our very human emotional cues.

This concept isn’t new; technology companies have tried to humanize technology for ages, with varying degrees of success. One of the most infamous examples is Microsoft’s Clippy – an early example of a virtual assistant that might’ve been ahead of its time. “Clippy lacked emotional intelligence, and didn’t learn from you. That was before AI though,” says Pavliscak. Maybe if Clippy were better at knowing when you wanted advice, it wouldn’t have been such a pain.

Now that voice assistants are more intelligent, people are warming up to frequent interaction. According to Pavliscak, 37 percent of voice assistant users wish the AIs were a real person – and not just kids, she notes, although it doesn’t take much for a kid to love a robot – or even a water heater that looks like one.

“We don’t need much to humanize our technology. If it has eyes; if there’s a hint of a gesture; if it has a voice; if it has a backstory, or any kind of irregular, erratic movements… we humanize everything.” This is so pervasive that 27 percent of people aged 18-24 would have a relationship with a robot (no, not necessarily that kind of a relationship).

So then how do we leverage this innate desire to emotionally relate with AI? In part, by making AI better at understanding us.

For example, facial recognition (and interpreting the emotional data within faces) has gotten really good. Though it has some worrying applications – China’s pervasive facial recognition comes to mind – the benefits are potentially even more expansive. Researchers are studying how to use AI to recognize and help prevent road rage, and the accidents it causes.

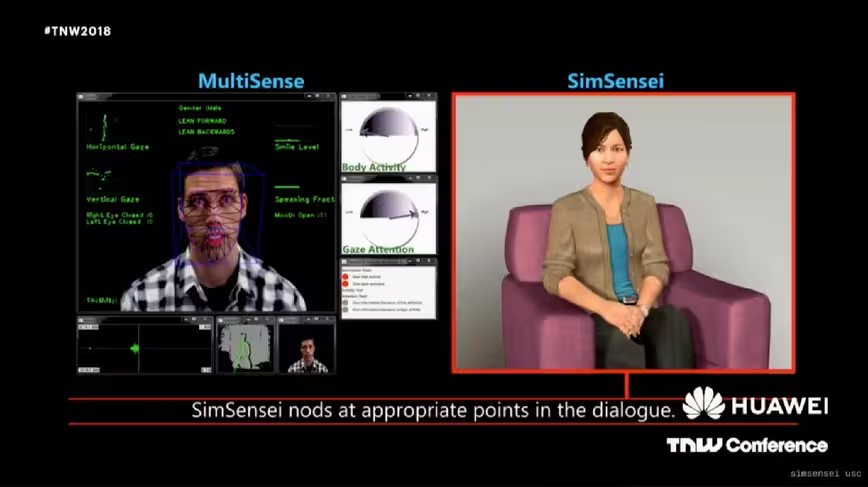

Humans are toolmakers, and in the same way a calculator helps you solve math problems, it’s possible AI of the future will help us leverage our emotions – and even understand them better. USC is developing a virtual therapist that can identify emotional triggers in patients suffering from PTSD, for instance. It’s not a stretch to imagine such a therapist would eventually be able to identify such signs as well as a human can – while also adding a degree of anonymity you can’t get with a real person.

Artist Lauren McCarthy created an app called People Keeper that would help you notice how people in your life affect your mood and stress levels. You could cut out people who have a negative impact, and embrace those who have a calming effect. That has some Black Mirror-like implications, but it also shows emotional intelligence can help people make better decisions about life and social interactions, in the same way Google Maps helps you choose the best route to your favorite taco place. It’s a real iOS app by the way, if you want to give it a go.

Emotional intelligence even has applications in gaming; a VR game called Nevermind, for instance, becomes harder the more scared or anxious you feel. It’s not a stretch to imagine future VR games will leverage your emotions in similar ways.

While humans will likely always be better at understanding some aspects of emotions, there’ll come a point where machines are better at others. Ultimately, Pavliscak envisions a future where our machines help humans become emotionally smarter too.

“Maybe, just maybe, as the emotional intelligence of the machines go up, our emotional intelligence goes up too.”

