Last night, Twitter released its draft policy on deepfakes and opened a feedback process for users. The policy is still at a very early stage, and many details, such as how manipulated photos and videos will be determined and identified, remain undefined.

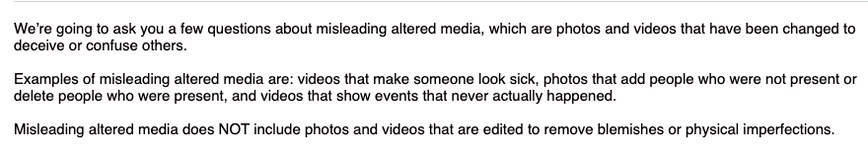

The company defines deepfakes as “any photo, audio, or video that has been significantly altered or fabricated in a way that intends to mislead people or changes its original meaning.” While the definition mostly makes sense, Twitter will need to make sure its AI doesn’t classify memes as deepfakes.

Expanding on this in its survey, the social network gives the example of a piece of media that makes someone sick (huh?). Another example of a policy violation is adding people to, or removing people from, the original piece of content.

Twitter also outlined steps it might take to flag a tweet with doctored media:

- Place a notice next to tweets that share synthetic or manipulated media.

- Warn people before they share or like tweets with synthetic or manipulated media.

- Add a link – for example, to a news article or Twitter Moment – so that people can read more about why various sources believe the media is synthetic or manipulated.

The survey asks basic questions such as “Should Twitter remove tweets with deepfakes or keep them with labels?” It also asks what the platform should do with various kinds of deepfakes that might harm someone’s physical safety, mental health, or reputation. If the company decides to adopt these labels for categorizing deepfakes, it might have to deal with a lot of disputes from users.

Damien Mason, a digital privacy advocate at ProPrivacy, a comparison site for privacy tools, said that labeling manipulated content mainly helps avoid political catastrophes:

> It is also incredibly difficult to police, as algorithms have been questionably spotty in the past when detecting synthetic media and manually flagging items is sure to be time-consuming. Labeling the material is less than half the battle and does nothing to help the true victims of such attacks. It helps to avoid political catastrophes, such as defaming government officials with doctored videos but ignores the larger scope of deepfake content creators.

So far, companies have had a hard time identifying deepfakes. In recent months, however, Google, Facebook, and Amazon have stepped up their efforts by releasing various datasets for researchers. Twitter has a huge task ahead of it when it finally shapes the policy.

You can take Twitter’s survey, available in English, Hindi, Arabic, Spanish, Portuguese, and Japanese, here. If you have more specific feedback, you can tweet it with #TwitterPolicyFeedback. Wonder who’ll sort through all those tweets.
