Reading scientific papers is a tough job. They often contain language and sections you might not understand, and not all of it will be interesting to you.

My colleague Tristan is great at working through these papers, but I’m just a novice. So I want a quick summary of a research document to decide whether it's worth dedicating more time to reading it.

Thankfully, researchers at the Allen Institute for Artificial Intelligence have developed a new model that summarizes text from scientific papers and presents it in a few sentences as a TL;DR (Too Long; Didn’t Read).

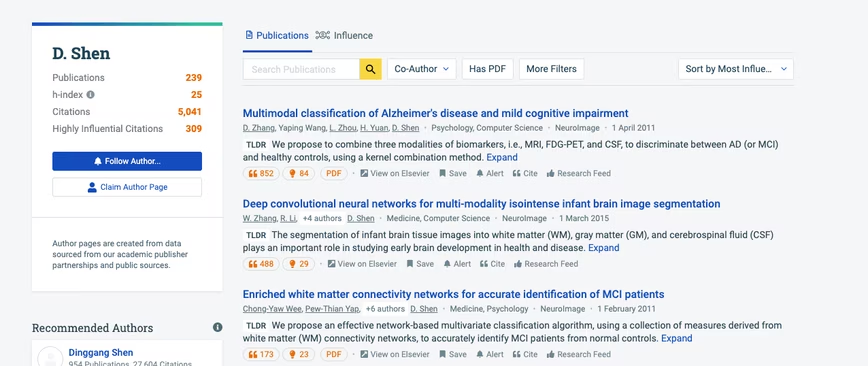

The team has rolled this model out to Semantic Scholar, the Allen Institute’s search engine for papers. Currently, you’ll only see these TL;DR summaries on computer science papers, either in search results or on an author’s page.

The AI takes the most important parts from the abstract, introduction, and conclusion sections of the paper to form the summary.

Researchers first “pre-trained” the model on the English language. Then they created a data set called SciTLDR, made up of over 5,400 summaries of computer science papers. The model was further trained on more than 20,000 titles of research papers to reduce its dependency on domain knowledge while writing a synopsis.

The trained model was able to summarize documents of over 5,000 words in just 21 words on average, which works out to a compression ratio of 238. The researchers now want to expand this model to papers in fields other than computer science.
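The compression ratio is simply the length of the source divided by the length of the summary. Here's a rough check of that figure in Python, assuming the round numbers of 5,000 and 21 words quoted above; the function name is made up for illustration and isn't from the researchers' code.

```python
def compression_ratio(source_words: int, summary_words: int) -> float:
    """Return how many source words each summary word stands in for."""
    if summary_words <= 0:
        raise ValueError("summary_words must be positive")
    return source_words / summary_words


# Assumed round figures: a 5,000-word paper and a 21-word TL;DR.
ratio = compression_ratio(5000, 21)
print(round(ratio))  # 238
```

Since the papers run to "over" 5,000 words, the real ratio for any given paper will vary around this number.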

You can try out the AI on the Semantic Scholar search engine. Plus, you can read more about the summarizing AI in this paper.
