Tesla announced last month that it’s dropping radar sensors from its Model 3 and Model Y EVs in favor of a camera-based autonomous driving system called Tesla Vision, but only for the North American market.

At first glance, Tesla’s move made no sense, and it even cost the company its safety recognition from the National Highway Traffic Safety Administration (NHTSA). But new details from the company’s Senior Director of Artificial Intelligence, Andrej Karpathy, shed at least some light on the automaker’s choice.

During his presentation at the 2021 Conference on Computer Vision and Pattern Recognition on Monday, Karpathy revealed that the reason behind the vision-only autonomous driving approach is the company’s new supercomputer.

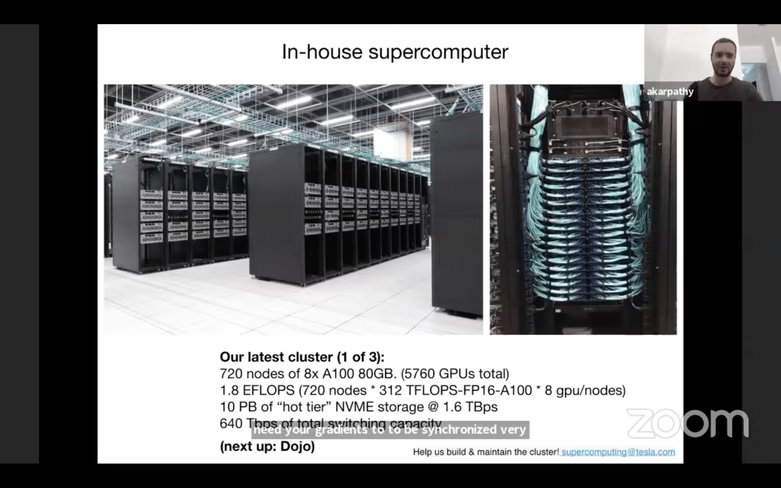

Tesla’s next-gen supercomputer has 10 petabytes of “hot tier” NVMe storage and runs at 1.6 terabytes per second, according to Karpathy. At 1.8 EFLOPS, he claimed, it might well be the fifth most powerful supercomputer in the world. Simply put, it apparently has insane speed and capacity.

Regarding its function, Karpathy commented:

“We have a neural net architecture network and we have a data set, a 1.5 petabytes data set that requires a huge amount of computing. So I wanted to give a plug to this insane supercomputer that we are building and using now. For us, computer vision is the bread and butter of what we do and what enables Autopilot. And for that to work really well, we need to master the data from the fleet, and train massive neural nets and experiment a lot. So we invested a lot into the computer.”

The supercomputer works with video from the eight cameras surrounding each vehicle, captured at 36 frames per second, which provides a tremendous amount of information about the car’s surroundings.

Elon Musk has been teasing a neural network training computer called “Dojo” for some time now.

Dojo, our training supercomputer, will be able to process vast amounts of video training data & efficiently run hypersparce arrays with a vast number of parameters, plenty of memory & ultra-high bandwidth between cores. More on this later.

— Elon Musk (@elonmusk) February 2, 2020

But Tesla’s new supercomputer isn’t Dojo, just an evolutionary step towards it, and Karpathy didn’t want to elaborate on the company’s ultimate computer project.

Overall, we can now better grasp why Tesla turned to a camera-based system for its Autopilot.

But even though the supercomputer’s abilities are impressive, I feel that the company took a big leap of faith, given that the neural network that collects and analyzes image data is still at an experimental stage. What’s most worrisome is that this experiment relies on actual human drivers, and it’s unknown what safety measures are in place for them.

If you’re interested in watching Karpathy’s full presentation, you can find it below.
