Scientists have completed the first-ever demonstration of a “plug and play” brain prosthesis controlled by a paralyzed person.

The system uses machine learning to help the individual control a computer interface with just their brain. Unlike most brain-computer interfaces (BCIs), it worked without requiring extensive daily retraining.

The study’s senior author, Karunesh Ganguly, an associate professor in the UC San Francisco Department of Neurology, described the breakthrough in a statement:

“The BCI field has made great progress in recent years, but because existing systems have had to be reset and recalibrated each day, they haven’t been able to tap into the brain’s natural learning processes. It’s like asking someone to learn to ride a bike over and over again from scratch. Adapting an artificial learning system to work smoothly with the brain’s sophisticated long-term learning schemas is something that’s never been shown before in a paralyzed person.”

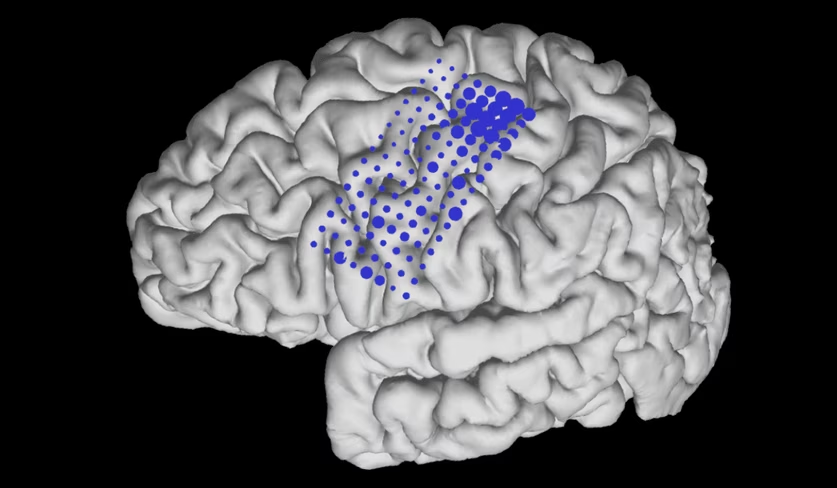

The system uses an electrocorticography (ECoG) array about the size of a Post-it note. The array is placed directly on the surface of the brain, where it monitors electrical activity from the cerebral cortex.

The researchers claim the system provides long-term, stable recordings of neural activity. This gives it an advantage over BCIs composed of sharp electrodes that penetrate the brain tissue, as these tend to shift or lose signal over time.

The team tested the system on an individual with paralysis of all four limbs, who used it to control a computer cursor on a screen. At first, the researchers asked the user to imagine moving their neck and wrist while watching the cursor move. Over time, the algorithm gradually updated itself to match the cursor’s movements to the user’s brain activity.

However, repeating this time-consuming process each day limited how much control the user could achieve. So the researchers tried a different approach: allowing the algorithm to continue updating without a daily reset.

Ganguly said this led to continuous improvements in the performance of the system:

“We found that we could further improve learning by making sure that the algorithm wasn’t updating faster than the brain could follow, a rate of about once every 10 seconds. We see this as trying to build a partnership between two learning systems, brain and computer, that ultimately lets the artificial interface become an extension of the user, like their own hand or arm.”
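To make the idea of a rate-limited, never-reset decoder concrete, here is a minimal Python sketch. It is not the study's algorithm: the linear model, the averaged error-correction update, the learning rate, and every name in it (`RateLimitedDecoder`, `run_session`) are assumptions chosen for illustration. Only the roughly 10-second update interval and the absence of a reset between sessions come from the description above.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

Vector = List[float]


@dataclass
class RateLimitedDecoder:
    """Toy linear decoder (hypothetical): cursor velocity = weights x features."""
    n_features: int
    update_interval_s: float = 10.0  # "about once every 10 seconds" per the article
    learning_rate: float = 0.5       # assumed value, for illustration only
    weights: List[Vector] = field(default_factory=list)  # 2 rows: x and y velocity
    n_updates: int = field(default=0, init=False)
    _buffer: List[Tuple[Vector, Vector]] = field(default_factory=list, init=False)
    _last_update_s: float = field(default=0.0, init=False)

    def __post_init__(self) -> None:
        if not self.weights:
            self.weights = [[0.0] * self.n_features for _ in range(2)]

    def decode(self, features: Vector) -> Vector:
        """Map one feature vector to a 2D cursor velocity."""
        return [sum(w * f for w, f in zip(row, features)) for row in self.weights]

    def observe(self, t_s: float, features: Vector, intended: Vector) -> None:
        """Buffer a sample; apply one averaged update once the interval has elapsed."""
        self._buffer.append((features, intended))
        if t_s - self._last_update_s >= self.update_interval_s:
            self._apply_update()
            self._last_update_s = t_s

    def _apply_update(self) -> None:
        n = len(self._buffer)
        grads = [[0.0] * self.n_features for _ in range(2)]
        # Average the prediction error over everything seen since the last update.
        for features, intended in self._buffer:
            predicted = self.decode(features)
            for axis in range(2):
                err = intended[axis] - predicted[axis]
                for j, f in enumerate(features):
                    grads[axis][j] += err * f / n
        for axis in range(2):
            for j in range(self.n_features):
                self.weights[axis][j] += self.learning_rate * grads[axis][j]
        self._buffer.clear()
        self.n_updates += 1


def run_session(decoder: RateLimitedDecoder, start_s: int, duration_s: int) -> float:
    """Feed one synthetic sample per second; return the session's mean squared error."""
    total = 0.0
    for i in range(duration_s):
        # Synthetic data: feature 0 means "move right", feature 1 means "move up".
        features = [1.0, 0.0] if i % 2 == 0 else [0.0, 1.0]
        intended = list(features)
        predicted = decoder.decode(features)
        total += sum((a - b) ** 2 for a, b in zip(intended, predicted))
        decoder.observe(float(start_s + i), features, intended)
    return total / duration_s


if __name__ == "__main__":
    decoder = RateLimitedDecoder(n_features=2)
    first = run_session(decoder, start_s=0, duration_s=60)
    # Same decoder object, no reset: the second session starts from learned weights.
    second = run_session(decoder, start_s=60, duration_s=60)
    print(f"session 1 MSE: {first:.3f}, session 2 MSE: {second:.3f}")
```

In this toy setup the second session starts from the weights learned in the first. Creating a fresh `RateLimitedDecoder` for each session would instead mimic the daily reset the researchers moved away from.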

As the trial progressed, the user’s brain began to amplify the patterns of neural activity that moved the cursor. Eventually, they developed an ingrained mental “model” for controlling the interface. The researchers then turned off the algorithm’s updates, so the participant could use the system without requiring daily adjustments.

After the system maintained its performance for 44 days without retraining or daily practice, the researchers began adding abilities to the BCI, such as “clicking” a virtual button, without performance dipping.

Ganguly now hopes to use the ECoG recording in more complex robotic systems, including artificial limbs.

“We’ve always been mindful of the need to design technology that doesn’t end up in a drawer, so to speak, but which will actually improve the day-to-day lives of paralyzed patients,” he said. “These data show that ECoG-based BCIs could be the foundation for such a technology.”

You can read the research paper in the journal Nature Biotechnology.
