A team of researchers from UC Santa Barbara and Intel took thousands of conversations from the scummiest communities on Reddit and Gab and used them to develop and train AI to combat hate speech. Finally, r/The_Donald and other online cesspools are doing something useful.

The system was developed after the researchers created a novel dataset featuring thousands of conversations specially curated to ensure they’d be chock full of hate speech. While numerous studies have approached the hate speech problem on both Twitter and Facebook, Reddit and Gab are understudied and have fewer high-quality datasets available.

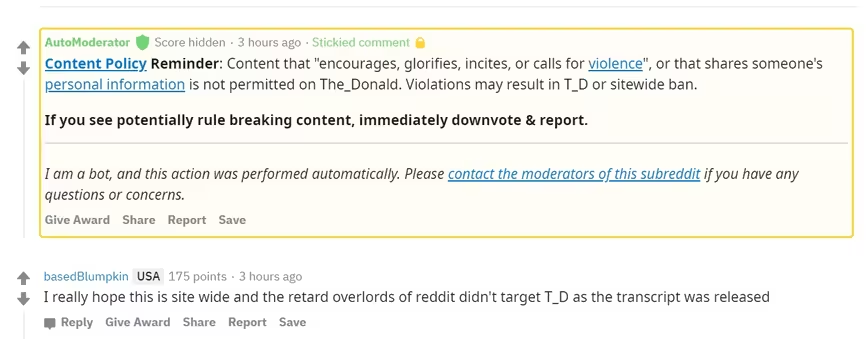

According to the team’s research paper, it wasn’t hard to find enough posts to get started. They just grabbed all of Gab’s posts from last October, and the Reddit posts were taken from the usual suspects:

> To retrieve high-quality conversational data that would likely include hate speech, we referenced the list of the whiniest most low-key toxic subreddits… r/DankMemes, r/Imgoingtohellforthis, r/KotakuInAction, r/MensRights, r/MetaCanada, r/MGTOW, r/PussyPass, r/PussyPassDenied, r/The_Donald, and r/TumblrInAction.

A tip of the hat to Vox’s Justin Caffier for compiling the list of Reddit’s “whiniest, most low-key toxic” subreddits. These are the kind of groups that pretend they’re focused on something other than spreading hate, but in reality they’re havens for such activity.

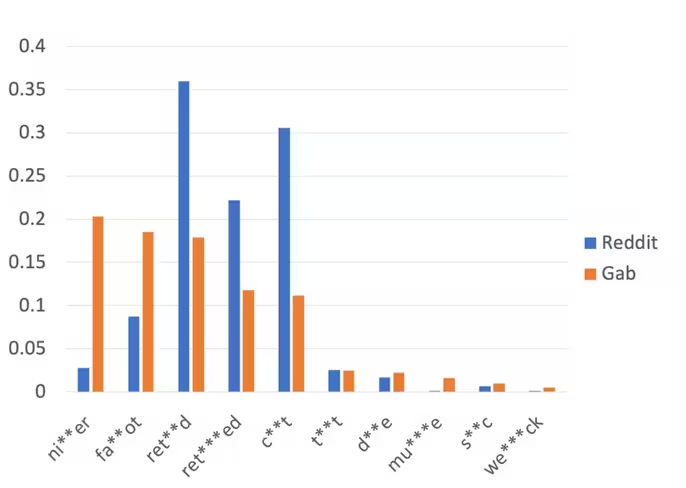

After collecting more than 22,000 comments from Reddit and over 33,000 from Gab, the researchers learned that, though the bigots on both are equally reprehensible, they go about their bigotry in different ways:

> The Gab dataset and the Reddit dataset have similar popular hate keywords, but the distributions are very different. All the statistics shown above indicate that the characteristics of the data collected from these two sources are very different, thus the challenges of doing detection or generative intervention tasks on the dataset from these sources will also be different.
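To make "similar keywords, different distributions" concrete, here is a minimal sketch of how two sites can share the same vocabulary while using it in very different proportions. Everything in it is an assumption for illustration: the placeholder terms, the toy posts, and the choice of total variation distance as the comparison are ours, not taken from the paper.

```python
from collections import Counter

def keyword_distribution(posts, keywords):
    """Return each keyword's share of all keyword hits in a list of posts."""
    counts = Counter()
    for post in posts:
        for token in post.lower().split():
            if token in keywords:
                counts[token] += 1
    total = sum(counts.values())
    return {k: (counts[k] / total if total else 0.0) for k in keywords}

def total_variation(p, q):
    """Half the sum of absolute differences: 0 means identical, 1 means disjoint."""
    return 0.5 * sum(abs(p[k] - q[k]) for k in p)

# Hypothetical placeholder terms and toy posts, standing in for real data.
KEYWORDS = ["term_a", "term_b", "term_c"]
site_one = ["term_a term_a term_b", "term_a term_c"]
site_two = ["term_c term_c term_b", "term_c term_a"]

p = keyword_distribution(site_one, KEYWORDS)
q = keyword_distribution(site_two, KEYWORDS)
distance = total_variation(p, q)
print(p, q, distance)
```

Both toy sites use all three terms, yet their most common term differs, which is the kind of gap that could make a model tuned on one site behave oddly on the other.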

These differences are what make it hard for social media sites to intervene in real time: there simply aren’t enough humans to keep up with the flow of hate speech. The researchers decided to try a different route: automating intervention. They took their giant folder full of hate speech and sent it to a legion of Amazon Mechanical Turk workers to label. Once the individual instances of hate speech were identified, they asked the workers to come up with phrases that an AI could use to deter users from posting similar hate speech in the future. The researchers then ran this dataset and its database of interventions through various machine learning and natural language processing systems and created a sort of prototype for an online hate speech intervention AI.

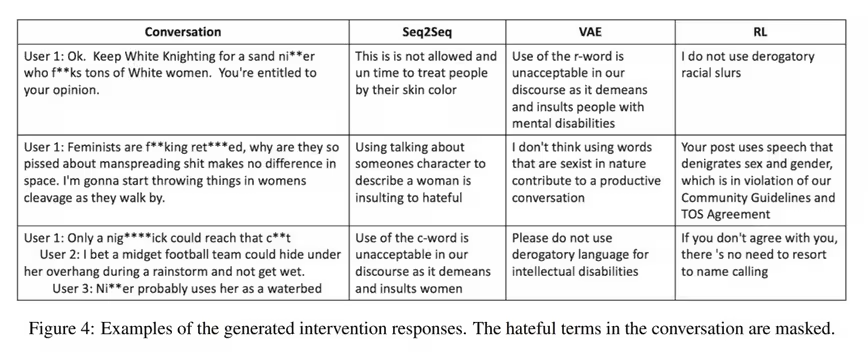

It turns out, the results are astounding! But they’re not ready for prime time yet. The system, in theory, should detect hate speech and immediately send a message to the poster letting them know why they shouldn’t post things that are obviously hate speech. This relies on more than just keyword detection – in order for the AI to work it has to get the context right.
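A rough sketch shows why keyword matching alone falls short. This is not the researchers' system, just a naive filter built on assumptions: the flagged terms are hypothetical placeholders and the three posts are invented for illustration.

```python
import re

# Hypothetical placeholder terms standing in for real slurs.
FLAGGED_TERMS = {"slur_a", "slur_b"}

def keyword_flag(post: str) -> bool:
    """Flag a post if any token exactly matches a flagged term."""
    tokens = re.findall(r"[a-z_]+", post.lower())
    return any(token in FLAGGED_TERMS for token in tokens)

posts = [
    "you are such a slur_a",                   # abusive: flagged
    "calling someone a slur_a is never okay",  # condemns the term: flagged anyway
    "people like you don't belong here",       # hostile but keyword-free: missed
]

flags = [keyword_flag(post) for post in posts]
print(flags)
```

The second post is a false positive and the third is a miss, and neither can be fixed by tweaking the word list. Telling them apart takes the surrounding context.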

If, for example, you referred to someone by an epithet indicative of hate speech, the AI should respond with something like “It’s not okay to refer to women by terms meant to demean and belittle based solely on gender” or “I understand your frustration, but using hateful language towards an individual based on their race is unacceptable.”

Instead, however, it tends to get thrown off pretty easily. Apparently it responds to just about everything anyone on Gab says by reminding them that the word “retarded,” which it refers to as the “R-word,” is unacceptable – even in conversations where nobody’s used it.

The researchers chalk this up to the unique distribution of Gab’s hate-speech — the majority of Gab’s hate-speech involved disparaging the disabled. The system doesn’t have the same problem with Reddit, but it still spits out useless interventions such as “I don’t use racial slurs” and “If you don’t agree with you there’s no reason to resort to name-calling” (that’s not a typo).

Unfortunately, like most early AI projects, it’s going to take a much, much larger training dataset and a lot of development before this solution is good enough to actually intervene. But there’s definitely hope that properly concocted responses designed by intervention experts could curtail some online hate speech, especially if coupled with a machine learning system capable of detecting hate speech and its context with high levels of accuracy.

Luckily for the research, there’s no shortage of cowards spewing hate-speech online. Keep talking, bigots — we need more data.
