Mozilla is investigating YouTube’s algorithmic controls — and wants you to help.

The non-profit has revamped its RegretsReporter tool to examine unwanted YouTube recommendations. The updated browser extension will explore whether the platform truly allows users to reject recommendations.

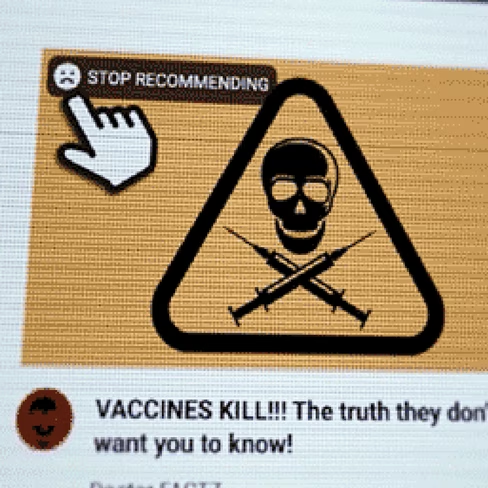

YouTube’s algorithmic controls include buttons such as “Not interested,” “Dislike,” and “Don’t recommend channel.” Your choices train YouTube’s algorithm to not surface similar content.

RegretsReporter was designed to determine whether the controls work as advertised.

The extension adds a “Stop Recommending” button to the thumbnail of each video that’s recommended or watched. Mozilla says it will use the data to assess whether YouTube is listening to feedback.

Mozilla says that YouTube doesn’t give independent researchers access to any data like this.

“Countless investigations have revealed how YouTube’s algorithm sends people down harmful rabbit holes,” said Brandi Geurkink, Mozilla’s senior manager of advocacy, in a statement.

“Meanwhile, YouTube has failed to address this problem, remaining opaque about how its recommendation AI — and its algorithmic controls — works. Our research will determine if this feature performs as intended, or if it’s the equivalent of an elevator ‘door close’ button — purely cosmetic.”

We’ve asked YouTube for a response and will update this piece if we hear back from the company.

RegretsReporter was initially used to flag harmful recommendations. In July, Mozilla said the extension showed that YouTube recommends videos that violate the platform’s own content policies.

The study found that 71% of “regrettable” videos were actively recommended by YouTube’s algorithm.

The update extends the extension’s focus to user controls.

“This extension also makes a statement about the role AI plays in consumer technology,” said Geurkink. “Just like privacy controls should be clear and accessible, so should algorithmic controls.”
