YouTube’s recommendation algorithm drives more than 70% of the videos we watch on the site. But its suggestions have attracted criticism from across the spectrum.

A developer who’s worked on the system said last year that it’s “toxic” and pushes users towards conspiracy videos, but a recent study found it favors mainstream media channels and actively discourages viewers from watching radical content.

Mine, of course, suggests videos on charitable causes, doctoral degrees, and ethical investment opportunities. But other users receive less noble recommendations.

If you’re one of them, a new browser extension from Mozilla could offer you some insights into the horrors lurking “Up next.”


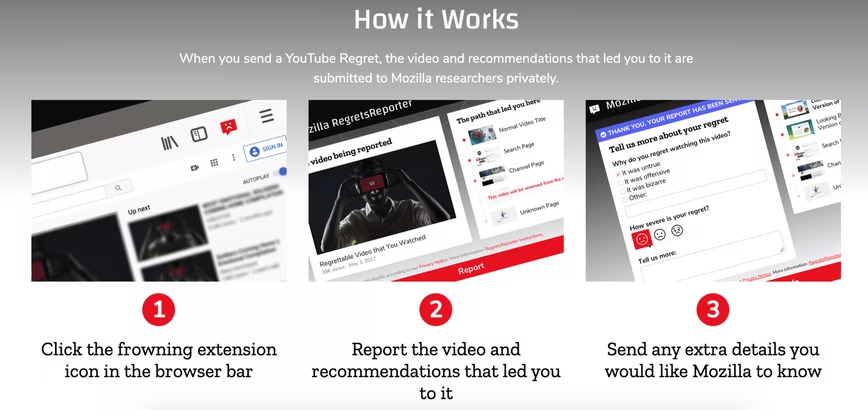

After installing RegretsReporter and playing a YouTube video, you can click the frowning face icon in your browser to report the video, the recommendations that led you to it, and any extra details on “your regret.” Mozilla researchers will then search for patterns that led to the recommendations.

In a blog post, Ashley Boyd, Mozilla’s VP of Advocacy & Engagement, gave three examples of what the extension could uncover:

- What types of recommended videos lead to racist, violent, or conspiratorial content?
- Are there patterns in terms of frequency or severity of harmful content?
- Are there specific YouTube usage patterns that lead to harmful content being recommended?

“We ask users not to modify their YouTube behavior when using this extension,” said Boyd. “Don’t seek out regrettable content. Instead, use YouTube as you normally do. That is the only way that we can collectively understand whether YouTube’s problem with recommending regrettable content is improving, and which areas they need to do better on.”

Boyd said Mozilla built the extension after spending a year pressuring YouTube to give independent researchers access to its recommendation data. The company has acknowledged that the algorithms can lead users to harmful content, but has yet to release the data.

Rather than continue waiting, Mozilla wants to “turn YouTube users into watchdogs” who will offer their recommendation data for investigation. The company says it will share the findings publicly, and hopes YouTube will use the information to improve its products.

A YouTube spokesperson said the company welcomed more research on this front, but questioned Mozilla’s methodology for the project:

“The goal of our recommendation system is to connect users with content they love, and on any given day, more than 200 million videos are recommended on the homepage alone,” the spokesperson said. “We are always interested to see research on these systems and exploring ways to partner with researchers even more closely. However, it’s hard to draw broad conclusions from anecdotal examples, and we update our recommendations systems on an ongoing basis to improve the experience for users. For example, over the past year alone, we’ve launched over 30 different changes to reduce recommendations of borderline content. Thanks to this change, watchtime this type of content gets from non-subscribed recommendations has dropped by over 70% in the US.”

Mozilla promises that all the data it collects is linked to a randomly generated user ID, rather than your YouTube account, and that no one else will have access to the raw data. So your secret love of Alex Jones should be safe for now.

Alternatively, if you’d prefer to block the toxic temptations served up by the algorithm, you can use another Chrome extension called Nudge to remove YouTube recommendations.

Happy streaming!

Updated September 17, 5PM CET: Response from YouTube added.
