Slowly but surely, work on self-driving cars is progressing. Crashes still happen, tragic accidents come to pass every once in a while, and autonomous vehicles still make stupid mistakes that the most novice human drivers would avoid.

But eventually, scientists and researchers will teach our cars to see the world and drive the streets at a level that equals or exceeds the skills of most human drivers.

Distracted driving and DUI will become a thing of the past. Roads will become safer when humans are removed from behind the steering wheel. Accidents will become very rare happenings.

But accidents will happen, and the question is, how should self-driving cars make decisions when a fatal accident and loss of life is inevitable? As it happens, we can’t make a definite decision.

This is what a four-year-long online survey by the MIT Media Lab shows. Called Moral Machine, MIT’s test presents participants with 13 different driving scenarios in which the driver must make a choice that will inevitably result in the loss of life of passengers or pedestrians.

For instance, in one scenario, the driver must choose between running over a group of pedestrians or changing direction and hitting an obstacle that will result in the death of the passengers. In other, more complicated scenarios, the participant must choose between two groups of pedestrians that differ in numbers, age, gender, and social status.

The results of the research, which MIT published in a paper in the scientific journal Nature, show that preferences and decisions differ based on culture, economic and social conditions, and geographical location.

For instance, participants from China, Japan, and South Korea were more likely to spare the lives of the elderly over the young (the researchers hypothesize that this is because these countries place greater emphasis on respecting the elderly).

In contrast, participants from countries with individualist cultures, like the United States, Canada, and France, were more inclined to spare young people.

All this brings us back to self-driving cars. How should a driverless car decide in a situation where human decisions widely diverge?

What driverless cars can do

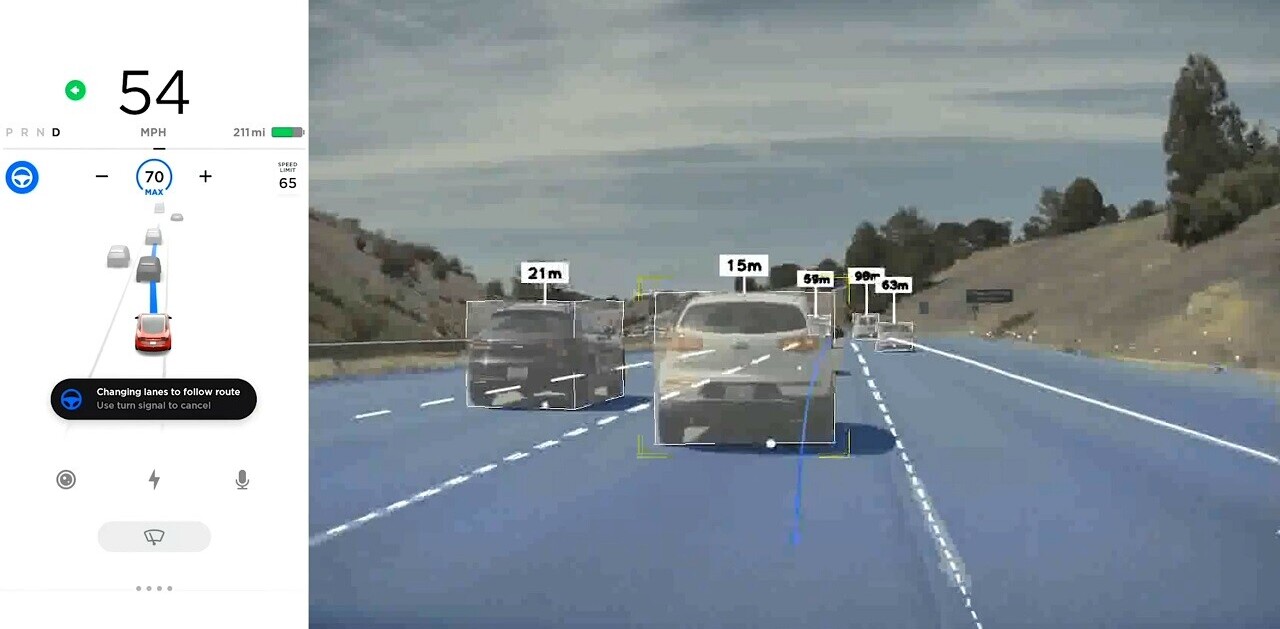

Driverless cars have some of the most advanced hardware and software technologies. They use sensors, cameras, lidars, radars, and computer vision to evaluate and make sense of their surroundings and make driving decisions.

As the technology develops, our cars will be able to make decisions in split seconds, perhaps much faster than the most skilled human drivers. This means that in the future, a driverless car will be able to make an emergency stop 100 percent of the time when a pedestrian jumps in front of it on a dark and misty night.

But that doesn’t mean self-driving cars make decisions at the same level as human drivers do. Basically, they’re powered by narrow artificial intelligence, technologies that can mimic behavior that resembles human decisions, but only on the surface.

More specifically, self-driving cars use deep learning, a subset of narrow AI that is especially good at comparing and classifying data.

You train a deep learning algorithm with enough labeled data and it will be able to classify new information and decide what to do with it based on previous data. In the case of self-driving cars, if you provide it with enough samples of road conditions and driving scenarios, it will be able to know what to do when, say, a small child suddenly runs into the street after her ball.
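To make the idea of learning from labeled data concrete, here is a minimal sketch in Python. It is not deep learning and not how any real self-driving system works: a much simpler nearest-centroid classifier stands in for the neural network, and the features (distance to an object, its speed toward the lane), the sample values, and the "brake" and "continue" labels are all made up for illustration.

```python
from collections import defaultdict
from math import dist

# Hypothetical labeled samples, invented for this sketch:
# (distance to object in meters, object's speed toward the lane in m/s) -> action
TRAINING_DATA = [
    ((5.0, 2.0), "brake"),
    ((8.0, 3.0), "brake"),
    ((6.0, 1.5), "brake"),
    ((40.0, 0.0), "continue"),
    ((55.0, 0.5), "continue"),
    ((60.0, 0.0), "continue"),
]


def train(samples):
    """Average the feature vectors of each label into one centroid per label."""
    grouped = defaultdict(list)
    for features, label in samples:
        grouped[label].append(features)
    return {
        label: tuple(sum(column) / len(rows) for column in zip(*rows))
        for label, rows in grouped.items()
    }


def classify(centroids, features):
    """Return the label whose centroid is closest to the new observation."""
    return min(centroids, key=lambda label: dist(centroids[label], features))


centroids = train(TRAINING_DATA)

# A new, unseen observation: an object 7 m away moving toward the lane at 2.5 m/s.
decision = classify(centroids, (7.0, 2.5))
print(decision)  # prints "brake"
```

The classifier only matches the new observation against patterns it has already seen. It can say "brake," but it has no notion of why braking matters, which is the limitation the rest of this post is concerned with.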

To some degree, deep learning is contested as being too rigid and shallow. Some scientists believe that some problems simply can’t be solved with deep learning, no matter how much data you throw at them and how much training the AI algorithms go through. The jury is still out on whether detecting and responding to road conditions is one of them.

Whether deep learning will become good enough to respond to all road conditions, or a combination of other technologies will crack the code and enable cars to safely navigate through different traffic and road conditions, remains to be seen. But we’re almost certain it will happen, sooner or later.

But while advances in sensors and machine learning will enable driverless cars to avoid obstacles and pedestrians, they still won’t help our cars decide which life is worth saving more than others. Here, no amount of pattern-matching and statistics will help you decide. What you’re missing here is responsibility.

The difference between humans and AI

We’ve discussed the differences between human and artificial intelligence comprehensively in these pages. However, in this post, I would like to focus on a specific aspect of human intelligence that makes us different from AI.

We humans acknowledge and embrace our shortcomings. Our memory fades, we mix up our facts, we’re not fast at crunching numbers and processing information, and both our physical and mental reactions slow to a crawl as we age. In contrast, AI algorithms never age, never mix up or forget facts, and can process information at lightning-fast speeds.

However, we humans can make decisions on incomplete data. We can decide based on common sense, culture, ethical values, and our beliefs. But more importantly, we can explain the reasoning behind our decisions and defend them.

This explains the large differences between the choices that the participants in MIT Media Lab’s test made. We also have a conscience, and we can bear the consequences of our decisions.

For instance, in 2010, a woman in Canada’s Quebec province stopped her car in the middle of a highway to save a family of ducks that were crossing the road.

Shortly after, a motorcycle crashed into her car, and both people riding it died. The driver of the car went to court and was found guilty on two counts of criminal negligence causing death and two counts of dangerous driving causing death. She was eventually sentenced to 90 days in jail, 240 hours of community service, and a 10-year driving ban.

AI algorithms can’t accept responsibility for their decisions and can’t go to court for the mistakes they make, which should disqualify them from roles in which they make life-and-death decisions.

When a self-driving car accidentally hits a pedestrian, we know who to hold responsible: the developer of the technology. We also (almost) know what to do: train the AI models to handle edge cases that hadn’t been taken into consideration.

Who is responsible for deaths caused by driverless cars?

But who do you hold to account when a driverless car kills a pedestrian not because of an error in its deep learning algorithms, but as the result of the correct functionality of its system? The car isn’t sentient and can’t assume responsibility for its actions, even if it could explain them.

If the developer of the AI algorithms is held responsible, then the company’s representatives would have to appear in court for every death that their cars cause.

Such a measure would probably hamper innovation in machine learning and the AI industry in general, because no developer can guarantee that their driverless cars will function perfectly 100 percent of the time. Consequently, tech companies would become reluctant to engage in self-driving car projects to avoid the legal costs and complications.

What if we held the car manufacturer to account for casualties caused by the driverless tech tacked onto their vehicles? Again, car manufacturers would have to answer for every accident their cars are involved in, even if they don’t have a full understanding of the technology they have integrated into their vehicles.

Nor can we hold the passengers to account, because they have no control over the decisions the car makes. Doing so would only prod people to avoid using driverless cars.

In some domains, such as health care, recruitment, and criminal justice, deep learning algorithms can function as augmented intelligence. This means that instead of automating critical decisions, they provide insights and suggestions and leave the decisions to human experts who can assume responsibility for their actions.

Unfortunately, that isn’t possible with driverless cars, because turning over the control to a human a split second before a collision will be of no use.

How should driverless cars deal with life-and-death situations?

To be fair, the scenarios set forth by the MIT Media Lab test are very rare. Most drivers will never find themselves in such situations in their entire lives. Nonetheless, the mere fact that millions of people from more than 200 countries have taken the test shows how important even these rare events are.

Some experts suggest that the solution to preventing inevitable pedestrian deaths is to regulate the pedestrians themselves or at least teach them to change their behavior around self-driving cars.

Unlike AI developers, car manufacturers, and passengers, pedestrians are the only humans who can control the outcome of individual scenarios where driverless cars and pedestrians find themselves in tight situations. They can prevent those situations from happening in the first place.

This means that, for instance, governments would set rules and regulations that define how pedestrians must behave around driverless cars. This would enable developers to define clear functionalities for their cars and hold pedestrians responsible if they break the rules. This would be the shortest path to avoiding or minimizing situations in which human death is inevitable.

Not everyone is convinced that placing the responsibility on the shoulders of pedestrians is the right approach. This way, they believe, our roads would become no different from railroads, where pedestrians and vehicles take full responsibility for accidents, and trains and their operators can’t be held to account for railway casualties.

Another solution would be to put up physical safeguards and barriers that prevent pedestrians from entering roads and spaces where self-driving cars are moving. This would remove the problem altogether. An example appears in the sci-fi movie Minority Report.

And maybe these problems will be a thing of the past by the time self-driving cars become the norm. The transition from horses and carts to automobiles created an upheaval in many aspects of life that weren’t directly related to commuting. We have yet to learn how driverless cars will affect regulations, city infrastructure, and behavioral norms.

This story is republished from TechTalks, the blog that explores how technology is solving problems… and creating new ones.
