Google published a research blog post on Tuesday about a new compression algorithm for AI models. Within hours, memory stocks were falling. Micron dropped 3 per cent, Western Digital lost 4.7 per cent, and SanDisk fell 5.7 per cent, as investors recalculated how much physical memory the AI industry might actually need.

The algorithm is called TurboQuant, and it addresses one of the most expensive bottlenecks in running large language models: the key-value cache, a high-speed data store that holds context information so the model does not have to recompute it with every new token it generates. As models process longer inputs, the cache grows rapidly, consuming GPU memory that could otherwise be used to serve more users or run larger models. TurboQuant compresses the cache to just 3 bits per value, down from the standard 16. According to Google’s benchmarks, that reduces the cache’s memory footprint by at least six times with no measurable loss in accuracy.
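As a rough illustration of the arithmetic, the sketch below estimates key-value cache size at 16 and 3 bits per value. The model shape (32 layers, 8 key-value heads, 128-dimensional heads, a 128,000-token context) is a hypothetical example, not a configuration from Google's paper, and the formula ignores any per-block metadata.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, bits_per_value, batch=1):
    """Approximate KV cache size in bytes for one model instance."""
    # Factor of 2: one key vector and one value vector per token, head, and layer.
    values = 2 * layers * kv_heads * head_dim * seq_len * batch
    return values * bits_per_value / 8

# Hypothetical model shape, chosen only for illustration.
cfg = dict(layers=32, kv_heads=8, head_dim=128, seq_len=128_000)

fp16 = kv_cache_bytes(bits_per_value=16, **cfg)
q3 = kv_cache_bytes(bits_per_value=3, **cfg)

print(f"16-bit cache: {fp16 / 2**30:.2f} GiB")
print(f" 3-bit cache: {q3 / 2**30:.2f} GiB")
print(f"ratio from bit widths alone: {fp16 / q3:.2f}x")
```

The raw bit-width ratio here is 16/3, or about 5.3. The "at least six times" figure is Google's reported number, which this sketch does not attempt to reproduce.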

The paper, which will be presented at ICLR 2026, was authored by Amir Zandieh, a research scientist at Google, and Vahab Mirrokni, a vice president and Google Fellow, along with collaborators at Google DeepMind, KAIST, and New York University. It builds on two earlier papers from the same group: QJL, published at AAAI 2025, and PolarQuant, which will appear at AISTATS 2026.

How it works

TurboQuant’s core innovation is eliminating the overhead that makes most compression techniques less effective than their headline numbers suggest. Traditional quantization methods reduce the size of data vectors but must also store additional constants: normalization values that the system needs in order to decompress the data accurately. These constants typically add one or two extra bits per number, partially undoing the compression.
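The size of that overhead is easy to work out. A minimal sketch, assuming each block of values stores two 16-bit constants (a scale and an offset, a common layout in block-wise quantizers but an assumption here, as are the block sizes):

```python
def effective_bits(bits_per_value, block_size, constants_per_block=2, constant_bits=16):
    """Bits actually stored per value once per-block constants are amortized."""
    overhead = constants_per_block * constant_bits / block_size
    return bits_per_value + overhead

for block_size in (16, 32, 64, 128):  # hypothetical block sizes
    eff = effective_bits(3, block_size)
    print(f"block of {block_size:3d}: {eff:.2f} bits per value, "
          f"{16 / eff:.2f}x smaller than 16-bit")
```

Under these assumptions, blocks of 32 and 16 values add one and two extra bits per number respectively, so a nominal 3-bit format ends up storing 4 or 5 bits per value.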

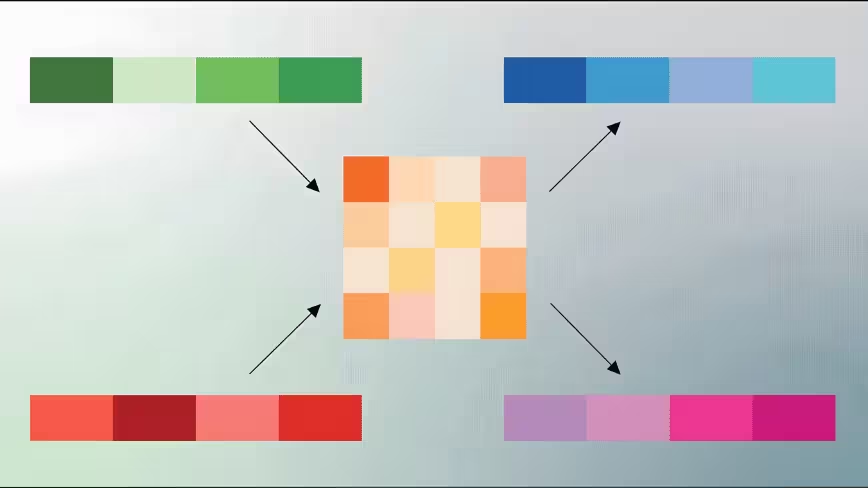

TurboQuant avoids this through a two-stage process. The first stage, called PolarQuant, converts data vectors from standard Cartesian coordinates into polar coordinates, separating each vector into a magnitude and a set of angles. Because the angular distributions follow predictable, concentrated patterns, the system can skip the expensive per-block normalization step entirely. The second stage applies QJL, a technique based on the Johnson-Lindenstrauss transform, which reduces the small residual error from the first stage to a single sign bit per dimension. The combined result is a representation that uses most of its compression budget on capturing the original data’s meaning and a minimal residual budget on error correction, with no overhead wasted on normalization constants.
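The two-stage structure can be sketched in a few lines. This is an illustration under loud assumptions, not Google's implementation: stage one below is plain grid rounding standing in for PolarQuant, and stage two is a generic sign-bit Johnson-Lindenstrauss sketch of the residual. All function names are hypothetical, and the number of projections `m` is set well above the vector dimension `d` only to make the demonstration stable, whereas the article describes one sign bit per dimension.

```python
import math
import random

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def coarse_quantize(v, step=0.5):
    """Stand-in for stage one: round each coordinate to a grid (NOT PolarQuant)."""
    return [step * round(x / step) for x in v]

def sign_sketch(v, proj):
    """Stage two: keep the sign of each random projection of v, plus v's norm."""
    signs = [1 if dot(row, v) >= 0 else -1 for row in proj]
    return signs, math.sqrt(dot(v, v))

def estimate_dot(q, signs, norm, proj):
    """Estimate <q, v> from v's sign sketch; q is projected but not quantized."""
    total = sum(dot(row, q) * s for row, s in zip(proj, signs))
    # sqrt(pi/2) * norm undoes the scaling lost by keeping only signs.
    return math.sqrt(math.pi / 2) * norm * total / len(proj)

rng = random.Random(0)
d, m = 64, 2048  # assumed sizes for the demo
key = [rng.gauss(0, 1) for _ in range(d)]
query = [rng.gauss(0, 1) for _ in range(d)]
proj = [[rng.gauss(0, 1) for _ in range(d)] for _ in range(m)]  # Gaussian projection

key_hat = coarse_quantize(key)                      # stage one
residual = [k - kh for k, kh in zip(key, key_hat)]  # what stage one missed
signs, r_norm = sign_sketch(residual, proj)         # stage two

exact = dot(query, key)
stage1_only = dot(query, key_hat)
two_stage = stage1_only + estimate_dot(query, signs, r_norm, proj)

print(f"exact inner product: {exact:.3f}")
print(f"stage one only:      {stage1_only:.3f}")
print(f"with sign sketch:    {two_stage:.3f}")
```

The design point this illustrates is that the residual is stored as nothing but signs and a single norm, yet the attention score (an inner product between a query and a key) can still be corrected with an estimate that is unbiased in expectation over the random projection.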

Google tested TurboQuant across five standard benchmarks for long-context language models, including LongBench, Needle in a Haystack, and ZeroSCROLLS, using open-source models from the Gemma, Mistral, and Llama families. At 3 bits, TurboQuant matched or outperformed KIVI, the current standard baseline for key-value cache quantization, which was published at ICML 2024. On needle-in-a-haystack retrieval tasks, which test whether a model can locate a single piece of information buried in a long passage, TurboQuant achieved perfect scores while compressing the cache by a factor of six. At 4-bit precision, the algorithm delivered up to an eight-times speedup in computing attention on Nvidia H100 GPUs compared to the uncompressed 32-bit baseline.

What the market heard

The stock reaction was swift and, in the view of several analysts, disproportionate. Wells Fargo analyst Andrew Rocha noted that TurboQuant directly attacks the cost curve for memory in AI systems. If adopted broadly, he said, it quickly raises the question of how much memory capacity the industry actually needs. But Rocha and others also cautioned that the demand picture for AI memory remains strong, and that compression algorithms have existed for years without fundamentally altering procurement volumes.

The concern is not unfounded, however. AI infrastructure spending is growing at extraordinary rates, with Meta alone committing up to $27 billion in a recent deal with Nebius for dedicated compute capacity, and Google, Microsoft, and Amazon collectively planning hundreds of billions in capital expenditure on data centres through 2026. A technology that reduces memory requirements by six times does not reduce spending by six times, because memory is only one component of a data centre’s cost. But it changes the ratio, and in an industry spending at this scale, even marginal efficiency gains compound quickly.
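The gap between a sixfold memory reduction and the resulting change in total cost is simple arithmetic. In the sketch below, the memory share of total cost is a hypothetical input, not a figure from Google or from any analyst, and the model assumes memory spending falls in direct proportion to memory requirements.

```python
def relative_cost(memory_share, memory_reduction=6.0):
    """Total cost after shrinking only the memory portion, as a fraction of the original."""
    return (1.0 - memory_share) + memory_share / memory_reduction

for share in (0.1, 0.3, 0.5):  # hypothetical memory shares of total cost
    print(f"memory share {share:.0%}: new cost is {relative_cost(share):.1%} of original")
```

If memory were 30 per cent of the bill, for example, total cost would fall to 75 per cent of the original: a meaningful saving, but nowhere near a factor of six.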

The efficiency question

TurboQuant arrives at a moment when the AI industry is being forced to confront the economics of inference. Training a model is a one-time cost, however enormous. Running it, serving millions of queries per day with acceptable latency and accuracy, is the recurring expense that determines whether AI products are financially viable at scale. The key-value cache is central to this calculation: it is the bottleneck that limits how many concurrent users a single GPU can serve and how long a context window a model can practically support.
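To see how cache size caps concurrency, consider a back-of-the-envelope sketch. Every number in it is an assumption chosen for round arithmetic: an 80 GiB accelerator, 16 GiB of model weights, a hypothetical model shape, and a 32,768-token context per user. It also ignores activations and other runtime overhead.

```python
GIB = 2**30

def max_sequences(bits_per_value, gpu_bytes=80 * GIB, weight_bytes=16 * GIB,
                  layers=32, kv_heads=8, head_dim=128, seq_len=32_768):
    """How many full-length sequences fit in the memory left after model weights."""
    values_per_seq = 2 * layers * kv_heads * head_dim * seq_len  # keys + values
    cache_bits_per_seq = values_per_seq * bits_per_value
    budget_bits = (gpu_bytes - weight_bytes) * 8
    return budget_bits // cache_bits_per_seq

for bits in (16, 4, 3):
    print(f"{bits:2d}-bit cache: {max_sequences(bits)} concurrent sequences")
```

Under these assumptions the same GPU goes from holding 16 full-length conversations at 16 bits to 85 at 3 bits, which is the sense in which the cache limits how many users one GPU can serve.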

Compression techniques like TurboQuant are part of a broader push toward making inference cheaper, alongside hardware improvements such as Nvidia’s Vera Rubin architecture and Google’s own Ironwood TPUs. The question is whether these efficiency gains will reduce the total amount of hardware the industry buys, or whether they will simply enable more ambitious deployments at roughly the same cost. The history of computing suggests the latter: when storage gets cheaper, people store more; when bandwidth increases, applications consume it.

For Google, TurboQuant also has a direct commercial application beyond language models. The blog post notes that the algorithm improves vector search, the technology that powers semantic similarity lookups across billions of items. Google tested it against existing methods on the GloVe benchmark dataset and found it achieved superior recall ratios without requiring the large codebooks or dataset-specific tuning that competing approaches demand. This matters because vector search underpins everything from Google Search to YouTube recommendations to advertising targeting, which is to say, it underpins Google’s revenue.

The paper’s contribution is real: a training-free compression method that achieves measurably better results than the existing state of the art, with strong theoretical foundations and practical implementation on production hardware. Whether it reshapes the economics of AI infrastructure or simply becomes one more optimisation absorbed into the industry’s insatiable appetite for compute is a question the market will answer over months, not hours.
