After a Tesla driver fatally crashed into a truck last May while using the vehicle’s Autopilot feature, a federal investigation was launched to determine the feature’s safety. Some thought a recall was imminent, but the investigation has now concluded with no recall in sight.

Though the loss of life is tragic and shows Tesla still has much work to do, the 13-page report by the National Highway Traffic Safety Administration (NHTSA) seems to suggest the vehicles are still safer on the whole because of the feature.

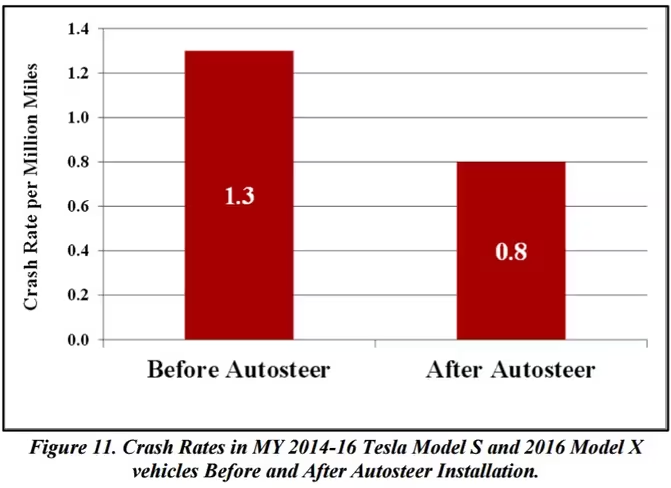

A key finding was that airbag-deploying crashes dropped “by almost 40 percent” after Tesla introduced the Autopilot feature. That doesn’t seem to control for other Tesla updates that could have improved safety in the same period, but it’s still a significant figure.

Who would’ve thunk it: computers may be better at driving than humans after all. But it’s also important to understand their limitations.

An important argument in the report is that Tesla’s automatic emergency braking (AEB) system, like those of other companies, is not designed to avoid all types of accidents.

In particular, none of the vehicles studied by the administration were designed to prevent collisions in which another vehicle is crossing perpendicularly in front of you. The report notes that “braking for crossing path collisions, such as that present in the Florida fatal crash, are outside the expected performance capabilities of the system.”

The report also differentiates between Autopilot mode – which is meant to basically be an enhanced cruise control with some steering – and AEB, which is a separate system that works regardless of Autopilot being on or off.

Data from the crash shows that Tesla’s emergency braking system did not activate (again, it wasn’t designed for that kind of collision), but also that the driver had “at least seven seconds” to see the truck, whereas most of the crashes studied unfold within three to four seconds.

Even with such short notice, the report suggests, most drivers will try to brake or steer away, but in the Florida crash the driver took “no braking, steering, or other actions” to prevent the crash. The driver of the truck he crashed into said the man had been watching a Harry Potter movie, but there is no evidence to confirm that claim.

Of course, part of the argument against Tesla is that you wouldn’t even be able to watch a movie (or, you know, take a nap) without such extensive automation.

Tesla does have checks in place, like pinging you with audiovisual notifications and requiring you to put your hands on the wheel after a certain amount of time (which varies with traffic conditions). Following the accident, an update made Autopilot stingier: if you ignore the warnings and reminders enough times, you will “strike out” and not be able to engage Autopilot until your next drive.

Tesla’s Autopilot reveal gave some people hope they could get to their destination without actually having to drive (in at least one case, it helped an injured man get to a hospital), but the truth is the feature is still only meant to be an aid, not a replacement for driving skills.

Even taking the temptation for distraction into account, the near-40 percent decrease in airbag-deploying crashes suggests Autopilot is doing more good than harm. It’s up to Tesla and other carmakers to keep educating people about the limitations of their automated systems as those systems become more popular. And it’s up to you to always pay attention on the road.
