The internet is not easy to navigate for people with visual impairments. While screen reader applications can help, websites and users often don’t add alt text to images, so the reader can’t describe to the user what a picture shows.

Thankfully, plenty of AI models have emerged in the last few years that make this task easier by automatically captioning photos. Facebook, which introduced a model called Automatic Alternative Text (AAT) in 2016, has updated it to recognize more than 10 times as many concepts in a photo as before, and to describe them in greater detail.

The refreshed model can recognize things such as activities, landmarks, and types of animals in a picture. It can also tell you whether people are standing at the front or the back of a scene.

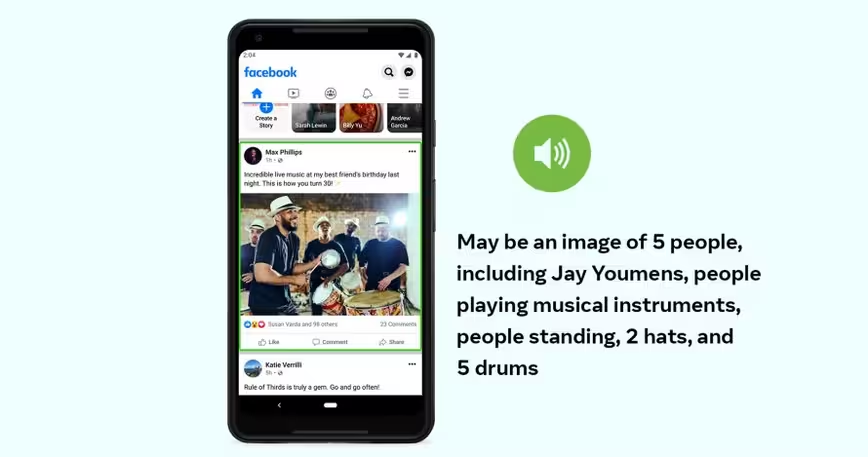

Facebook’s earlier model would’ve described the photo above as “maybe a picture of 5 people.” But the updated model would describe it as “maybe an image of 5 people playing musical instruments, people standing, 2 hats, and 5 drums.”

Facebook’s previous model used human-labeled and human-vetted data. But to expand its range and cut down the training time, the team trained the new model on public images, such as Instagram photos with captions and hashtags. The company also claims it made sure that the AI understands different genders, skin colors, and cultural contexts.

The new model also lets users choose to get detailed descriptions for all photos or only for those of specific interest, such as pictures from friends and family in the Facebook News Feed.

There are plenty of enterprise solutions around that let you automatically caption your images. However, Facebook’s model is one of the few large-scale solutions for this problem.

You can learn more about Facebook’s updated image captioning model here.
