CIMON, an AI-powered robot developed by IBM and Airbus, recently acted perfectly normal during interactions with human crew aboard the International Space Station (ISS). A slew of journalists don’t agree with that assessment, but we’ll let you decide.

When a crew member tried to ask it to do stuff, it got confused, misinterpreted certain voice commands, and generally failed to produce the expected results with any consistency.

Yep, sounds like business as usual.

If you own a smart speaker, interact with a virtual assistant, or have ever played Zork (okay, maybe not that one), you know exactly how it feels to interact with an ordinary chatbot – and there’s not exactly a chasm between IBM’s Watson and Amazon’s Alexa.

We love our AI-powered gadgets when they work, and they’re getting better all the time, but when they don’t, they can be frustrating.

In Watson’s defense, it’s among the first AI solutions to be tested in space, and certainly the first floating chatbot on the ISS.

But, as you can clearly see in the video above (starting around the 3:30 mark), the worst it can be accused of is not being very useful.

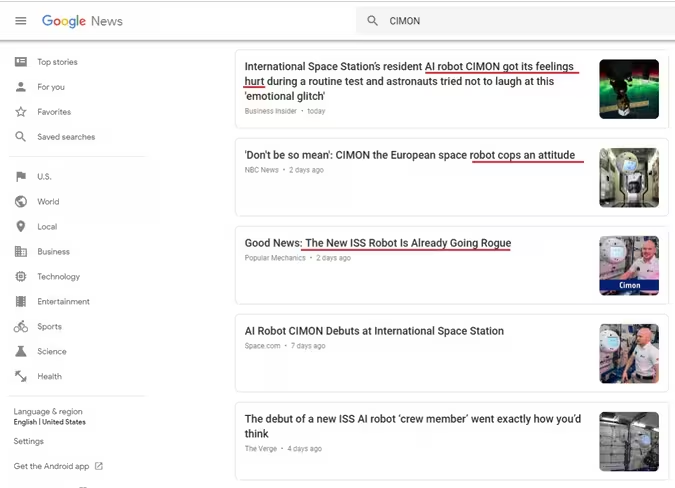

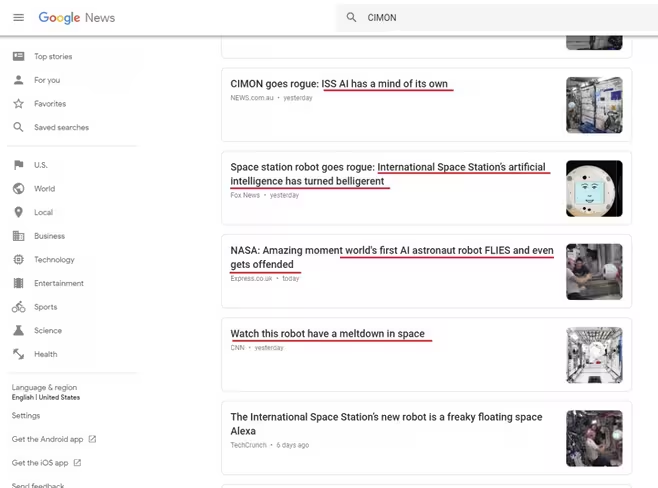

Why then, fellow journalists, does anyone think this is appropriate:

Did I miss a memo about a contest where we all try to come up with the most ridiculous headline possible and then shoehorn as many references to fictional AI/robots into the article as we can? Because, if so, way to work with a difficult prompt on this one, everybody.

It’s time we all stopped reporting every little thing a machine learning-powered construct does as though we’re seeing evidence of robot sentience, even if it’s a joke.

AI can do a lot of things, but even IBM’s Watson – an AI we really like – can’t get offended or get its feelings hurt. And it certainly did not go rogue, get belligerent, or have a meltdown by any measure.

Just stop it. You’re all being ridiculous. No wonder Elon gets so aggravated.

Don’t forget to check out our Artificial Intelligence section for more analysis on what’s really happening in the world of AI.
