The US 2nd Amendment right to keep and bear arms was added to the Constitution in 1791. In the two centuries since, firearm technology has changed significantly. But the 2nd Amendment hasn’t.

In 1791, for example, US citizens were given the right to carry a single-shot firearm or sword in public. Because, well, that’s all there was. In 2021, however, there exists a vast array of weaponry ranging from easily concealed handguns to assault rifles capable of firing hundreds of rounds per minute with uncanny accuracy.

So far, it has proven pointless to debate whether the US founders would have viewed the right to bear arms differently if they’d been aware of semi-automatic weapons such as the Glock 21 or Colt AR-15.

But the advent of deep learning AI technology combined with the stalwart, unwavering nature of US courts when it comes to defending the 2nd Amendment presents some interesting new wrinkles to the conversation.

It’s legal to keep and own a firearm in all 50 US states – though certain conditions do apply and the exact restrictions can vary widely from one state to the next.

In Texas, for example, it’s typically legal to open-carry any firearm you can legally own. Interestingly though, it is not legal to open-carry a sword.

But what happens when we introduce AI-powered weapons to the mix? There are three major areas of concern at the intersection of machine learning and the right to keep and bear arms:

- Sentry guns

- Defense drones

- Autonomous attack vehicles

Sentry guns

In 2018, a Syrian man built this dead-simple autonomous sentry gun:

The system features a universal rifle mount, a trigger-pulling mechanism, and a base that rotates 360 degrees to track targets using heat signatures. It’s completely controlled via laptop and, presumably, could work with any number of simple artificial intelligence systems to track, tag, and eliminate targets.

In the military context, it’s easy to imagine these seeing heavy use in defense of forward bases or along strategic choke points.

Traditionally, similar technology has been banned for civilian use. It’s illegal to set up “spring guns” in defense of property in the US.

In fact, it’s generally illegal in the US to set up any form of active “trap” capable of killing a human indiscriminately. You can protect your property with electrified fences topped with razor wire, but you can’t legally use tripwires attached to shotguns to defend your home or business.

There is a huge leap, however, between a tripwire connected to a shotgun’s trigger and an AI system that can determine whether a stranger approaching the property is a mail carrier, a police officer, or an unwanted intruder.

Arguably, such a system would be safer than guard dogs trained to attack strangers on sight or armed humans – there’s no risk of an autonomous rifle firing out of fear for its life.

And, as you can clearly tell from the video above, we live in a world where just about anybody with access to GitHub and a metal shop could litter their property with fully-autonomous turrets.

Today, it’s easy to see the government pushing back against such a thing (law enforcement likely won’t be keen on such a paradigm). But, as with all arguments concerning personal armament in the US, the more the government embraces autonomous killing machines, the greater the implied impetus becomes for its citizens to do the same.

And that brings us to the open-carry conversation.

Defense drones

This one’s a bit more far-fetched because, unlike sentry guns, defense drones are imaginary future-tech.

The big idea would be a drone capable of quietly following you around like a personal guardian angel. It’d observe the environment for hostile threats and, when necessary, intercede on your behalf in order to prevent you from coming to harm.

Currently, there are millions of people in the US who either can’t physically operate a firearm, such as many disabled and elderly people, or who aren’t legally allowed to, such as felons and children.

But if everyone had access to an autonomous drone capable of killing on their behalf, then all US citizens would be equally protected by the 2nd Amendment.

Autonomous attack vehicles

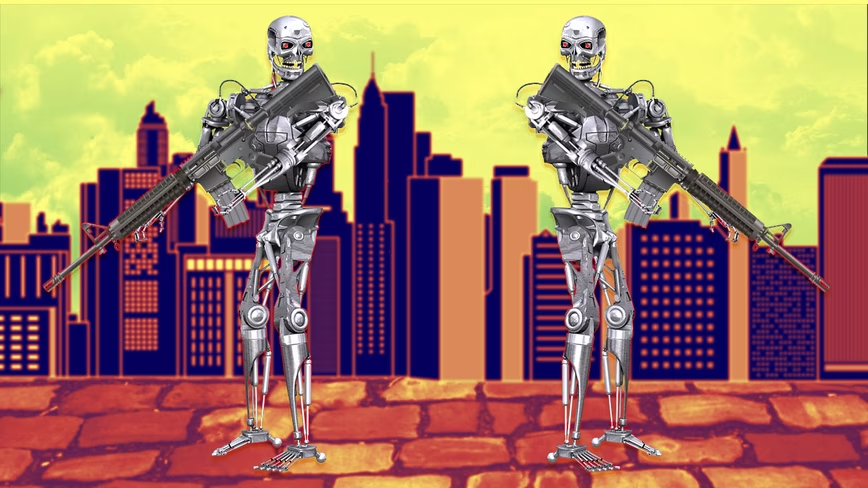

AKA: killer robots. Whether these would be legal under current law would depend on the nature of the machine. If you put autonomous weapons on a vehicle you can ride in, local law enforcement, the DMV, or the NHTSA would probably take exception and ban the machine.

But if you built a humanoid robot and gave it a rifle, you might have a leg (or two) to stand on in future 2A arguments. If said robot was physically constrained to your property, you could argue it was just another kind of autonomous sentry.

At the end of the day, there’s no telling what the future holds. The 2A argument is only going to get murkier as the creation and deployment of autonomous killing technology becomes more accessible to the average person.

Disclaimer: It’s probably illegal to build personal autonomous weapons of any kind in the US right now. You should definitely contact local law enforcement before you consider partaking in any such endeavor.
