What if I told you I was selling a set of computer programs that could automagically solve all of your hiring, diversity, and management problems overnight? You’d be stupid not to at least listen to the rest of the offer, right?

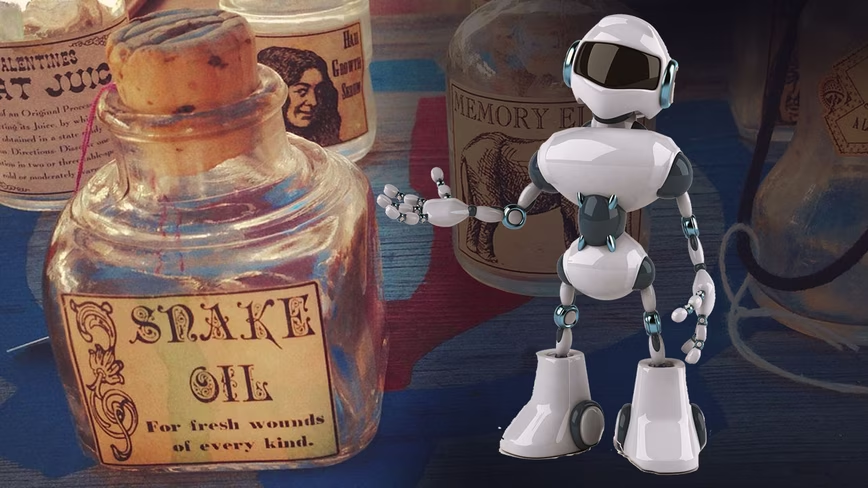

Of course no such system exists. The vast majority of AI products purported to predict social outcomes are blatant scams. The fact that most of them are legal doesn’t stop them from being snake oil.

Typically, the following AI systems fall under the “legal snake oil” category:

- AI that predicts recidivism

- AI that predicts job success

- Predictive policing

- AI that predicts whether an individual will become a criminal or terrorist

- AI that predicts outcomes for children

The reason for this is simple: AI cannot do anything that a human, given enough time and resources, could not do themselves. Artificial intelligence is not psychic, and it cannot predict social outcomes.

As Arvind Narayanan, an associate professor of computer science at Princeton University, said in a series of recent lectures on snake oil AI:

> These problems are hard because we can’t predict the future. That should be common sense. But we seem to have decided to suspend common sense when AI is involved.

Think about it: have you ever heard of a big business that’s never made a single hiring mistake?

These systems work on the same principle as the magic beans from Jack and the Beanstalk. You have to install the systems, pay for them, and then use them for an extended period of time before you can evaluate their effectiveness.

That means you’re being sold on statistics up front. And, when it comes to benchmarking black box AI systems, you may as well be measuring how much mana it takes to cast a fireball spell or counting how many angels can dance on the head of a pin: there’s no science to be done.

Take HireVue, one of the most popular AI-hiring system vendors in the world. Its platform can purportedly measure everything from “leadership potential” to “personality” and “work style” from a combination of video interviews and games.

That sounds pretty fancy, and HireVue’s statistical claims all seem quite impressive. But the bottom line is that AI can’t do any of those things.

The AI doesn’t measure candidate quality; it measures a candidate’s adherence to an arbitrary set of rules decided on by the platform’s developers.

Here’s a snippet from a recent article by the Financial Times’ Sarah O’Connor that explains how silly the video interview process really is:

> While it’s hard to communicate naturally in such an unnatural situation, the platforms simultaneously urge jobseekers to “be authentic” to have the best chance of success. “Get excited and share your energy with the camera, letting your personality shine,” HireVue advises.

Unless you’re being hired to be a TV news anchorperson, this is ridiculous.

“Energy,” “personality,” and, when it comes to humans, “authenticity” are subjective ideas that can’t possibly be measured.

HireVue’s systems, like all AI purported to predict social outcomes, are nothing more than arbitrary discriminators.

If the only “good” candidates are those who smile, maintain eye contact, and exhibit the right “authenticity” and “energy,” then candidates with muscular, neurological, or nervous system disorders who can’t do those things are instantly excluded. Candidates who don’t present as neurotypical on camera are excluded. And candidates whose cultural background differs from that of the software’s creators are excluded.

So why do CEOs and HR leaders still insist on using AI-powered hiring solutions? There are two simple reasons:

- They’re gullible enough to believe the vendor’s claims

- They recognize the value in being able to blame the algorithm

Here are some other scientific and well-sourced journalistic resources explaining why AI purported to predict social outcomes is almost always a scam:

- Artificial Intelligence in the job interview process (University of Sussex)

- Machine Bias (ProPublica)

- How to recognize AI snake oil (Princeton University)

- The Making of AI Snake Oil (Towards Data Science)

- Data and Algorithms at Work: The Case for Worker Technology Rights (UC Berkeley)

- An AI to stop hiring bias could be bad news for disabled people (Wired)

- AI can’t predict how a child’s life will turn out even with a ton of data (MIT Technology Review)

- Measuring the predictability of life outcomes with a scientific mass collaboration (Princeton University)

- AI can’t tell if you’re lying – anyone who says otherwise is selling something (Neural)

- Hiring Algorithms Are Not Neutral (Harvard Business Review)

- The Perils of Predictive Policing (Georgetown Public Policy Review)

- Predictive policing is a scam that perpetuates systemic bias (Neural)
