Data quality is too often an afterthought. Teams spend months instrumenting a feature, building pipelines, and standing up dashboards, and only when a stakeholder flags a suspicious number does anyone ask whether the underlying data is actually correct. By that point, the cost of fixing it has multiplied several times over.

This is not a niche problem. It plays out across engineering organizations of every size, and the consequences range from wasted compute cycles to leadership losing trust in the data team entirely. Most of these failures are preventable if you treat data quality as a first-class concern from day one rather than a cleanup task for later.

How a typical data project unfolds

Before diagnosing the problem, it helps to walk through how most data engineering projects get started. It usually begins with a cross-functional discussion around a new feature being launched and what metrics stakeholders want to track. The data team works with data scientists and analysts to define the key metrics. Engineering figures out what can actually be instrumented and where the constraints are. A data engineer then translates all of this into a logging specification that describes exactly what events to capture, what fields to include, and why each one matters.

That logging spec becomes the contract everyone references. Downstream consumers rely on it. When it works as intended, the whole system hums along well.

Before data reaches production, there is typically a validation phase in dev and staging environments. Engineers walk through key interaction flows, confirm the right events are firing with the right fields, fix what is broken, and repeat the cycle until everything checks out. It is time-consuming, but it is supposed to be the safety net.

The problem is what happens after that.

The gap between staging and production reality

Once data goes live and the ETL pipelines are running, most teams operate under an implicit assumption that the data contract agreed upon during instrumentation will hold. It rarely does, at least not permanently.

Here is a common scenario. Your pipeline expects an event to fire when a user completes a specific action. Months later, a server-side change alters the timing so the event now fires at an earlier stage in the flow, with a different value in a key field. No one flags it as a data-impacting change. The pipeline keeps running and the numbers keep flowing into dashboards.

Weeks or months pass before anyone notices the metrics look flat. A data scientist digs in, traces it back, and confirms the root cause. Now the team is looking at a full remediation effort: updating ETL logic, backfilling affected partitions across aggregate tables and reporting layers, and having an uncomfortable conversation with stakeholders about how long the numbers have been off.

The compounding cost of that single missed change includes engineering time on analysis, effort on codebase updates, compute resources for backfills, and most damagingly, eroded trust in the data team. Once stakeholders have been burned by bad numbers a couple of times, they start questioning everything. That loss of confidence is hard to rebuild.

This pattern is especially common in large systems with many independent microservices, each evolving on its own release cycle. There is no single point of failure, just a slow drift between what the pipeline expects and what the data actually contains.

Why validation cannot stop at staging

The core issue is that data validation is treated as a one-time gate rather than an ongoing process. Staging validation is important, but it only verifies the state of the system at a single point in time. Production is a moving target.

What is needed is data quality enforcement at every layer of the pipeline, from the point data is produced, through transport, and all the way into the processed tables your consumers depend on. The modern data tooling ecosystem has matured enough to make this practical.

Enforcing quality at the source

The first line of defense is the data contract at the producer level. When a strict schema with typed fields and a defined structure is enforced at the point of emission, a breaking change fails immediately rather than silently propagating downstream. Schema registries, commonly used with streaming platforms like Apache Kafka, support this: serializers validate each record against a registered schema before it is transported, and consumers use the registered schema again when they deserialize it. Forward and backward compatibility checks on new schema versions ensure that schema evolution does not silently break consuming pipelines.
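To make the compatibility idea concrete, here is a minimal sketch in plain Python. It applies a deliberately simplified rule set, not the full schema resolution rules that Avro or any particular schema registry implements, and the function name `backward_compat_problems` and the `checkout_completed` schemas are hypothetical.

```python
def backward_compat_problems(old_schema: dict, new_schema: dict) -> list[str]:
    """List reasons a reader on new_schema could not read data written with old_schema.

    Simplified rules (an assumption for illustration): a field added in the
    new schema needs a default, and a field present in both must keep its type.
    """
    old_fields = {f["name"]: f for f in old_schema["fields"]}
    problems = []
    for field in new_schema["fields"]:
        name = field["name"]
        if name not in old_fields:
            if "default" not in field:
                problems.append(f"added field '{name}' has no default")
        elif old_fields[name]["type"] != field["type"]:
            problems.append(
                f"field '{name}' changed type from "
                f"{old_fields[name]['type']!r} to {field['type']!r}"
            )
    return problems


# Hypothetical event schemas, written in an Avro-style JSON structure.
v1 = {"name": "checkout_completed", "fields": [
    {"name": "user_id", "type": "string"},
    {"name": "amount_cents", "type": "long"},
]}
v2_ok = {"name": "checkout_completed", "fields": [
    {"name": "user_id", "type": "string"},
    {"name": "amount_cents", "type": "long"},
    {"name": "currency", "type": "string", "default": "USD"},
]}
v2_breaking = {"name": "checkout_completed", "fields": [
    {"name": "user_id", "type": "string"},
    {"name": "amount_cents", "type": "string"},   # type changed
    {"name": "currency", "type": "string"},       # added without a default
]}

print(backward_compat_problems(v1, v2_ok))
print(backward_compat_problems(v1, v2_breaking))
```

Running a check like this in CI, before a new schema version is registered, is one way to turn a silent breaking change into a failed build.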

Avro-formatted schemas stored in a schema registry are a widely adopted pattern for exactly this reason. They create an explicit, versioned contract between producers and consumers that is enforced at runtime and not just documented in a spec file that may or may not be read.
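As a rough illustration of what "enforced at runtime" means on the producer side, the sketch below validates each record against a typed schema before it is emitted. It uses only the Python standard library and covers a handful of primitive types; a real deployment would rely on an Avro library and a registry client. The schema, the `validate_record` and `emit` helpers, and the list used as a sink are all hypothetical.

```python
from typing import Any

# Subset of Avro primitive type names mapped to Python types (illustration only).
PRIMITIVES = {"string": str, "long": int, "double": float, "boolean": bool}

# Hypothetical event schema in an Avro-style structure.
SCHEMA = {"name": "checkout_completed", "fields": [
    {"name": "user_id", "type": "string"},
    {"name": "amount_cents", "type": "long"},
    {"name": "is_guest", "type": "boolean"},
]}


def validate_record(record: dict[str, Any], schema: dict) -> None:
    """Raise ValueError if the record does not match the schema exactly."""
    declared = {f["name"] for f in schema["fields"]}
    unknown = set(record) - declared
    if unknown:
        raise ValueError(f"unexpected fields: {sorted(unknown)}")
    for field in schema["fields"]:
        name, type_name = field["name"], field["type"]
        if name not in record:
            raise ValueError(f"missing required field '{name}'")
        value = record[name]
        # bool is a subclass of int in Python, so reject it explicitly for "long".
        bool_as_long = type_name == "long" and isinstance(value, bool)
        if bool_as_long or not isinstance(value, PRIMITIVES[type_name]):
            raise ValueError(
                f"field '{name}' expected {type_name}, got {type(value).__name__}"
            )


def emit(record: dict[str, Any], schema: dict, sink: list) -> None:
    """Validate first, then hand the record to the transport (a list stands in here)."""
    validate_record(record, schema)
    sink.append(record)


sink: list = []
emit({"user_id": "u1", "amount_cents": 1999, "is_guest": False}, SCHEMA, sink)

try:
    # A producer change that starts sending the amount as a string fails at the source.
    emit({"user_id": "u2", "amount_cents": "19.99", "is_guest": False}, SCHEMA, sink)
except ValueError as err:
    print(f"rejected at source: {err}")
```

The design choice worth noting is that the producer fails loudly on the first bad record, so the problem surfaces in the service that caused it rather than in a dashboard weeks later.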

Write, audit, publish: A quality gate in the pipeline

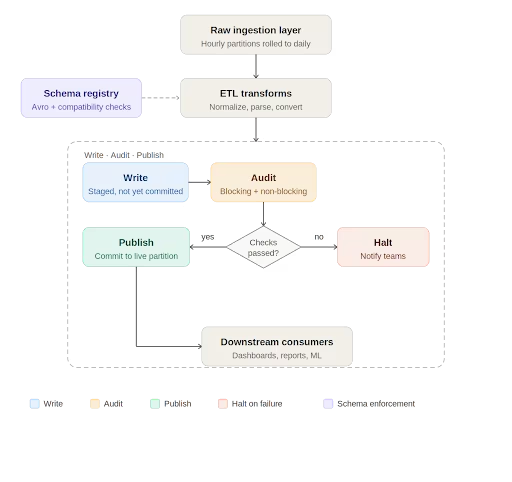

At the processing layer, Apache Iceberg supports a useful pattern for data quality enforcement called Write-Audit-Publish, or WAP. Iceberg tracks table state in metadata, and every write is recorded as a commit that produces a new snapshot. The WAP workflow takes advantage of this to introduce an audit step before data is declared production-ready.

In practice, the daily pipeline works like this. Raw data lands in an ingestion layer, typically rolled up from smaller time-window partitions into a full daily partition. The ETL job picks up this data, runs transformations such as normalizations, timezone conversions, and default value handling, and writes to an Iceberg table. If WAP is enabled on that table, the write is staged with its own commit identifier rather than being immediately committed to the live partition.
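The transformation step might look something like the following sketch, which normalizes an event name, converts a timestamp to UTC, and fills defaults for missing optional fields. The field names, the default values, and the choice to treat timestamps without an offset as UTC are assumptions made for the example, not details from any specific pipeline.

```python
from datetime import datetime, timezone

# Hypothetical defaults for optional fields.
DEFAULTS = {"platform": "unknown", "country": "ZZ"}


def transform(raw: dict) -> dict:
    """Apply normalization, timezone conversion, and default handling to one raw event."""
    # Default handling: drop explicit nulls so the defaults can take their place.
    row = {**DEFAULTS, **{k: v for k, v in raw.items() if v is not None}}

    # Normalization: trim whitespace and lowercase the event name.
    row["event_name"] = row["event_name"].strip().lower()

    # Timezone conversion: parse an ISO 8601 timestamp and store it in UTC.
    ts = datetime.fromisoformat(row["event_ts"])
    if ts.tzinfo is None:
        # Assumption for this sketch: timestamps without an offset are already UTC.
        ts = ts.replace(tzinfo=timezone.utc)
    row["event_ts"] = ts.astimezone(timezone.utc).isoformat()
    return row


raw_event = {
    "event_name": "  Checkout_Completed ",
    "event_ts": "2024-03-01T09:30:00+05:30",
    "platform": None,
}
print(transform(raw_event))
```

Required fields such as `event_name` and `event_ts` are assumed to have been validated upstream; the function raises `KeyError` if they are missing.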

At this point, automated data quality checks run against the staged data. These checks fall into two categories. Blocking checks are critical validations that look for problems such as missing required columns, null values in non-nullable fields, and enum values outside the expected set. If a blocking check fails, the pipeline halts, the relevant teams are notified, and downstream consumers are informed that the data for that partition is not yet available. Non-blocking checks catch issues that are meaningful but not severe enough to stop the pipeline. They generate alerts for the engineering team to investigate and may trigger targeted backfills for a small number of recent partitions.
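One way to model the two categories is to attach a `blocking` flag to each check and sort failures into two buckets. The sketch below does that over a list of dictionaries standing in for the staged partition. The check names, the column names, and the 50 percent null threshold are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Callable

Rows = list[dict]
ALLOWED_PLATFORMS = {"ios", "android", "web"}  # hypothetical enum values


@dataclass(frozen=True)
class Check:
    name: str
    blocking: bool                   # True: halt the pipeline on failure
    passes: Callable[[Rows], bool]   # returns True when the data looks healthy


CHECKS = [
    Check("required_columns_present", True,
          lambda rows: all({"user_id", "platform"}.issubset(r) for r in rows)),
    Check("user_id_not_null", True,
          lambda rows: all(r.get("user_id") is not None for r in rows)),
    Check("platform_in_enum", True,
          lambda rows: all(r.get("platform") in ALLOWED_PLATFORMS for r in rows)),
    # Non-blocking: alert if more than half of the rows lack a referrer.
    Check("referrer_mostly_populated", False,
          lambda rows: sum(r.get("referrer") is None for r in rows) <= 0.5 * len(rows)),
]


def run_checks(rows: Rows, checks: list[Check] = CHECKS) -> tuple[list[str], list[str]]:
    """Return (blocking_failures, warnings) as lists of check names."""
    blocking_failures, warnings = [], []
    for check in checks:
        if not check.passes(rows):
            (blocking_failures if check.blocking else warnings).append(check.name)
    return blocking_failures, warnings


staged_rows = [
    {"user_id": "u1", "platform": "ios", "referrer": None},
    {"user_id": None, "platform": "tv", "referrer": None},
]
blocking_failures, warnings = run_checks(staged_rows)
print("blocking:", blocking_failures)
print("warnings:", warnings)
```

A caller would halt and notify when `blocking_failures` is non-empty, and would raise alerts without stopping the pipeline when only `warnings` is populated.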

Only when all checks pass does the pipeline commit the data to the live table and mark the job as successful. Consumers get data that has been explicitly validated, not just processed.
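The overall write, audit, publish sequence can be sketched with a toy in-memory table. This is not Iceberg's API; `ToyTable`, `write_audit_publish`, and the `audit` callable are hypothetical stand-ins that only show the control flow: staged data becomes visible to consumers only after the audit returns no failures.

```python
from typing import Callable

Rows = list[dict]


class ToyTable:
    """In-memory stand-in for a table with staged writes and live partitions."""

    def __init__(self) -> None:
        self.live: dict[str, Rows] = {}                  # partition -> rows
        self.staged: dict[str, tuple[str, Rows]] = {}    # wap_id -> (partition, rows)

    def write_staged(self, wap_id: str, partition: str, rows: Rows) -> None:
        self.staged[wap_id] = (partition, rows)

    def publish(self, wap_id: str) -> None:
        partition, rows = self.staged.pop(wap_id)
        self.live[partition] = rows


def write_audit_publish(
    table: ToyTable,
    wap_id: str,
    partition: str,
    rows: Rows,
    audit: Callable[[Rows], list[str]],
) -> list[str]:
    """Stage the write, audit it, and publish only if the audit finds nothing."""
    table.write_staged(wap_id, partition, rows)   # write
    failures = audit(rows)                        # audit
    if not failures:
        table.publish(wap_id)                     # publish
    # On failure the staged data is left in place for inspection.
    return failures


def audit_user_ids(rows: Rows) -> list[str]:
    """Hypothetical blocking audit: every row needs a user_id."""
    return ["user_id_not_null"] if any(r.get("user_id") is None for r in rows) else []


table = ToyTable()
ok = write_audit_publish(table, "run-1", "2024-03-01", [{"user_id": "u1"}], audit_user_ids)
bad = write_audit_publish(table, "run-2", "2024-03-02", [{"user_id": None}], audit_user_ids)
print(ok, bad, sorted(table.live))
```

Keeping the failed write staged rather than deleting it is a deliberate choice in this sketch, since the team investigating the failure usually wants to look at the rows that tripped the check.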

Data quality as engineering practice, not a cleanup project

There is a broader point embedded in all of this. Data quality cannot be something the team circles back to after the pipeline is built. It needs to be designed into the system from the start and treated with the same discipline as any other part of the engineering stack.

With modern code generation tools making it cheaper than ever to stand up a new pipeline, it is tempting to move fast and validate later. But the maintenance burden of an untested pipeline, especially one feeding dashboards used by product, business, and leadership teams, is significant. A pipeline that runs every day and silently produces wrong numbers is worse than one that fails loudly.

The goal is for data engineers to be producers of trustworthy, well-documented data artifacts. That means enforcing contracts at the source, validating at every stage of transport and transformation, and treating quality checks as a permanent part of the pipeline rather than a one-time gate at launch.

When stakeholders ask whether the numbers are right, the answer should not be "we think so." It should be backed by an auditable, automated process that catches problems before anyone outside the data team ever sees them.

Provided by Medialister