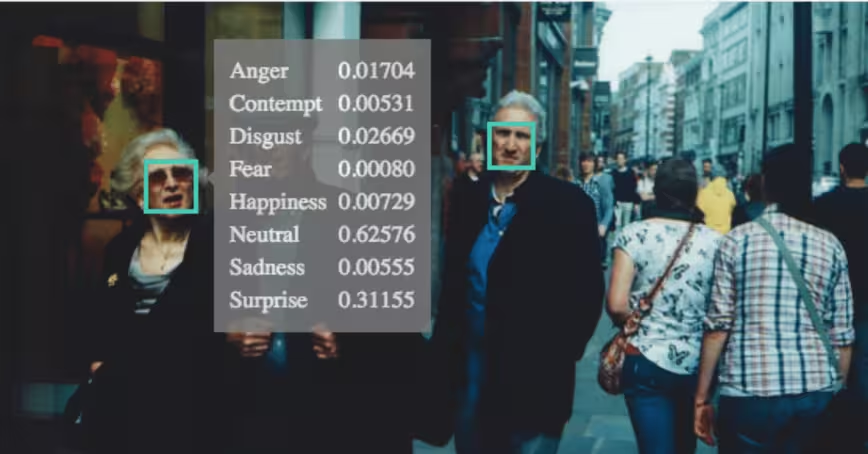

A British police force is set to trial a facial recognition system that infers people’s moods by analyzing CCTV footage.

Lincolnshire Police will be able to use the system to search the footage for certain moods and facial expressions, the London Times reports. It will also allow cops to find people wearing hats and glasses, or carrying bags and umbrellas.

The force has secured funding from the Home Office to test the tool in the market town of Gainsborough, but ethical concerns have delayed the pilot’s launch.

A police spokesperson told the Times that all the footage will be deleted after 31 days. The force will also carry out a human rights and privacy assessment before the trial gets the green light.

But critics say the system will violate people’s privacy.

“There’s a huge amount of money from the Home Office for this technology and they’re getting themselves into legal trouble, breaching human rights and expanding state surveillance while no one is watching,” said Silkie Carlo, director of civil liberties group Big Brother Watch.

The police have also yet to explain how the system works. Emotion detection AI is estimated to be a $20 billion market, but there’s still little scientific evidence that the tech really works. In December 2019, research institute AI Now called for regulators to ban the tech from decisions that impact people’s lives, as the field is “built on markedly shaky foundations.”

“At the same time as these technologies are being rolled out, large numbers of studies are showing that there is… no substantial evidence that people have this consistent relationship between the emotion that you are feeling and the way that your face looks,” AI Now’s co-founder Prof Kate Crawford told the BBC late last year.

If the new system gets rolled out, it risks further eroding privacy in one of the most surveilled countries in the world.
