TL;DR

AWS will sell OpenAI models after Microsoft gave up its exclusive reselling rights, completing a restructuring that began with Amazon’s commitment of up to $50 billion in OpenAI’s $110 billion funding round. The companies jointly built a Stateful Runtime Environment for agentic AI on Bedrock. But the deal arrives as the WSJ reports that OpenAI missed revenue and user targets. The company expects $25 billion in cash burn in 2026 against a $30 billion revenue target, and it has hundreds of billions of dollars in infrastructure commitments to AWS, Azure, and Oracle that assume growth it has not yet demonstrated.

Amazon Web Services will begin selling OpenAI’s models to its cloud customers, the company announced on Tuesday, one day after Microsoft agreed to end the exclusive reselling arrangement that had given Azure sole access to OpenAI’s technology for the first three years of the generative AI era. “It’s something that our customers have asked for, for a really long time,” Matt Garman, AWS’s chief executive, told Bloomberg Television. Some of OpenAI’s latest models will be available in preview on AWS starting Tuesday, with the most powerful GPT models arriving within weeks.

The announcement completes a restructuring of the AI industry’s most consequential partnership that began in February, when Amazon committed up to $50 billion as part of OpenAI’s $110 billion funding round. That round valued the ChatGPT maker at $852 billion, and the commitment was Amazon’s largest-ever investment in any company. OpenAI simultaneously committed to spending $100 billion on AWS computing power and Trainium chips over eight years, consuming two gigawatts of capacity. The question the deal raises is not whether OpenAI’s models are good enough to sell on rival clouds. It is whether OpenAI can sell enough of them, anywhere, to justify what it has promised to spend.

The restructuring

Microsoft and OpenAI amended their partnership agreement on Monday in a restructuring that dismantles the exclusive arrangement that defined the first phase of the AI boom. Microsoft’s licence to OpenAI’s intellectual property for models and products, previously exclusive, becomes non-exclusive but extends through 2032. Microsoft retains its 20 per cent share of OpenAI’s revenue through 2030, now subject to a cap. OpenAI must still ship its models on Azure first unless Microsoft cannot or chooses not to support the required capabilities, a clause that preserves Azure’s early-access advantage while removing the lock-in that kept AWS and Google Cloud customers from accessing OpenAI’s technology through their existing cloud provider. The restructuring also removes the AGI clause, the legally unusual provision that would have terminated Microsoft’s commercial rights if OpenAI’s board determined the company had achieved artificial general intelligence. That clause had become a governance liability rather than a practical safeguard, and its removal signals that both companies have accepted the relationship is commercial, not existential.

The practical significance is that OpenAI can now serve customers on any cloud. For AWS, which spent the first three years of the AI boom assembling the best of the rest on its Bedrock model marketplace, including Anthropic’s Claude, Meta’s Llama, and Mistral, the addition of OpenAI closes what had been Azure’s single most powerful competitive advantage. With Amazon’s expanded investment of up to $25 billion in Anthropic, announced alongside the OpenAI deal, AWS now both offers and holds a major financial stake in the two most commercially significant AI model families in the enterprise market. Superhuman, the productivity company formerly known as Grammarly, had turned to Microsoft for AI services specifically because Azure was the only cloud offering OpenAI’s models. That dynamic is now over. AWS customers can access OpenAI on the infrastructure they already use, with the security configurations and compliance certifications they already have in place.

The product

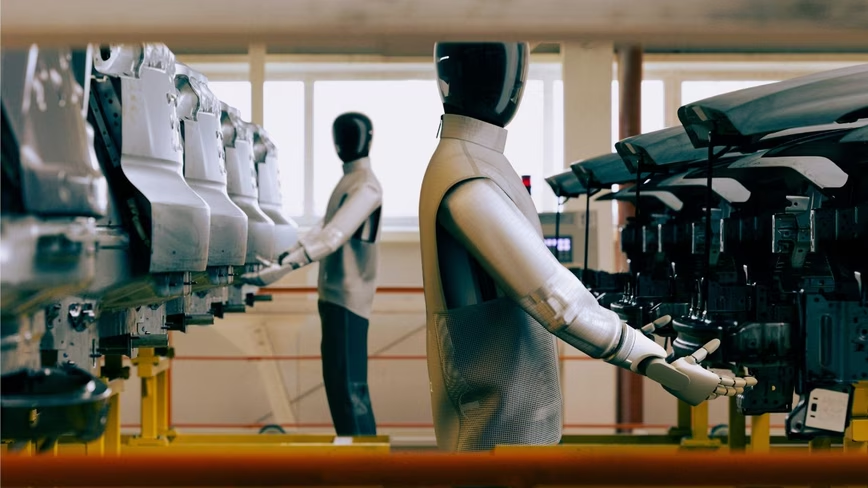

The two companies have jointly developed a Stateful Runtime Environment powered by OpenAI’s models, available through Amazon Bedrock, which provides persistent orchestration and memory for AI agents running complex, multi-step workflows. Instead of stitching together disconnected API requests, the runtime maintains working context across steps, carrying forward memory, tool state, environment configuration, and identity boundaries. Amazon introduced the product as Amazon Bedrock Managed Agents at an event in San Francisco where AWS also announced a broader push into business applications. “Business customers of OpenAI want those models in a trusted environment that they know, and in a trusted infrastructure,” said Denise Dresser, OpenAI’s chief revenue officer. The Stateful Runtime Environment is built to run on AWS infrastructure and integrated with Amazon Bedrock AgentCore, meaning the agentic capabilities are not a standalone product bolted onto existing infrastructure but a jointly engineered layer designed to make OpenAI’s models operationally native to AWS.
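
The article does not describe the runtime’s interface, so what follows is only a minimal Python sketch of the general idea: working context carried from one agent step to the next, set against a stateless style in which the caller must resend the history with every request. `AgentState`, `run_step`, `run_workflow`, and `stateless_call` are hypothetical names, not part of Amazon Bedrock or AgentCore, and a production runtime would presumably keep this state on the service side rather than in a local object.

```python
from dataclasses import dataclass, field


@dataclass
class AgentState:
    """Hypothetical working context carried between the steps of one agent workflow."""
    identity: str                                   # principal the agent acts for (identity boundary)
    env_config: dict = field(default_factory=dict)  # environment settings fixed for the session
    memory: list = field(default_factory=list)      # outputs of earlier steps
    tool_state: dict = field(default_factory=dict)  # per-tool status, keyed by step name


def stateless_call(history: list, step: str) -> str:
    """Stateless style: the caller must resend the full history on every request."""
    return f"{step} [step {len(history) + 1}, history resent by caller]"


def run_step(state: AgentState, step: str) -> str:
    """Stateful style: a stand-in for one model or tool call that reads and updates shared state."""
    result = f"{step} [step {len(state.memory) + 1}, identity={state.identity}]"
    state.memory.append(result)      # memory carries forward to the next step
    state.tool_state[step] = "done"  # tool state persists across steps too
    return result


def run_workflow(state: AgentState, steps: list) -> list:
    # One state object flows through every step, so no context is rebuilt between calls.
    return [run_step(state, s) for s in steps]


if __name__ == "__main__":
    state = AgentState(identity="analyst@example.com", env_config={"region": "us-east-1"})
    for line in run_workflow(state, ["fetch invoices", "reconcile", "draft report"]):
        print(line)

    # The stateless equivalent makes the caller stitch the history together by hand.
    history = []
    for s in ["fetch invoices", "reconcile", "draft report"]:
        history.append(stateless_call(history, s))
    print(history[-1])
```

In this sketch the third step can see the first two without the caller passing anything back in; in the stateless version, maintaining the growing `history` list is entirely the caller’s job.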

The joint product reflects a broader shift in how AI models are sold. The first generation of the AI boom was defined by model access: which cloud had the best models. The second generation is being defined by model integration: which cloud makes those models most useful within existing enterprise workflows. Microsoft has already begun releasing its own in-house AI models through Mustafa Suleyman’s superintelligence team, a parallel strategy that suggests Microsoft is preparing for a future in which OpenAI’s models are one offering among many rather than Azure’s defining advantage. Google Cloud responded with a $750 million partner fund for agentic AI deployments at Cloud Next 2026, investing through consulting partners to lock in enterprise customers building AI agents on Vertex AI. The three hyperscalers are converging, from different starting positions, on the same strategy: multi-model marketplaces with agentic integration. AWS starts with the broadest infrastructure footprint and now the broadest model catalogue. Azure starts with the deepest Microsoft 365 integration and a partnership with OpenAI that dates back to 2019. Google starts with its own Gemini models and the closest relationship between AI research and cloud product development. The winner will be determined not by which cloud has the best models but by which cloud makes those models disappear into the workflow.

The problem

The AWS deal arrives at the worst possible moment for OpenAI’s growth narrative. On Monday, the Wall Street Journal reported that OpenAI had fallen short of several internal targets for both revenue and user growth in 2026. An internal milestone of one billion weekly active ChatGPT users by the end of last year went unmet. Monthly revenue targets were missed multiple times as Anthropic gained ground in coding and enterprise and Google’s Gemini claimed a larger share of the consumer market. Chief financial officer Sarah Friar has reportedly warned colleagues that if revenue growth does not accelerate, the company could face difficulty funding its compute commitments. OpenAI expects to burn through $25 billion in cash in 2026 against a revenue target of $30 billion. OpenAI pushed back, saying it was “firing on all cylinders,” but the market responded to the WSJ report by selling the companies most exposed to OpenAI’s spending commitments: Oracle dropped more than 4 per cent, and chipmakers Nvidia, Broadcom, and AMD declined between 3 and 4 per cent.

The arithmetic of OpenAI’s infrastructure commitments is the story underneath the AWS announcement. OpenAI has committed to spending $100 billion on AWS over eight years, on top of its existing Azure spending and a $300 billion, five-year partnership with Oracle for Stargate computing infrastructure. Those commitments assume revenue will grow from approximately $12 billion in annualised run rate to a scale that can sustain hundreds of billions in infrastructure spending. The AWS deal is designed to help close that gap by giving OpenAI access to the cloud’s largest customer base, but it also raises the stakes: every dollar OpenAI earns on AWS is a dollar that might have been earned on Azure, and Microsoft’s 20 per cent revenue share means it collects whether the models run on its cloud or a competitor’s. Garman said there was still more demand for AI services than supply of computing power. “The OpenAI team, I think, would gladly take more capacity from us this year and next year and the year after that as we add it,” he said. The supply constraint may be real, but the revenue constraint is what the market is watching. OpenAI’s models are now available everywhere. The question is whether everywhere is enough.
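
The scale of that gap can be roughed out from the article’s own figures. This Python sketch assumes, purely for illustration, that each commitment is spread evenly across its term (real contracts are unlikely to be), leaves out Azure spending because no figure is given, and ignores the undisclosed cap on Microsoft’s revenue share. The helper names are hypothetical.

```python
# All figures in billions of US dollars, as reported in the article.
COMMITMENTS = {
    # name: (total commitment, term in years)
    "AWS": (100, 8),
    "Oracle (Stargate)": (300, 5),
}
REVENUE_TARGET_2026 = 30
MICROSOFT_REVENUE_SHARE = 0.20  # the cap on this share is undisclosed, so it is ignored here


def annualised(total: float, years: int) -> float:
    """Straight-line average per year (an assumption, not the contracts' real schedule)."""
    return total / years


def commitment_summary() -> dict:
    per_year = {name: annualised(total, years) for name, (total, years) in COMMITMENTS.items()}
    total_per_year = sum(per_year.values())
    return {
        "per_year": per_year,
        "total_per_year": total_per_year,
        # How many times the 2026 revenue target the annual commitments represent.
        "multiple_of_revenue_target": total_per_year / REVENUE_TARGET_2026,
        # Microsoft's share if the full target were hit and no cap applied.
        "microsoft_share_uncapped": MICROSOFT_REVENUE_SHARE * REVENUE_TARGET_2026,
    }


if __name__ == "__main__":
    s = commitment_summary()
    for name, amount in s["per_year"].items():
        print(f"{name}: ${amount:.1f}bn a year")
    print(f"Total (excluding Azure): ${s['total_per_year']:.1f}bn a year")
    print(f"That is {s['multiple_of_revenue_target']:.1f}x the $30bn 2026 revenue target")
    print(f"Uncapped Microsoft share of $30bn: ${s['microsoft_share_uncapped']:.1f}bn")
```

On those assumptions, the AWS and Oracle commitments alone average about $72.5 billion a year, roughly 2.4 times OpenAI’s $30 billion revenue target for 2026, before any Azure spending is counted.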