Scientists from Cornell University have developed a way for robots to identify physical interactions just by analyzing a user’s shadows.

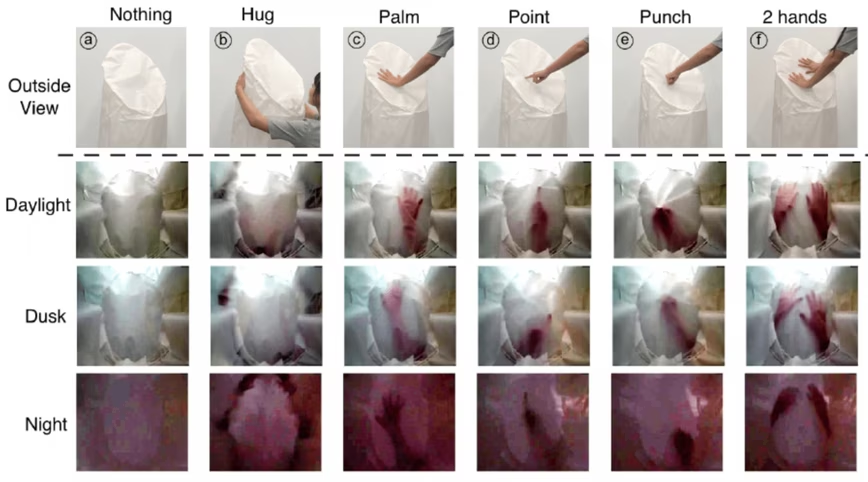

Their ShadowSense system uses an off-the-shelf USB camera to capture the shadows produced by hand gestures on a robot’s skin. Algorithms then classify the movements to infer the user’s specific interaction.

Guy Hoffman, the study's senior author, said the method provides a natural way of interacting with robots without relying on large and costly sensor arrays:

“Touch is such an important mode of communication for most organisms, but it has been virtually absent from human-robot interaction. One of the reasons is that full-body touch used to require a massive number of sensors, and was therefore not practical to implement. This research offers a low-cost alternative.”

The researchers tried out the system on an inflatable robot with a camera underneath its skin.

They trained and tested the classification algorithms with shadow images of six gestures: touching with a palm, punching, touching with two hands, hugging, pointing, and not touching.

The system distinguished between the gestures with 87.5% to 96% accuracy, depending on the lighting.
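To make the train-and-test idea concrete, here is a minimal sketch of gesture classification on image data. It is not the researchers' method: it assumes a simple nearest-centroid classifier, and it runs on synthetic 16x16 arrays standing in for shadow images rather than on real camera frames. Only the six gesture labels come from the study; the function names, image size, and noise level are illustrative assumptions, and the accuracy it prints says nothing about the figures reported above.

```python
import numpy as np

# Gesture labels taken from the article; everything else is illustrative.
GESTURES = ["palm", "punch", "two_hands", "hug", "point", "no_touch"]


def make_synthetic_shadows(rng, prototypes, per_class, noise=0.3):
    """Return (images, labels): noisy copies of each class prototype.

    Assumption: real shadow images are replaced by random placeholder
    patterns plus Gaussian noise, purely so the sketch can run.
    """
    images, labels = [], []
    for label, proto in enumerate(prototypes):
        noisy = proto + noise * rng.standard_normal((per_class, *proto.shape))
        images.append(noisy)
        labels.append(np.full(per_class, label))
    return np.concatenate(images), np.concatenate(labels)


def fit_centroids(images, labels, n_classes):
    """Average the flattened training images of each class."""
    flat = images.reshape(len(images), -1)
    return np.stack([flat[labels == c].mean(axis=0) for c in range(n_classes)])


def predict(centroids, images):
    """Assign each image to the class with the nearest centroid."""
    flat = images.reshape(len(images), -1)
    dists = np.linalg.norm(flat[:, None, :] - centroids[None, :, :], axis=2)
    return dists.argmin(axis=1)


def run_demo(seed=0):
    """Train on one synthetic set, test on another, return accuracy."""
    rng = np.random.default_rng(seed)
    prototypes = rng.random((len(GESTURES), 16, 16))
    train_x, train_y = make_synthetic_shadows(rng, prototypes, per_class=40)
    test_x, test_y = make_synthetic_shadows(rng, prototypes, per_class=20)
    centroids = fit_centroids(train_x, train_y, len(GESTURES))
    predictions = predict(centroids, test_x)
    return float((predictions == test_y).mean())


if __name__ == "__main__":
    print(f"accuracy on synthetic data: {run_demo():.3f}")
```

Keeping the training and test sets separate, as the sketch does, is what makes an accuracy figure meaningful: the classifier is scored only on images it has not seen.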

The researchers envision mobile guide robots using the tech to respond to different gestures, such as turning to face a human when they detect a poke, and moving away when they sense a tap on the back.

The technology could also add interactive touch screens to inflatable robots and make home assistant droids more privacy-friendly.

“If the robot can only see you in the form of your shadow, it can detect what you’re doing without taking high fidelity images of your appearance,” said Hoffman. “That gives you a physical filter and protection, and provides psychological comfort.”

You can read the full study paper here.
