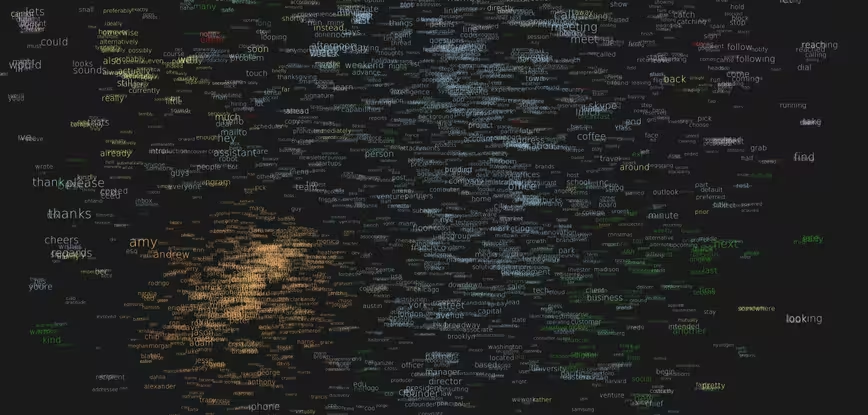

The folks over at x.ai – creators of Amy, the artificial intelligence answer to scheduling meetings – have had a shot at showing exactly what it looks like inside their bot’s brain, using AI, of course.

The team used a powerful deep-learning model, a Recurrent Neural Network (RNN), to trawl 500,000 words in its database, looking at their sequence in a sentence to understand what they mean, then predicting how to categorize them.

Without a human ever telling the RNN the definitions of different word groups, it has managed to understand that Stanford is different from Instagram, and that Jesse, Luke and Jason are names.

This data was cut down to the 3,500 most frequently used words and then projected into a 2D shape to show the relationships the AI has made between different words.
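For readers curious how a step like this might look in code, here is a minimal sketch. x.ai has not said which projection method it used, so the principal component analysis (PCA) below is only a stand-in, and the function names `top_k_words` and `project_2d` and the toy inputs are hypothetical.

```python
from collections import Counter
import math


def top_k_words(tokens, k):
    """Keep only the k most frequent words, paired with their counts."""
    return Counter(tokens).most_common(k)


def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def _principal_axis(rows, dims, iterations=100):
    """Power iteration for the direction of greatest variance in rows."""
    axis = [1.0 / (j + 1) for j in range(dims)]  # arbitrary non-zero start
    for _ in range(iterations):
        scores = [_dot(r, axis) for r in rows]
        new = [sum(s * r[j] for s, r in zip(scores, rows)) for j in range(dims)]
        norm = math.sqrt(_dot(new, new))
        if norm == 0.0:
            return [0.0] * dims  # no variance left to capture
        axis = [x / norm for x in new]
    return axis


def project_2d(vectors):
    """Project equal-length word vectors onto their top two principal axes.

    PCA is an assumption here; the article does not name the method used.
    """
    n, dims = len(vectors), len(vectors[0])
    mean = [sum(v[j] for v in vectors) / n for j in range(dims)]
    centered = [[v[j] - mean[j] for j in range(dims)] for v in vectors]

    first = _principal_axis(centered, dims)
    xs = [_dot(r, first) for r in centered]

    # Remove the first component, then find the best axis in what remains.
    residual = [[r[j] - x * first[j] for j in range(dims)]
                for r, x in zip(centered, xs)]
    second = _principal_axis(residual, dims)
    ys = [_dot(r, second) for r in residual]
    return list(zip(xs, ys))


# Toy data standing in for the real vocabulary and learned vectors.
words = ["coffee", "skype", "coffee", "founder", "coffee", "skype"]
print(top_k_words(words, 2))
print(project_2d([[0.0, 0.0], [1.0, 1.0], [2.0, 2.0]]))
```

In a real pipeline the vectors would come from the trained network, one per word, and the resulting pairs would become the x and y positions on the map.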

The size reflects the frequency of word use – looks like guys called Andrew are Amy’s biggest users – with blue representing nouns, purple for verbs, orange for proper nouns, green for adjectives, red for conjunctions and yellow for adverbs.

If you look closely, you’ll notice that the kinds of busy people who are beta users of Amy are also likely to talk about founders, coffee and Skype.

Check it out more closely for yourself here.
