A team of researchers from MIT and Massachusetts General Hospital recently published a study linking social awareness to individual neuronal activity. To the best of our knowledge, this is the first time evidence for the ‘theory of mind’ has been identified at this scale.

Measuring large groups of neurons is the bread-and-butter of neurology. Even a simple MRI can highlight specific regions of the brain and give scientists an indication of what they’re used for and, in many cases, what kind of thoughts are happening. But figuring out what’s going on at the single-neuron level is an entirely different feat.

According to the paper:

Here, using recordings from single cells in the human dorsomedial prefrontal cortex, we identify neurons that reliably encode information about others’ beliefs across richly varying scenarios and that distinguish self- from other-belief-related representations … these findings reveal a detailed cellular process in the human dorsomedial prefrontal cortex for representing another’s beliefs and identify candidate neurons that could support theory of mind.

In other words: the researchers believe they’ve observed individual neurons forming the patterns that let us consider what other people might be feeling and thinking. They’re identifying empathy in action.

This could have a huge impact on brain research, especially in the area of mental illness and social anxiety disorders or in the development of individualized treatments for people with autism spectrum disorder.

Perhaps the most interesting thing about it, however, is what we could potentially learn about consciousness from the team’s work.


The researchers asked 15 patients who were slated to undergo a specific kind of brain surgery (not related to the study) to answer a few questions and undergo a simple behavioral test. Per a press release from Massachusetts General Hospital:

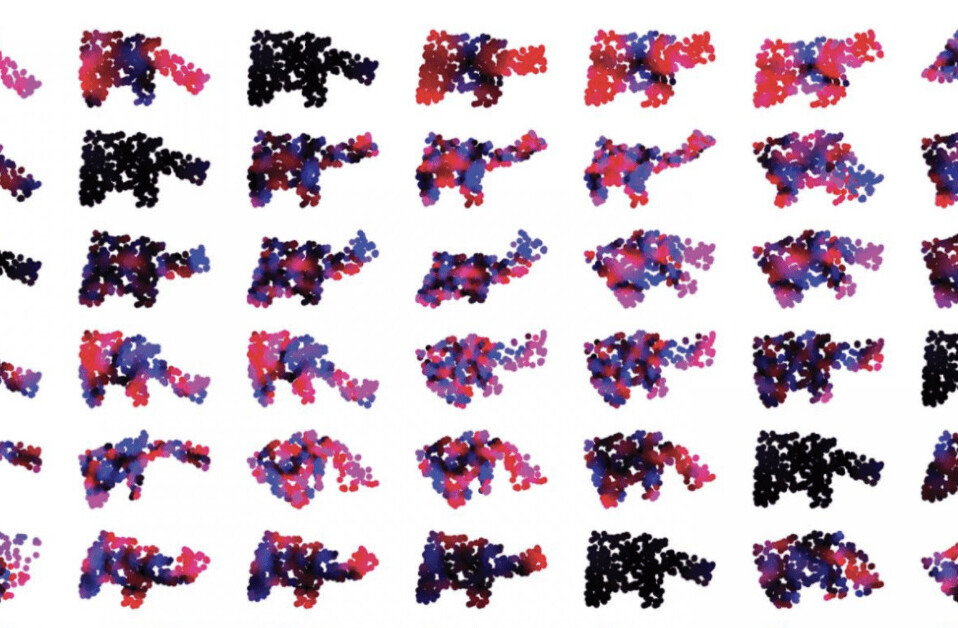

Micro-electrodes inserted in the dorsomedial prefrontal cortex recorded the behavior of individual neurons as patients listened to short narratives and answered questions about them. For example, participants were presented with this scenario to evaluate how they considered another’s beliefs of reality: “You and Tom see a jar on the table. After Tom leaves, you move the jar to a cabinet. Where does Tom believe the jar to be?”

The participants had to make inferences about another’s beliefs after hearing each story. The experiment did not change the planned surgical approach or alter clinical care.

The experiment basically took a grand concept (brain activity) and dialed it in as much as possible. By adding this layer of knowledge to our collective understanding of how individual neurons communicate and work together to produce what’s ultimately a theory of other minds within our own consciousness, it may become possible to identify and quantify other neuronal systems in action using similar experimental techniques.

It would, of course, be impossible for human scientists to come up with ways to stimulate, observe, and label roughly 86 billion neurons – if for no other reason than the fact that it would take thousands of years just to count them, much less watch them respond to provocation.

Luckily, we’ve entered the artificial intelligence age, and if there’s one thing AI is good at, it’s doing really monotonous things, such as labeling tens of billions of individual neurons, really quickly.

It’s not much of a stretch to imagine the Massachusetts team’s methodology being automated. While it appears the current iteration requires the use of invasive sensors – hence the use of volunteers who were already slated to undergo brain surgery – it’s certainly within the realm of possibility that such fine readings could be achieved with an external device one day.

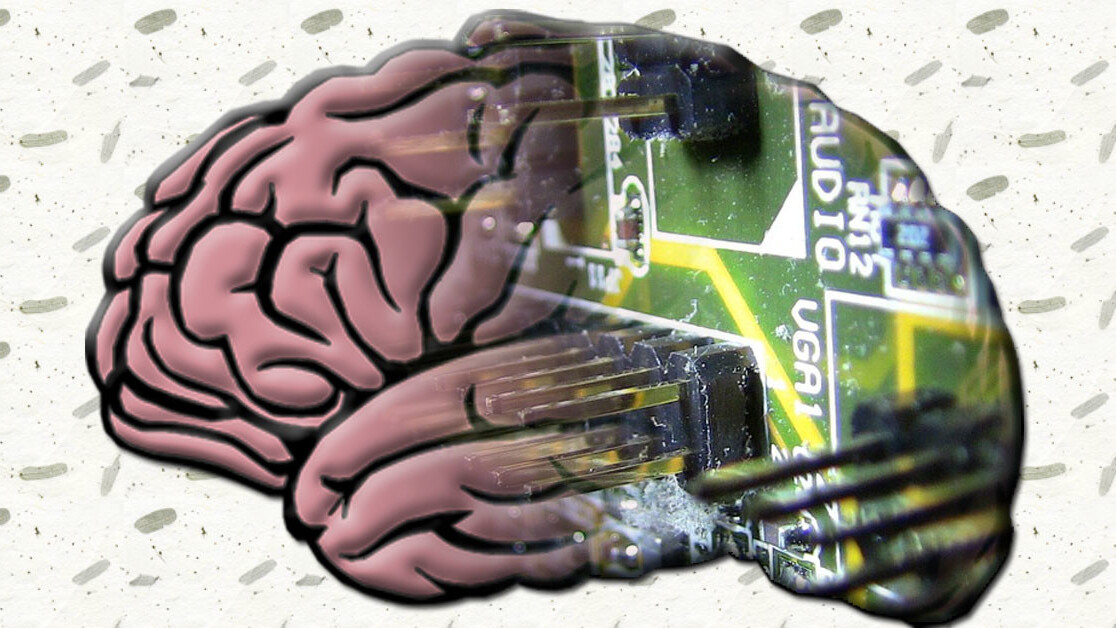

The ultimate goal of such a system would be to identify and map every neuron in the human brain as it operates in real time. It’d be like seeing a hedge maze from a hot air balloon after an eternity lost in its twists.

This would give us a god’s eye view of consciousness in action and, potentially, allow us to replicate it more accurately in machines.
