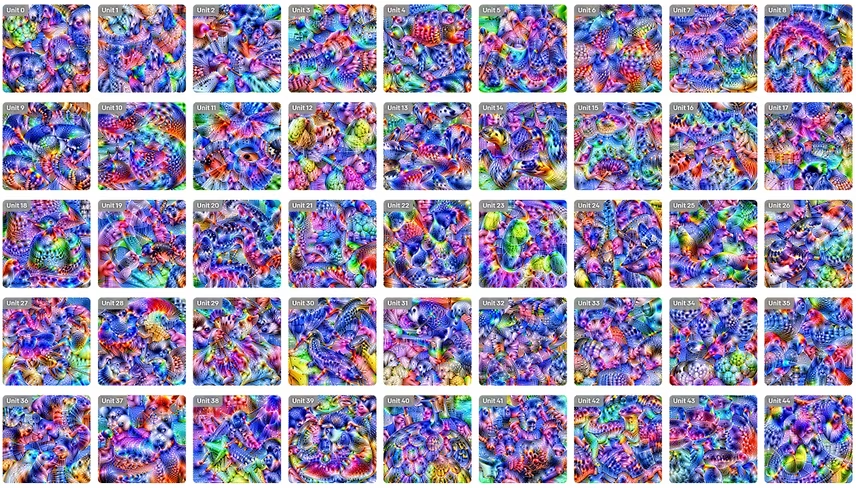

OpenAI today released ‘Microscope,’ a database of complex AI visualizations designed to aid researchers in developing techniques to reverse-engineer neural network nodes. All that’s well and fine, but the real sell here is how dazzling the images are.

Microscope, so named because it puts neural networks under a metaphorical microscope to expose their inner workings, is a visual method for showing what a neural network’s expansive system of layers and nodes looks like.

According to OpenAI’s Microscope website:

> The primary value is in providing persistent, shared artifacts to facilitate long-term comparative study of these models.

Researchers can use these visualizations to compare neural networks, much like biologists compare cells under an actual microscope. Without them, developers are left comparing raw output data, which doesn't always reveal much.


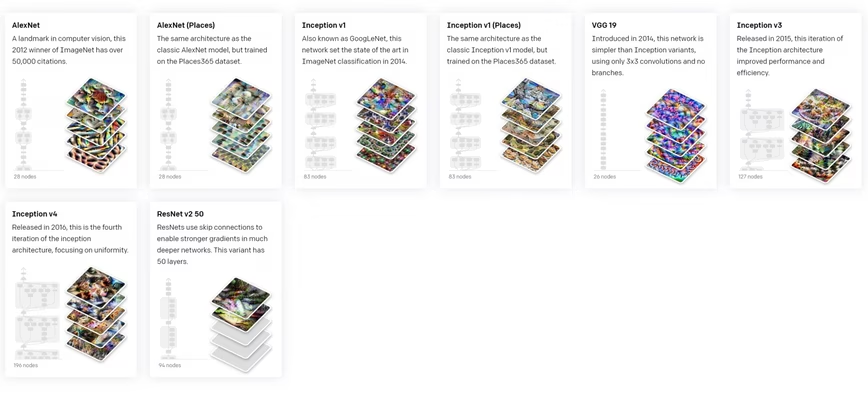

The project currently has images for eight of the most popular computer vision systems ever created, including AlexNet, Inception, and ResNet. Each neural network is represented by a menagerie of images that would challenge a kaleidoscope for sheer exuberance of color and beauty.

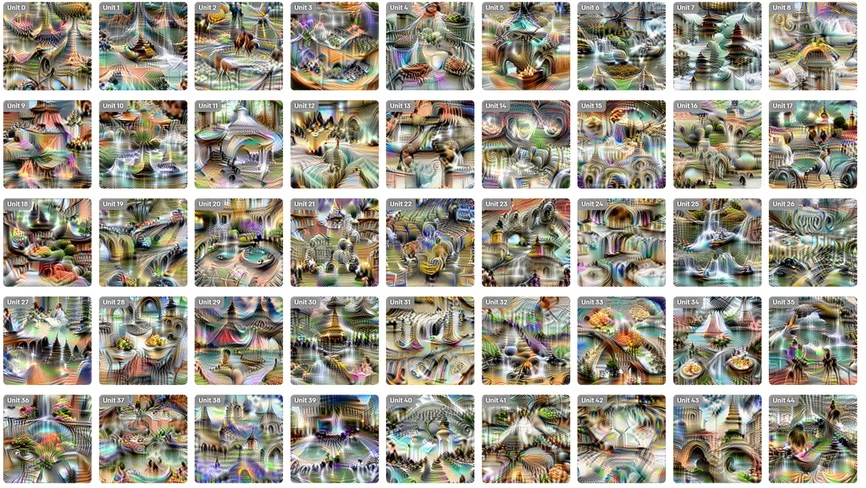

Something about AlexNet (Places), a version of AlexNet trained on the Places dataset of scene images, makes me think of Paris:

And ResNet looks like an aquarium made of stained-glass windows:

According to OpenAI, the researchers focused on these particular models because they’re representative of the field:

> Just as biologists often focus on the study of a few “model organisms,” Microscope focuses on exploring a small number of models in detail. Our initial release includes nine frequently studied vision models, but we plan to expand this set over time.
>
> Even the models we’ve included result in hundreds of thousands of neurons and many model idiosyncrasies. Making everything work smoothly turns out to be more difficult than one would expect!

Hopefully OpenAI’s work will help researchers understand why one neural network behaves the way it does while another, built with a different design, behaves differently. Many of these systems currently operate as a “black box,” meaning it’s unclear why they make the decisions they do. These visualizations should help researchers trace a network’s decisions back through its layers, sort of like an inside-out map.

You can check out the rest of the images and learn more on OpenAI’s Microscope website.
