Big data and analytics are becoming more central to organizations in almost every industry, and as they spread, so do the questions that surround them. A significant sticking point — and, in my opinion, one of the more interesting ones — is the way these organizations choose to store their data, and why.

Today, there are two major models of how data is stored for analysis: the data warehouse and the data lake.

On the surface, they both do the same thing: store data until it’s ready to be called up for processing and analysis. However, the similarities end once you take a deeper look, as data lakes offer a radically different perspective of how data should be kept, and more importantly, which data should be stored.

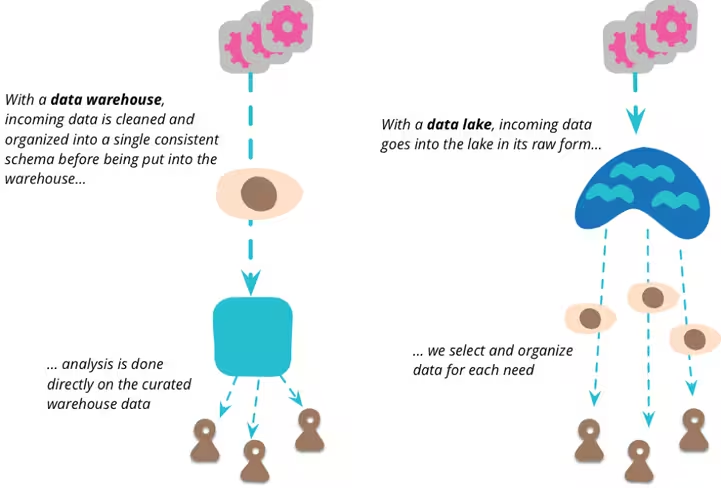

The difference, in simple terms, is that while data warehouses keep data that has been scrubbed and is structured, data lakes take a more inclusive approach, storing raw data along with structured data in a flat architecture.

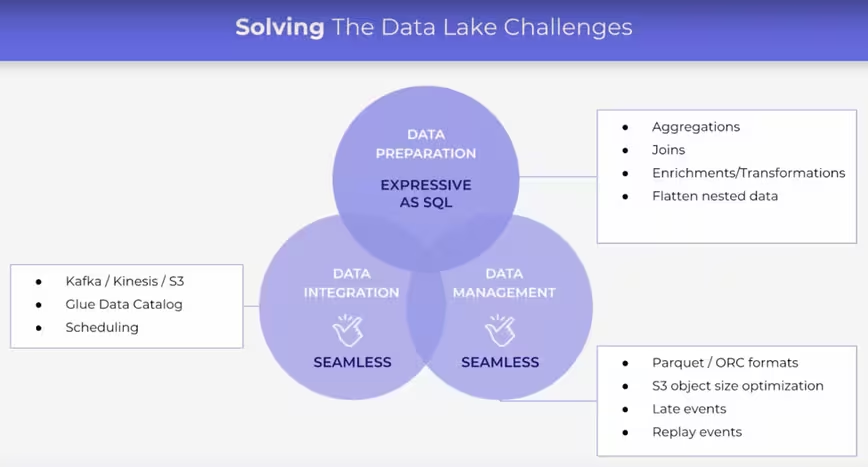

To critics, this makes for data swamps that users must wade through to find useful information. This is an understandable critique, but it’s one I think misses the mark. While data lakes do store data that hasn’t been processed or scrubbed, that doesn’t make them useless.

Quite the opposite, in fact.

Because of this massive pool of information, companies can use data lakes to give themselves a significant edge. More importantly, by not filtering out any of the data they collect, these companies are likely to be better prepared to understand future trends than those which avoid raw signals in favor of structure.

A simpler, more flexible structure

Data warehouses are arguably the more common implementation of data storage today, mostly due to the need for end users to access pre-scrubbed data that is narrowly focused. To achieve this, data warehouses must live with some pretty heavy limitations.

Data warehouses are rigid by definition, which makes them poorly suited to the increasingly flexible world of big data analytics. This makes them unable to cope with different demands, quick changes, and massive data sets that aren’t always pre-cleaned for efficiency. Data lake platforms offer a much faster way to create analysis, as the term’s originator, James Dixon, explains:

If you think of a datamart as a store of bottled water — cleansed and packaged and structured for easy consumption — the data lake is a large body of water in a more natural state. The contents of the data lake stream in from a source to fill the lake, and various users of the lake can come to examine, dive in, or take samples.

In my experience, companies that value the potential of data, as opposed to strictly immediate results, data lakes offer a significant improvement in terms of the pool of information to choose from, and the ways they can access this data.

As opposed to the scrubbed data that warehouses store, data lakes simply aggregate data collected from a variety of sources and provide a bigger toolkit to process it.

The value of taking the plunge

Data lakes don’t discriminate. Instead, they allow a variety of data formats to be stored simultaneously. Unlike data warehouses, these structures let users create ad hoc aggregations at any moment, accessing different data when needed and performing analysis that don’t always adhere to a defined structure.

If you’re at a company that produces large amounts of data, getting rid of most of it when “scrubbing” it means you may be giving up on significant value.

A recent case study by Intel found that creating data lakes and implementing governance policies, as opposed to establishing rigid data silos in a warehouse, yielded significantly better results on an organization-wide level. Dell EMC was able to slash query lag times from an average of four hours to under a minute after taking the plunge into a lake.

In another recent example, user feedback platform Vicomi switched their database management to Upsolver, and claimed to reduce their operational time significantly, as well as provide for simpler and more flexible analytics. Vicomi found the data lake architecture provided a more agile storage solution that made deeper analytics easier.

Even telecommunication monsters like Verizon have come around to the value of data lakes. Apparently, the company went from using 90 percent of its data warehouse for ELT (Extract, Load, and Transform) processes, which eat up resource space, to impressive results. Over five years, they were able to reduce capital expenses in the area by $33 million and managed to up their storage capacity 20x.

To me, anyway, it’s clear that, as opposed to warehouses, which require significant resources, data lakes are cheaper, faster, and more adaptive. The difference is so stark that it’s almost laughable.

More than anything, I find that data lakes highlight the importance of not just data that has been cleaned, but of all data. By focusing on the forest instead of the trees, they also offer a more creative way to view data. Instead of complex builds and ecosystems that limit the ways it can be processed, using a data lake encourages a creative and unstructured approach.

Why I believe in raw data

Perhaps most importantly, the different data flows that gather in a data lake mean that data scientists and analysts have access to a much broader array of information.

Instead of simply looking at transactions, organizations can see how different types of data interact with each other and find previously unseen links or interesting patterns that may lead to larger discoveries. In a world that is becoming increasingly data-dependent, limiting the amount of information available to understand past performance, future trends, and other key patterns is folly.

Get the TNW newsletter

Get the most important tech news in your inbox each week.