Despite its overwhelming success, the human brain peaked about two million years ago. Lucky for us, computers are helping us understand our brains better, but there may be some consequences to giving AI a skeleton key to our minds.

A team of Japanese researchers recently conducted a series of experiments in creating an end-to-end solution for training a neural network to interpret fMRI scans. Previous work achieved similar results; what sets the new method apart is how the AI is trained.

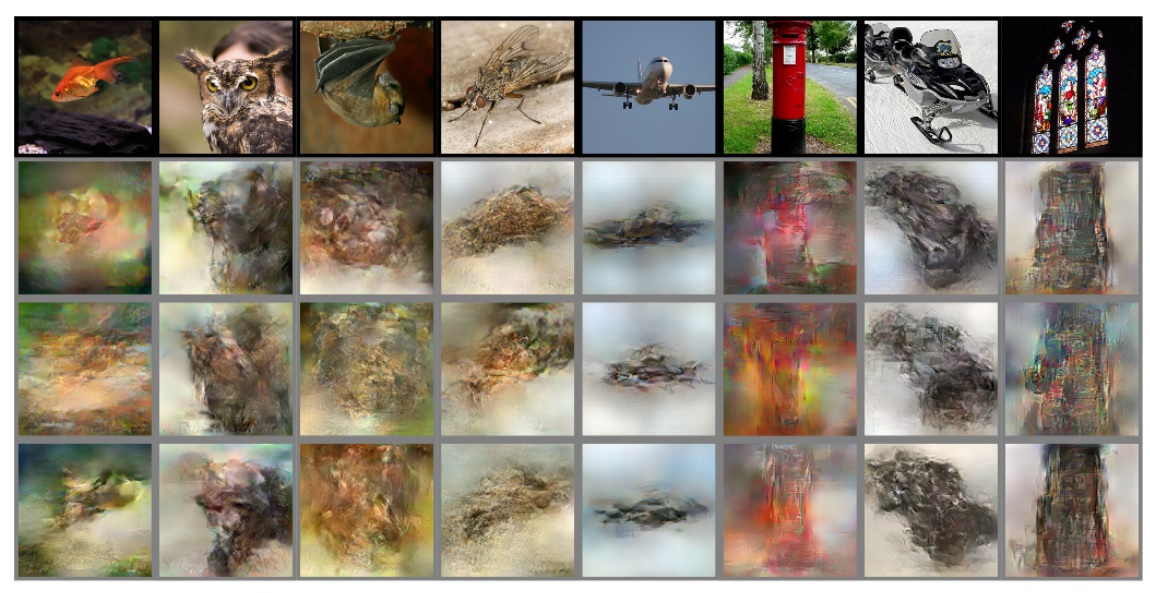

An fMRI is a non-invasive and safe brain scan similar to a normal MRI. The difference is that an fMRI shows only changes in blood flow, which rise and fall with brain activity. The images from these scans can be interpreted by an AI system and ‘translated’ into a visual representation of what the person being scanned was thinking about.

This isn’t totally novel; we reported on the team’s previous efforts a couple of months ago. What’s new is how the machine gets its training data.

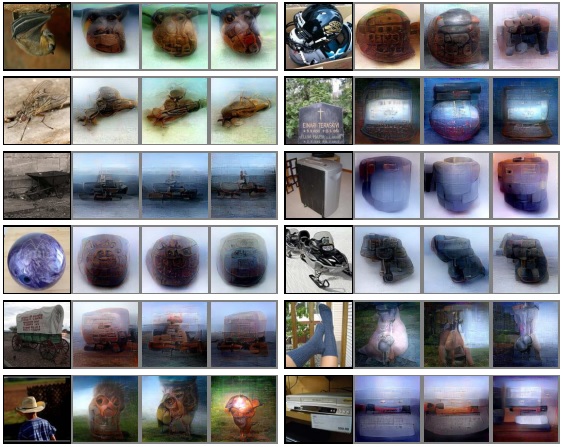

In the earlier research, the group used a neural network that’d been pre-trained on regular images. The results it produced were interpretations of brain scans based on other images it’d seen.

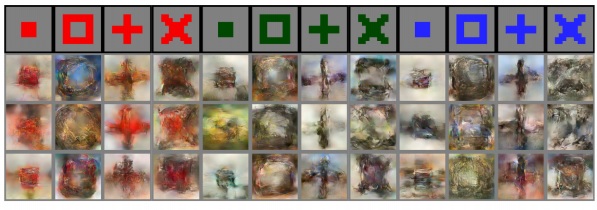

The above images show what a human saw, followed by three different ways an AI interpreted fMRI scans from a person viewing that image. In the earlier work, each image was created by a neural network trained on image recognition using a large data set of regular images. In the new work, the network has been trained solely on images of brain scans.

Basically, the old way was like showing someone a bunch of pictures and then asking them to interpret an inkblot as one of them. Now the researchers are using only the inkblots, and the computers have to try to guess what they represent.

The fMRI scans represent brain activity as a human subject looks at a specific image. Researchers know the input and the computer doesn’t, so humans judge the machine’s output and provide feedback.

Perhaps most amazing: this system was trained on about 6,000 images, a drop in the bucket compared to the millions some neural networks use. The scarcity of brain scans makes it a difficult process, but as you can see, even a small sample data set produces exciting results.

But, when it comes to AI, if you’re not scared then you’re not paying attention.

We’ve already seen machine learning turn a device no more complex than a WiFi router into a human emotion detector. With the right advances in non-invasive brain scanning, it’s possible that information similar to that provided by an fMRI could be gleaned by machines through undetectable means.

AI could hypothetically interpret our brainwaves as we conduct ourselves in, for example, an airport. It could scan for potentially threatening mental imagery like bombs or firearms and alert security.

And there’s also the possibility that this technology could be used by government agencies to circumvent a person’s rights. In the US, this means a person’s right not to be “compelled in any criminal case to be a witness against himself” may no longer apply.

With artificial intelligence interpreting even our rudimentary yes/no thoughts, we could effectively be rendered defenseless against interrogation, and the implications of that are unthinkable.

Then again, maybe this technology will open up a world of telepathic communication through Facebook Messenger, with cloud AI translating our brainwaves. Perhaps we’ll control devices in the future by dedicating a small portion of our “mind’s eye” to visualizing an action and thinking “send” or something similar. This could lay the groundwork for incredibly advanced human-machine interfaces.

Still, if you’re the type of person who doesn’t trust a polygraph, you’re definitely not ready for the AI-powered future where computers can tell what you’re thinking.

H/t: MIT’s Technology Review
