Elon Musk likes to make predictions. You may have seen a few, like these:

- We’re living in a computer simulation

- Tesla will surpass Apple’s market cap by 2025

- Humans can make Mars inhabitable by blasting it with nukes

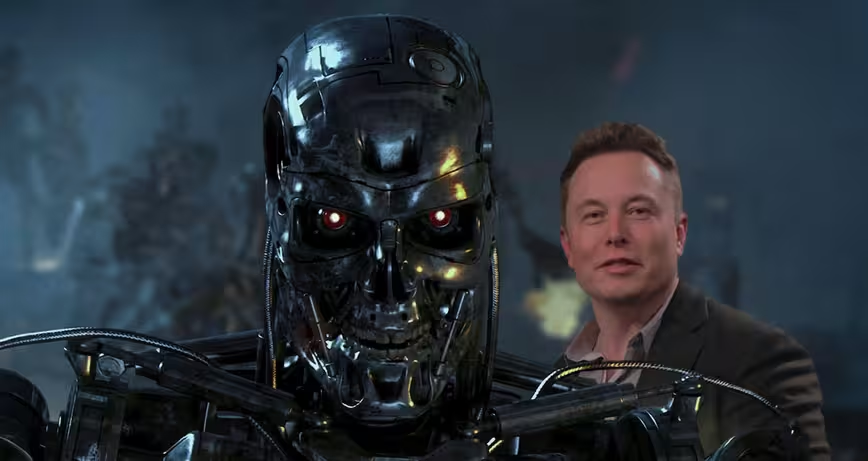

In a recent talk with Musk-led startup Neuralink, the master of predictions made another one: AI is probably going to kill us all. Musk said all efforts to make AI safe have only “a five to 10 percent chance of success.”

Musk’s latest proclamation is on-brand, if nothing else. He has warned many times in years past of the threat AI poses. Back in 2014, he predicted AI was on the verge of something “seriously dangerous.”

“The risk of something seriously dangerous happening is in the five year timeframe. Ten years at most.”

We’ve successfully passed the first part of that prediction, limbs intact. As for the second, it’s beginning to look more logical by the day.

And then just last year Musk declared humans had essentially lost the battle against AI already, and that the only way to beat them was to join them. “Under any rate of advancement in AI, we will be left behind by a lot,” Musk said. “The benign situation with ultra-intelligent AI is that we would be so far below in intelligence we’d be like a pet, or a house cat.”

To combat this, Musk is a big proponent of the neural lace, a mesh implant containing electrodes capable of monitoring brain function. Essentially, it’s a ribbon cable between your brain and a PC, allowing for simultaneous data transfer both ways. This is the basis for Neuralink, one of Musk’s most recent startups — it’s getting hard to keep track, Elon.

It may be time to sound the alarm, if only slightly. Musk, the leader of both Neuralink and OpenAI (which recently developed an AI capable of teaching itself), is deeply invested in AI. If the real-life Tony Stark is this alarmed about it, maybe it’s time for the rest of us to share some of that concern.
