Yesterday, Apple was ordered by a US federal court judge to assist the Department of Justice (DOJ) in unlocking an iPhone used by one of the shooters in the San Bernardino attack.

The ruling requires Apple to build malware that disables a built-in security feature: the one that wipes the device’s data after 10 unsuccessful passcode attempts. Disabling it leaves the phone open to a brute-force attack, in which the government simply guesses passcode after passcode until one works, and the precedent could shape the future of data security for years to come.
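To see why that one setting matters, here is a toy model in Python. The function `brute_force` is hypothetical, not Apple’s code: it assumes a numeric PIN, tries candidates in order, and ignores the delays iOS inserts between failed attempts. A four-digit PIN has only 10,000 possibilities, so ten guesses cover just 0.1% of them; with the wipe disabled, every PIN eventually falls.

```python
from itertools import product
from typing import Optional, Tuple


def brute_force(
    secret: str, digits: int = 4, wipe_after: Optional[int] = 10
) -> Tuple[Optional[str], int]:
    """Toy model (hypothetical): guess every numeric PIN in order until one matches.

    `wipe_after` models the auto-erase setting: after that many failed
    guesses the simulated data is gone and the search stops.
    Pass wipe_after=None to model a device with auto-erase disabled.
    """
    attempts = 0
    for combo in product("0123456789", repeat=digits):
        guess = "".join(combo)
        attempts += 1
        if guess == secret:
            return guess, attempts  # PIN found
        if wipe_after is not None and attempts >= wipe_after:
            return None, attempts  # simulated wipe: no further guesses possible
    return None, attempts  # every combination tried without a match


if __name__ == "__main__":
    print(brute_force("7391"))                   # auto-erase on  -> (None, 10)
    print(brute_force("7391", wipe_after=None))  # auto-erase off -> ('7391', 7392)
```

With auto-erase on, the attacker gets ten guesses and then nothing. With it off, this PIN falls on attempt 7,392, and no four-digit PIN survives more than 10,000.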

Securing this data is something Apple has taken a firm stance on in the past. This ruling would not only undermine Apple’s commitment to protecting its customers but also shift the balance of power from the consumer to the government, should this (currently nonexistent) exploit ever be created.

With the entire world watching, Apple CEO Tim Cook addressed some of these concerns in an open letter, in an attempt to clarify the company’s stance on the order, on privacy and on its continued efforts to fight government demands for this type of access.

What he mostly glossed over is just how much this decision will shape the future of privacy and data security.

A veritable Pandora’s Box

The US government is quick to point out that this isn’t a backdoor and that it’s only intended for use on one phone, not on all of Apple’s customers. But it is a backdoor, and it’s naive to assume it would only be used once.

BREAKING: White House says Department of Justice is not asking Apple to create a new backdoor, asking for access to one device.

— Reuters (@Reuters) February 17, 2016

As it has shown time and time again, the government is clueless about cybersecurity, and about encryption in particular.

You can’t weaken just one phone. The exploit is software-based by design, which means Apple isn’t hacking a single phone; it’s creating software that could then be used to access any other iPhone. Should this exploit ever find its way into the wild, or even into the unchecked hands of law enforcement, it’ll prove to be a security crisis even Orwell’s ‘1984’ couldn’t have predicted.

In a world that’s increasingly concerned about being watched by the government, imagine a future where each of us carries a tracking device packed with easily accessible intelligence. As our mobile devices become further ingrained in our lives, they become an extension of ourselves, and therefore a valuable tool for anyone looking to cripple the very idea of privacy and security.

Even if this exploit were contained and then destroyed, the knowledge of how it was created would live on in the minds of its developers. We’ve seen again and again that even the most secure facilities can’t protect sensitive information. How can we assume that Apple, or the FBI, is fully capable of protecting a piece of software that would instantly become the most valuable exploit on the planet?

It’s just this one time

The @FBI is creating a world where citizens rely on #Apple to defend their rights, rather than the other way around. https://t.co/vdjB6CuB7k

— Edward Snowden (@Snowden) February 17, 2016

Tim Cook wrote:

The government suggests this tool could only be used once, on one phone. But that’s simply not true. Once created, the technique could be used over and over again, on any number of devices. In the physical world, it would be the equivalent of a master key, capable of opening hundreds of millions of locks — from restaurants and banks to stores and homes. No reasonable person would find that acceptable.

It’s just this one time, until the next time.

The “just this one time” argument is a conundrum. If access to this data is crucial to national security, are we to believe that we’ll never again face a similar scenario? And if we do, what stops the government or law enforcement from making the same argument?

Once you create a loophole in encryption technology, you can’t un-create it. You also can’t control who’s capable of using it and who isn’t. If law enforcement, or even Apple, has software capable of disabling security features, why assume they’re the only ones who could take advantage of it? The very thought that this could be a single-use solution is naive and dangerous.

It’s not hard to envision a world where this very exploit, and others like it, become the de facto means of gathering evidence in matters of national security. At that point, who decides what counts as necessary use of the software?

Is it even worth it?

Whether it works or not is irrelevant, but it’s still important to understand just what the government is attempting to do.

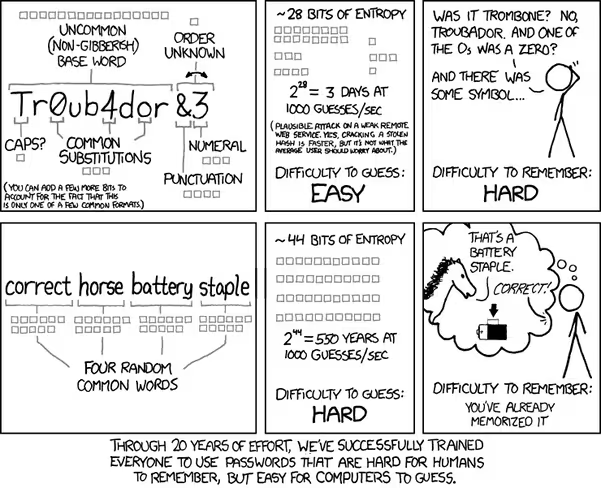

Cracking a strong password, even with modern supercomputers, isn’t as easy as television would have you believe, where a brute-force attack simply runs until a C-list actor proclaims “I’m in.”

A six-character password made up of lowercase letters and numbers could take several years to crack by brute force. Four random words strung together could take centuries. A 20-character password that mixes letters, numbers and special characters isn’t feasibly crackable with modern brute-force technology.
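The arithmetic behind estimates like these is simple: the number of possible passwords is the alphabet size raised to the password length, and the worst-case cracking time is that number multiplied by the time per guess. The sketch below assumes about 80 milliseconds per attempt, the per-guess cost Apple’s iOS security documentation describes for on-device passcode checks. The article doesn’t say what rate its own figures assume, and the results shift by orders of magnitude with that choice. The 7,776-word list (the size of the Diceware list) is likewise an assumption, and `years_to_exhaust` is a hypothetical helper.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600

# Assumption: ~80 ms per guess, as Apple's iOS security documentation
# describes for on-device passcode attempts. Offline cracking hardware
# attacking a leaked hash can be many orders of magnitude faster.
SECONDS_PER_GUESS = 0.08


def years_to_exhaust(alphabet_size: int, length: int,
                     seconds_per_guess: float = SECONDS_PER_GUESS) -> float:
    """Worst-case time, in years, to try every string of `length` symbols."""
    keyspace = alphabet_size ** length  # number of possible passwords
    return keyspace * seconds_per_guess / SECONDS_PER_YEAR


# (alphabet size, length) for each example; the word-list size is an assumption
CASES = {
    "4-digit PIN": (10, 4),
    "6 chars, lowercase letters + digits": (36, 6),
    "4 random words from a 7,776-word list": (7776, 4),
    "20 chars, 94 printable ASCII symbols": (94, 20),
}

if __name__ == "__main__":
    for name, (alphabet, length) in CASES.items():
        print(f"{name}: {years_to_exhaust(alphabet, length):.3g} years")
```

At this assumed rate the six-character case works out to about 5.5 years, in line with the “several years” above, while a four-digit PIN falls in 800 seconds, roughly 13 minutes.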

Of course, that’s if the device owner used a strong password.

But it doesn’t matter. The implications of this court order, and how Apple responds to it, are the foundation for what the future of data security looks like.

Today we have strong tools to protect us from hackers and government overreach, but much like a house of cards, that protection can quickly crumble once you start to shift its foundation.

In this case, encryption is the foundation. Should Apple weaken it, the very idea of privacy and security falls. Bad actors would gain access to our most-used devices and could build additional malware designed to do just about anything: access your camera, photos, email, text messages, call history, location data or social media accounts, or even remotely hijack or disable your device.

Our smartphones are at once our key to information and to communication with anyone in the world, and our biggest liability when it comes to personal security and privacy. There’s a lot riding on how this plays out.

We’re all watching, Apple.
